Strip Pooling:重构池化的空间注意力机制

本文引入strip pooling策略,以1×N或N×1长条形核实现池化,高效获取大范围感受野信息,并构建相关模块。基于此,使用Caltech101的16类数据集,对比ResNet50等经典模型,搭建含该注意力机制的TowerNet,经训练优化,在像素级预测任务中有效建模远程依赖,取得良好效果。

① 项目背景

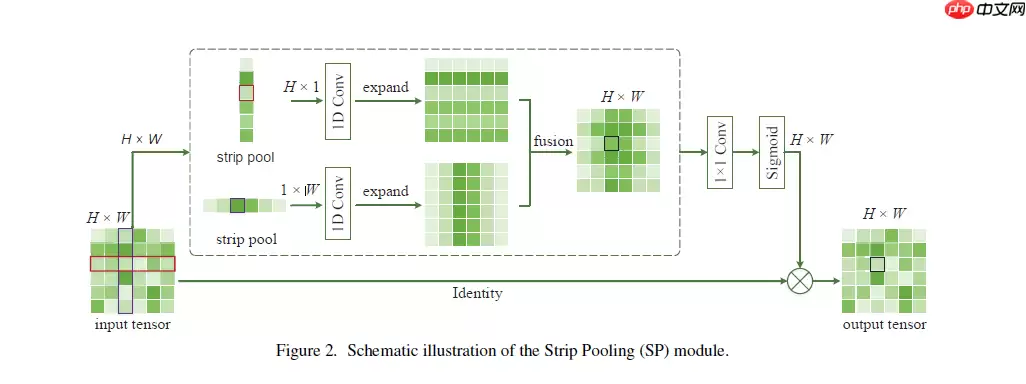

1.事实证明,空间池化在捕获用于场景解析等像素级预测任务的远程上下文信息方面非常有效。在本文中,除了通常具有N x N规则形状的常规空间池化之外,我们通过引入一种称为strip pooling的新池化策略来重新考虑空间池化的公式,该策略考虑了一个长而窄的核,即1 x N或N x1。基于strip pooling,我们进一步研究空间池化体系结构的设计方法。

2.池化操作是在逐像素预测任务中获取较大感受野范围较为高效的做法,传统一般采取N ∗N N* NN∗N的正规矩形区域进行池化,在这篇文章中引入了一种新的池化策略,就是使用长条形的池化kernel来实现池化,即是池化的核心被重新设计为构建了strip pooling操作。

3.这个操作的引入使得网络可以更加高效获取网络大范围感受野下的信息,在这个理念的基础上搭建了使用多个长条池化层构建的新模块。

论文地址:https://arxiv.org/pdf/2003.13328.pdf

② 数据准备

2.1 解压缩数据集

我们将网上获取的数据集以压缩包的方式上传到aistudio数据集中,并加载到我们的项目内。

在使用之前我们进行数据集压缩包的一个解压。

In [3]!unzip -oq /home/aistudio/data/data69664/Images.zip -d work/dataset登录后复制 In [4]

import paddleimport numpy as npfrom typing import Callable#参数配置config_parameters = { "class_dim": 16, #分类数 "target_path":"/home/aistudio/work/", 'train_image_dir': '/home/aistudio/work/trainImages', 'eval_image_dir': '/home/aistudio/work/evalImages', 'epochs':100, 'batch_size': 32, 'lr': 0.01}登录后复制 2.2 划分数据集

接下来我们使用标注好的文件进行数据集类的定义,方便后续模型训练使用。

In [5]import osimport shutiltrain_dir = config_parameters['train_image_dir']eval_dir = config_parameters['eval_image_dir']paths = os.listdir('work/dataset/Images')if not os.path.exists(train_dir): os.mkdir(train_dir)if not os.path.exists(eval_dir): os.mkdir(eval_dir)for path in paths: imgs_dir = os.listdir(os.path.join('work/dataset/Images', path)) target_train_dir = os.path.join(train_dir,path) target_eval_dir = os.path.join(eval_dir,path) if not os.path.exists(target_train_dir): os.mkdir(target_train_dir) if not os.path.exists(target_eval_dir): os.mkdir(target_eval_dir) for i in range(len(imgs_dir)): if ' ' in imgs_dir[i]: new_name = imgs_dir[i].replace(' ', '_') else: new_name = imgs_dir[i] target_train_path = os.path.join(target_train_dir, new_name) target_eval_path = os.path.join(target_eval_dir, new_name) if i % 5 == 0: shutil.copyfile(os.path.join(os.path.join('work/dataset/Images', path), imgs_dir[i]), target_eval_path) else: shutil.copyfile(os.path.join(os.path.join('work/dataset/Images', path), imgs_dir[i]), target_train_path)print('finished train val split!')登录后复制 finished train val split!登录后复制

2.3 数据集定义与数据集展示

2.3.1 数据集展示

我们先看一下解压缩后的数据集长成什么样子,对比分析经典模型在Caltech201抽取16类mini版数据集上的效果

In [7]import osimport randomfrom matplotlib import pyplot as pltfrom PIL import Imageimgs = []paths = os.listdir('work/dataset/Images')for path in paths: img_path = os.path.join('work/dataset/Images', path) if os.path.isdir(img_path): img_paths = os.listdir(img_path) img = Image.open(os.path.join(img_path, random.choice(img_paths))) imgs.append((img, path))f, ax = plt.subplots(4, 4, figsize=(12,12))for i, img in enumerate(imgs[:16]): ax[i//4, i%4].imshow(img[0]) ax[i//4, i%4].axis('off') ax[i//4, i%4].set_title('label: %s' % img[1])plt.show()登录后复制 登录后复制

2.3.2 导入数据集的定义实现

In [ ]#数据集的定义class Dataset(paddle.io.Dataset): """ 步骤一:继承paddle.io.Dataset类 """ def __init__(self, transforms: Callable, mode: str ='train'): """ 步骤二:实现构造函数,定义数据读取方式 """ super(Dataset, self).__init__() self.mode = mode self.transforms = transforms train_image_dir = config_parameters['train_image_dir'] eval_image_dir = config_parameters['eval_image_dir'] train_data_folder = paddle.vision.DatasetFolder(train_image_dir) eval_data_folder = paddle.vision.DatasetFolder(eval_image_dir) if self.mode == 'train': self.data = train_data_folder elif self.mode == 'eval': self.data = eval_data_folder def __getitem__(self, index): """ 步骤三:实现__getitem__方法,定义指定index时如何获取数据,并返回单条数据(训练数据,对应的标签) """ data = np.array(self.data[index][0]).astype('float32') data = self.transforms(data) label = np.array([self.data[index][1]]).astype('int64') return data, label def __len__(self): """ 步骤四:实现__len__方法,返回数据集总数目 """ return len(self.data)登录后复制 In [ ]from paddle.vision import transforms as T#数据增强transform_train =T.Compose([T.Resize((256,256)), #T.RandomVerticalFlip(10), #T.RandomHorizontalFlip(10), T.RandomRotation(10), T.Transpose(), T.Normalize(mean=[0, 0, 0], # 像素值归一化 std =[255, 255, 255]), # transforms.ToTensor(), # transpose操作 + (img / 255),并且数据结构变为PaddleTensor T.Normalize(mean=[0.50950350, 0.54632660, 0.57409690],# 减均值 除标准差 std= [0.26059777, 0.26041326, 0.29220656])# 计算过程:output[channel] = (input[channel] - mean[channel]) / std[channel] ])transform_eval =T.Compose([ T.Resize((256,256)), T.Transpose(), T.Normalize(mean=[0, 0, 0], # 像素值归一化 std =[255, 255, 255]), # transforms.ToTensor(), # transpose操作 + (img / 255),并且数据结构变为PaddleTensor T.Normalize(mean=[0.50950350, 0.54632660, 0.57409690],# 减均值 除标准差 std= [0.26059777, 0.26041326, 0.29220656])# 计算过程:output[channel] = (input[channel] - mean[channel]) / std[channel] ])登录后复制

2.3.3 实例化数据集类

根据所使用的数据集需求实例化数据集类,并查看总样本量。

In [ ]train_dataset =Dataset(mode='train',transforms=transform_train)eval_dataset =Dataset(mode='eval', transforms=transform_eval )#数据异步加载train_loader = paddle.io.DataLoader(train_dataset, places=paddle.CUDAPlace(0), batch_size=32, shuffle=True, #num_workers=2, #use_shared_memory=True )eval_loader = paddle.io.DataLoader (eval_dataset, places=paddle.CUDAPlace(0), batch_size=32, #num_workers=2, #use_shared_memory=True )print('训练集样本量: {},验证集样本量: {}'.format(len(train_loader), len(eval_loader)))登录后复制 训练集样本量: 45,验证集样本量: 12登录后复制

③ 模型选择和开发

3.1 对比网络构建

本次我们选取了经典的卷积神经网络resnet50,vgg19,mobilenet_v2来进行实验比较。

In [ ]network = paddle.vision.models.vgg19(num_classes=16)#模型封装model = paddle.Model(network)#模型可视化model.summary((-1, 3,256 , 256))登录后复制 In [ ]

network = paddle.vision.models.resnet50(num_classes=16)#模型封装model2 = paddle.Model(network)#模型可视化model2.summary((-1, 3,256 , 256))登录后复制

3.2 对比网络训练

In [ ]#优化器选择class SaveBestModel(paddle.callbacks.Callback): def __init__(self, target=0.5, path='work/best_model', verbose=0): self.target = target self.epoch = None self.path = path def on_epoch_end(self, epoch, logs=None): self.epoch = epoch def on_eval_end(self, logs=None): if logs.get('acc') > self.target: self.target = logs.get('acc') self.model.save(self.path) print('best acc is {} at epoch {}'.format(self.target, self.epoch))callback_visualdl = paddle.callbacks.VisualDL(log_dir='work/vgg19')callback_savebestmodel = SaveBestModel(target=0.5, path='work/best_model')callbacks = [callback_visualdl, callback_savebestmodel]base_lr = config_parameters['lr']epochs = config_parameters['epochs']def make_optimizer(parameters=None): momentum = 0.9 learning_rate= paddle.optimizer.lr.CosineAnnealingDecay(learning_rate=base_lr, T_max=epochs, verbose=False) weight_decay=paddle.regularizer.L2Decay(0.0001) optimizer = paddle.optimizer.Momentum( learning_rate=learning_rate, momentum=momentum, weight_decay=weight_decay, parameters=parameters) return optimizeroptimizer = make_optimizer(model.parameters())model.prepare(optimizer, paddle.nn.CrossEntropyLoss(), paddle.metric.Accuracy())model.fit(train_loader, eval_loader, epochs=100, batch_size=1, # 是否打乱样本集 callbacks=callbacks, verbose=1) # 日志展示格式登录后复制 3.3 StripPooling注意力机制

3.3.1 SP模块的介绍

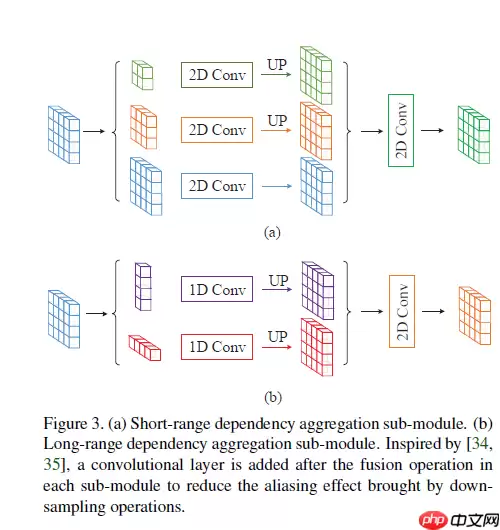

这两种依赖关系对于网络的预测来说都至关重要。因此,MPM分别使用这两个分支生成对应的特征图,然后将两个子模块的输出拼接并用1x1卷积得到最终的输出特征。

In [4]import paddlefrom paddle.fluid.layers.nn import transposeimport paddle.nn as nnimport mathimport paddle.nn.functional as Fclass SPBlock(nn.Layer): def __init__(self, inplanes, outplanes, norm_layer=None): super(SPBlock, self).__init__() midplanes = outplanes self.conv1 = nn.Conv2D(inplanes, midplanes, kernel_size=(3, 1), padding=(1, 0), bias_attr=False) self.bn1 = nn.BatchNorm(midplanes) self.conv2 = nn.Conv2D(inplanes, midplanes, kernel_size=(1, 3), padding=(0, 1), bias_attr=False) self.bn2 = nn.BatchNorm(midplanes) self.conv3 = nn.Conv2D(midplanes, outplanes, kernel_size=1, bias_attr=True) self.pool1 = nn.AdaptiveAvgPool2D((None, 1)) self.pool2 = nn.AdaptiveAvgPool2D((1, None)) self.relu = nn.ReLU() self.sigmoid = nn.Sigmoid() def forward(self, x): identity = x _, _, h, w = x.shape x1 = self.pool1(x) x1 = self.conv1(x1) x1 = self.bn1(x1) x1 = paddle.expand(x1,[-1, -1, h, w]) #x1 = F.interpolate(x1, (h, w)) x2 = self.pool2(x) x2 = self.conv2(x2) x2 = self.bn2(x2) x2 = paddle.expand(x2,[-1, -1, h, w]) #x2 = F.interpolate(x2, (h, w)) x = self.relu(x1 + x2) x = x * self.sigmoid(self.conv3(x)) return xif __name__ == '__main__': x = paddle.randn(shape=[1, 16, 64, 128]) # b, c, h, w sp_model = SPBlock(16,16) y = sp_model(x) print(y.shape)登录后复制

[1, 16, 64, 128]登录后复制

3.3.2 注意力多尺度特征融合卷积神经网络的搭建

In [ ]import paddle.nn.functional as F# 构建模型(Inception层)class Inception(paddle.nn.Layer): def __init__(self, in_channels, c1, c2, c3, c4): super(Inception, self).__init__() # 路线1,卷积核1x1 self.route1x1_1 = paddle.nn.Conv2D(in_channels, c1, kernel_size=1) # 路线2,卷积层1x1、卷积层3x3 self.route1x1_2 = paddle.nn.Conv2D(in_channels, c2[0], kernel_size=1) self.route3x3_2 = paddle.nn.Conv2D(c2[0], c2[1], kernel_size=3, padding=1) # 路线3,卷积层1x1、卷积层5x5 self.route1x1_3 = paddle.nn.Conv2D(in_channels, c3[0], kernel_size=1) self.route5x5_3 = paddle.nn.Conv2D(c3[0], c3[1], kernel_size=5, padding=2) # 路线4,池化层3x3、卷积层1x1 self.route3x3_4 = paddle.nn.MaxPool2D(kernel_size=3, stride=1, padding=1) self.route1x1_4 = paddle.nn.Conv2D(in_channels, c4, kernel_size=1) def forward(self, x): route1 = F.relu(self.route1x1_1(x)) route2 = F.relu(self.route3x3_2(F.relu(self.route1x1_2(x)))) route3 = F.relu(self.route5x5_3(F.relu(self.route1x1_3(x)))) route4 = F.relu(self.route1x1_4(self.route3x3_4(x))) out = [route1, route2, route3, route4] return paddle.concat(out, axis=1) # 在通道维度(axis=1)上进行连接# 构建 BasicConv2d 层def BasicConv2d(in_channels, out_channels, kernel, stride=1, padding=0): layer = paddle.nn.Sequential( paddle.nn.Conv2D(in_channels, out_channels, kernel, stride, padding), paddle.nn.BatchNorm2D(out_channels, epsilon=1e-3), paddle.nn.ReLU()) return layer# 搭建网络class TowerNet(paddle.nn.Layer): def __init__(self, in_channel, num_classes): super(TowerNet, self).__init__() self.b1 = paddle.nn.Sequential( BasicConv2d(in_channel, out_channels=64, kernel=3, stride=2, padding=1), paddle.nn.MaxPool2D(2, 2)) self.b2 = paddle.nn.Sequential( BasicConv2d(64, 128, kernel=3, padding=1), paddle.nn.MaxPool2D(2, 2)) self.b3 = paddle.nn.Sequential( BasicConv2d(128, 256, kernel=3, padding=1), paddle.nn.MaxPool2D(2, 2), SPBlock(256,256)) self.b4 = paddle.nn.Sequential( BasicConv2d(256, 256, kernel=3, padding=1), paddle.nn.MaxPool2D(2, 2), SPBlock(256,256)) self.b5 = paddle.nn.Sequential( Inception(256, 64, (64, 128), (16, 32), 32), paddle.nn.MaxPool2D(2, 2), SPBlock(256,256), Inception(256, 64, (64, 128), (16, 32), 32), paddle.nn.MaxPool2D(2, 2), SPBlock(256,256), Inception(256, 64, (64, 128), (16, 32), 32)) self.AvgPool2D=paddle.nn.AvgPool2D(2) self.flatten=paddle.nn.Flatten() self.b6 = paddle.nn.Linear(256, num_classes) def forward(self, x): x = self.b1(x) x = self.b2(x) x = self.b3(x) x = self.b4(x) x = self.b5(x) x = self.AvgPool2D(x) x = self.flatten(x) x = self.b6(x) return x登录后复制 In [ ]

model = paddle.Model(TowerNet(3, config_parameters['class_dim']))model.summary((-1, 3, 256, 256))登录后复制

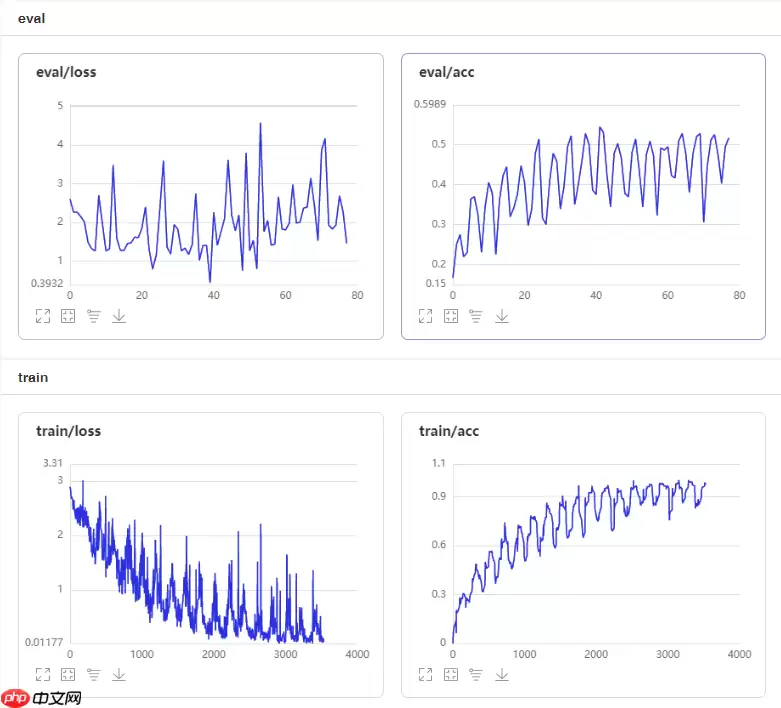

④改进模型的训练和优化器的选择

In [ ]#优化器选择class SaveBestModel(paddle.callbacks.Callback): def __init__(self, target=0.5, path='work/best_model', verbose=0): self.target = target self.epoch = None self.path = path def on_epoch_end(self, epoch, logs=None): self.epoch = epoch def on_eval_end(self, logs=None): if logs.get('acc') > self.target: self.target = logs.get('acc') self.model.save(self.path) print('best acc is {} at epoch {}'.format(self.target, self.epoch))callback_visualdl = paddle.callbacks.VisualDL(log_dir='work/CA_Inception_Net')callback_savebestmodel = SaveBestModel(target=0.5, path='work/best_model')callbacks = [callback_visualdl, callback_savebestmodel]base_lr = config_parameters['lr']epochs = config_parameters['epochs']def make_optimizer(parameters=None): momentum = 0.9 learning_rate= paddle.optimizer.lr.CosineAnnealingDecay(learning_rate=base_lr, T_max=epochs, verbose=False) weight_decay=paddle.regularizer.L2Decay(0.0002) optimizer = paddle.optimizer.Momentum( learning_rate=learning_rate, momentum=momentum, weight_decay=weight_decay, parameters=parameters) return optimizeroptimizer = make_optimizer(model.parameters())登录后复制 In [ ]model.prepare(optimizer, paddle.nn.CrossEntropyLoss(), paddle.metric.Accuracy())登录后复制 In [24]

model.fit(train_loader, eval_loader, epochs=100, batch_size=1, # 是否打乱样本集 callbacks=callbacks, verbose=1) # 日志展示格式登录后复制

⑤项目总结

1.本文设计一个strip pooling模块(SPM),以有效地扩大骨干网络的感受野范围。 更具体地说,SPM由两个途径组成,它们专注于沿水平或垂直空间维度对远程上下文进行编码。对于池化特征图中的每个空间位置,它会对其全局的水平和垂直信息进行编码,然后使用这些编码来平衡其自身的权重以进行特征优化。2.此外,提出了一种新颖的附加残差构建模块,称为混合池模块(MPM),以进一步在高语义级别上对远程依赖性进行建模。 它通过利用具有不同内核形状的池操作来收集内容丰富的上下文信息,以探查具有复杂场景的图像。3.利用SP注意力机制改进模型设计,在相关评测数据集上取得了SOTA的效果。

相关攻略

Daniel Miessler 曾一针见血地指出一个普遍困境:“许多公司并非不愿采用AI,而是根本不知从何用起。人们对AI效果未达预期的多数失望,根源往往在于无法精准描述自身的真实需求。” 这一洞察揭示了AI应用的核心前提:AI本质是高效执行者,它依赖明确、清晰的指令。意图模糊,再先进的模型也无能为

如今的人工智能技术,已经能够在毫秒级别识别厨房照片中的物体,精准分割街景中的每个元素,甚至生成现实中从未存在过的逼真室内图像。然而,当你要求它走进一个真实的房间,回答“哪个物品放在哪个架子上”、“桌子距离墙壁有多远”或“天花板与窗户的边界在何处”这类涉及空间关系的问题时,它的局限性便暴露无遗。 当前

AI时代,真正决定企业成败的,不只是技术能力,更是CEO与CIO的协同方式。CEO必须亲自“站台”,统一战略与外部叙事,但不能事必躬亲;CIO则成为关键执行者与“现实校准器”,既要看懂技术,更要转化商业价值。 回顾过去五十年技术驱动的商业变革,从互联网的爆炸式增长到开源技术的兴起,每一次浪潮都留下了

最近,社交平台上的一则吐槽引发了广泛关注。一位网友在使用一款名为“飞鸭AI记账”的应用时,遭遇了令人极度不适的对话。本是一次普通的消费记录,却演变成了一场由AI主导的“冒犯秀”。 根据网友晒出的截图,事情经过是这样的:用户先告知AI“给爸爸买衣服159元”。没想到,AI的回复直接越过了底线:“159

继ClawdBot事件(这款自托管AI助手因日均曝出2 6个CVE高危漏洞而引发业界震动)之后,我们决定对当前AI基础设施的真实安全状况进行一次深度剖析。 软件行业过去数十年在安全交付产品方面积累的经验与规范,如今正面临前所未有的冲击。企业正竞相构建自有的大语言模型基础设施,这背后既有对AI作为核心

热门专题

热门推荐

2026年5月6日,存储行业迎来一个标志性节点:美光正式向市场交付其6600 ION系列固态硬盘的245TB版本。这不仅刷新了商用SSD的容量纪录,更意味着数据中心存储的密度与能效竞赛,进入了新的阶段。 这款“巨无霸”SSD的核心,是美光自研的第九代(G9)276层3D QLC NAND闪存颗粒。为

2026年5月5日,小米汽车旗下备受期待的首款增程式全尺寸SUV——内部代号“昆仑”的路试谍照正式曝光。作为一款瞄准多人口家庭用户市场的战略车型,“昆仑”采用了当前市场热门的增程式混合动力技术路线,旨在为用户提供无里程焦虑的纯电出行体验。 据悉,这款全新SUV计划于2026年下半年正式上市发布,其亮

备受期待的荣耀600系列手机国行版本,即将在本月下旬正式登陆国内市场。根据最新备案信息,该系列将提供六款独具特色的配色供消费者选择,分别为:象征喜悦的“好事橙”、寓意美好的“幸运星”、清新淡雅的“茉莉白”、活力十足的“青苹果”、深邃迷人的“光羽蓝”,以及永不过时的经典“曜石黑”。 从硬件配置来看,荣

近日,游戏界传来一则颇具讨论价值的消息。由前《巫师3》总监Konrad Tomaszkiewicz领衔的工作室Rebel Wolves,正式公布了其正在开发的黑暗奇幻角色扮演游戏《黎明行者之血》的一项激进设计:玩家在完成序章后,几乎可以跳过所有支线任务与地图探索,直接挑战位于城堡中的最终BOSS。

在王者荣耀的对抗路中,老夫子凭借其独特的机制,始终是令对手头疼的强势英雄。想要真正掌握这位“单挑王”,一套精准的攻速铭文搭配与灵活的出装思路,是奠定你线上压制力与团战影响力的关键。正确的配置,能让你从对线期开始就掌握主动权。 攻速铭文搭配:构筑前期优势的核心 铭文是英雄前期作战能力的基石。对于依赖普