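Introduction to Artificial Intelligence: Cases and Practice | Boston Housing Price Prediction with a Feedforward Neural Network

This article walks through the Boston housing price prediction experiment. Its goal is to master the fundamentals of feedforward neural networks and the use of the PaddlePaddle framework. Using the Boston housing dataset, the experiment goes through data preparation, model construction and training configuration to build a feedforward neural network. It trains the network with the MSE loss and the Adam optimizer, evaluates it with the R² coefficient, and finally uses it to predict house prices.

Boston Housing Price Prediction Experiment

1. Experiment Overview

1.1 Objectives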

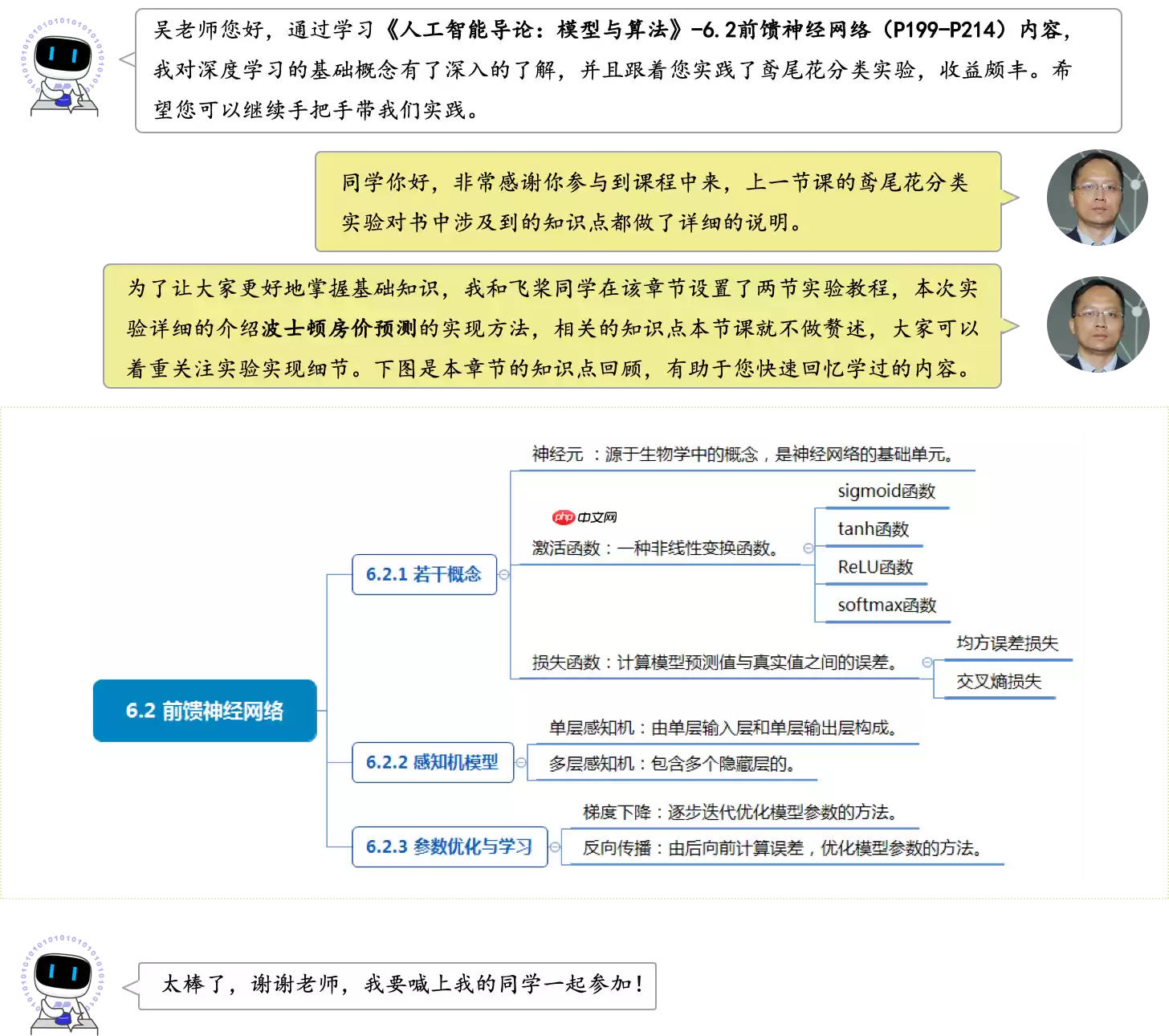

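The objectives are:

- understand and master the fundamentals of feedforward neural networks, including neurons, activation functions, loss functions, the single-layer perceptron and the multilayer perceptron;
- master the design principles and construction workflow of feedforward network architectures;
- become familiar with building feedforward neural networks with PaddlePaddle (飞桨), an open-source deep learning framework.

1.2 Experiment Content

In daily life we often meet classification and prediction problems in which the target variable is influenced by several factors. Correlation coefficients can be used to judge how important each factor is. House prices are likewise affected by many factors, for example:

- whether the city is a first-tier or a second-tier city;
- how convenient the surrounding transportation is, such as whether a subway line is nearby;
- the schools and hospitals in the area.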


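This experiment analyzes the Boston housing price prediction task, a classic introductory case in AI courses. Building on the theory of feedforward neural networks, the "Boston housing price prediction" experiment shows how to model a regression task with deep learning.

1.3 Environment

The experiment can be run either on the training platform or in a local environment. The training platform is recommended.

- Training platform: no environment setup is needed. The platform comes with everything the experiment requires and runs code online. It also provides free compute, so even complex models can be trained without worrying about computing resources.
- Local environment: you need to install Python 3.7, PaddlePaddle 2.0 or later, and the other required packages. See the Local Environment Installation Guide for the exact requirements and setup code.

1.4 Experiment Design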

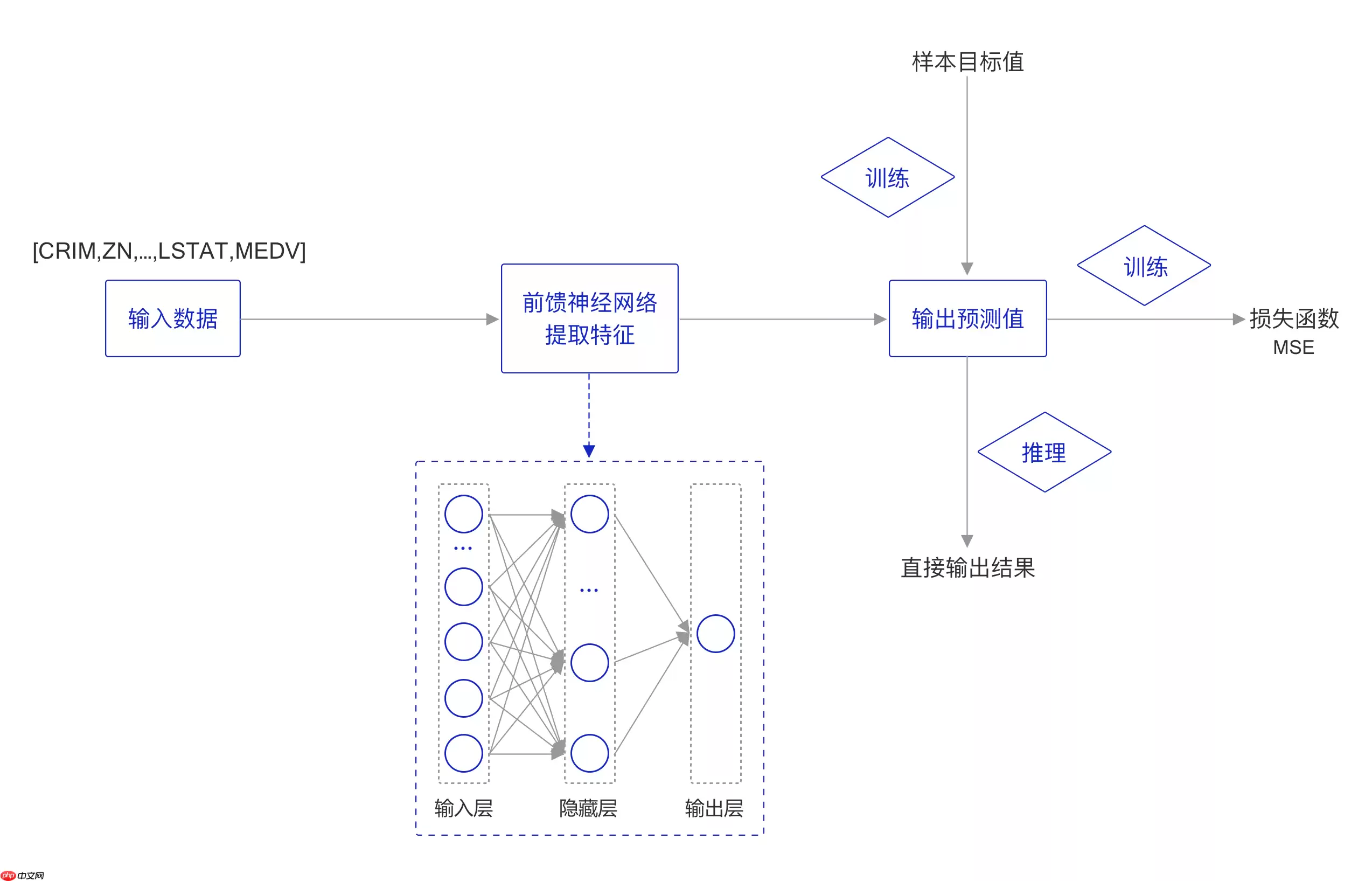

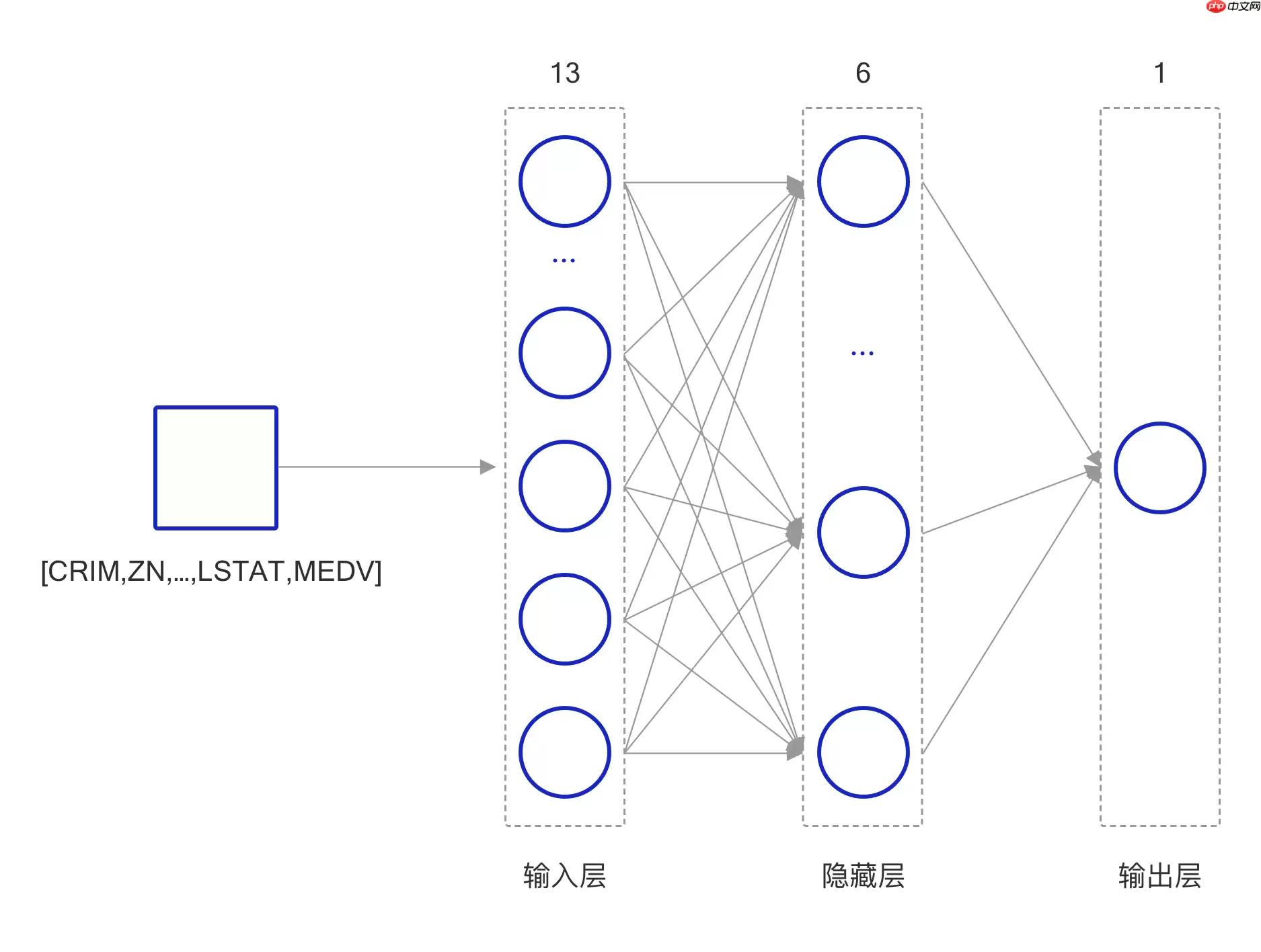

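The approach is similar to that of the Iris classification experiment: build a simple feedforward neural network with the structure shown in Figure 2.

- For each input record, the feedforward network first extracts a feature representation.
- The MSE loss then measures the error between the true value and the predicted value, and this error drives training.
- At inference time, the model's output is used directly as the final prediction.

Figure 2: Experiment design

2. Detailed Implementation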

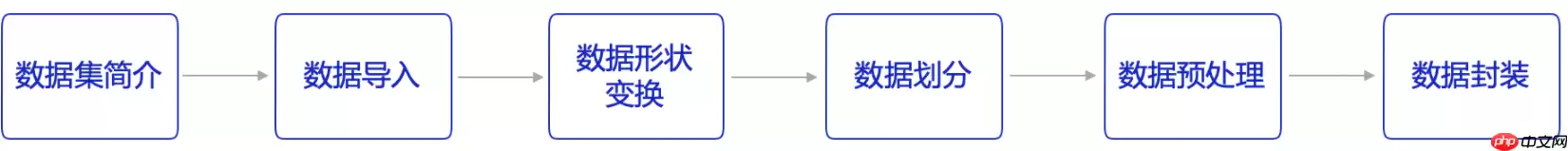

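The workflow is shown in Figure 3 and consists of the following 7 steps:

1. Data preparation: preprocess the data into the format the network expects, so that the model can read it correctly;
2. Model construction: build the feedforward network;
3. Training configuration: instantiate the model and specify the optimization algorithm (optimizer);
4. Model training: run multiple epochs, adjusting the parameters to reach good performance;
5. Model saving: save the model parameters;
6. Model evaluation: evaluate the trained model and inspect the R² coefficient;
7. Model inference: use one housing record to check the model's regression output.

Figure 3: Experiment workflow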

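2.1 Data Preparation

The training data is the classic academic "Boston housing" dataset. A custom feedforward neural network is built with the PaddlePaddle core framework to solve the house price regression task.

Data preparation consists of 6 parts, as shown in Figure 4:

Figure 4: Data preparation workflow

Note:

All code in this tutorial can be run directly on AI Studio, and the outputs shown come from real runs. Later cells depend on earlier ones, so run every cell in order. Otherwise, commands will fail.

2.1.1 Dataset Overview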

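The Boston housing dataset comes from the UCI Machine Learning Repository, where it is no longer available, and dates from 1978. Each row describes housing in a town or suburb around Boston. There are 506 rows, i.e. 506 samples. Each sample records 13 factors that may affect house prices, plus the median value of the homes (MEDV). The goal is to build a model that predicts the price from these 13 factors, as shown in Figure 5.

Figure 5: Factors affecting Boston house prices

2.1.2 Importing the Data

The following code imports the data so we can inspect the structure of the Boston housing dataset. The data is stored locally in ./work/datasets/housing.data.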

```python
# coding=utf-8
# Import the required packages
import os
import random

import numpy as np
import paddle
from sklearn.metrics import r2_score
```


```python
# Read the raw data
datafile = './work/datasets/housing.data'
data = np.fromfile(datafile, sep=' ')
print(data.shape)
```

```
(7084,)
```

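2.1.3 Reshaping the Data

As the previous step shows, the raw data is read as a 1-D array in which all values are concatenated. It therefore has to be reshaped into a 2-D matrix with one sample of 14 values per row. Each sample contains 13 X values (the features that affect the price) and one Y value (the median price of the homes).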

```python
# After loading, the data is a 1-D array: items 0-13 are the first record,
# items 14-27 the second record, and so on.
# Reshape the raw data into the form N x 14
feature_names = ['CRIM', 'ZN', 'INDUS', 'CHAS', 'NOX', 'RM', 'AGE',
                 'DIS', 'RAD', 'TAX', 'PTRATIO', 'B', 'LSTAT', 'MEDV']
feature_num = len(feature_names)
data = data.reshape([data.shape[0] // feature_num, feature_num])

# Inspect the first record
x = data[0]
print(x.shape)
print(x)
```

```
(14,)
[6.320e-03 1.800e+01 2.310e+00 0.000e+00 5.380e-01 6.575e+00 6.520e+01 4.090e+00 1.000e+00 2.960e+02 1.530e+01 3.969e+02 4.980e+00 2.400e+01]
```

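```python
# Take a quick look at the distribution of each column
import pandas as pd
import matplotlib.pyplot as plt

data_df = pd.DataFrame(data, columns=feature_names)
data_df.hist(layout=(7, 2), bins=30, figsize=(16, 30))
plt.show()
# Some columns are very unevenly distributed; in this section we simply try to fit the data with a neural network
```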


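2.1.4 Splitting the Dataset

The dataset is split into two parts:

- a training set, used to determine the model parameters;
- a test set, used to assess the model.

Here the first 80% of the records are used for training and the remaining 20% for testing. The split follows the file order, without shuffling. Printing the shape of the training set shows 404 samples, each with 13 features and 1 target value.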

```python
ratio = 0.8
offset = int(data.shape[0] * ratio)
# The first 80% of the records form the training set
training_data = data[:offset]
training_data.shape
```

```
(404, 14)
```
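The shapes printed in this section follow from simple arithmetic on 506 samples with 14 columns each. The short check below only restates numbers already given in the text: 506 samples, 14 columns and an 80/20 split. It reproduces the array length 7084 and the 404 training samples printed above, and it also gives the resulting number of test samples.

```python
# Sanity-check the shapes reported in this section (506 samples, 14 columns, 80/20 split).
num_samples, num_columns = 506, 14
ratio = 0.8

flat_length = num_samples * num_columns   # length of the 1-D array read by np.fromfile
offset = int(num_samples * ratio)         # same formula as in the split code above
num_train = offset
num_test = num_samples - offset

print(flat_length, num_train, num_test)   # 7084 404 102
```

Note that int() truncates: 506 × 0.8 = 404.8, so 404 samples go to training and 102 to testing.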

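2.1.5 Normalization

Each column is normalized with statistics from the training set as (x - mean) / (max - min). On the training data, this centers every column near 0 and squeezes it into an interval of width 1, so the values lie within [-1, 1]. Test values outside the training range can fall outside this interval.

Normalization has two benefits:

- training is more efficient;
- all features share a comparable scale, so the size of the weight in front of each feature gives a rough indication of how much that variable contributes to the prediction.

Note that the loop also normalizes the last column, MEDV. Predictions therefore have to be denormalized before they can be read as prices.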

```python
# Compute the per-column maximum, minimum and mean of the training set
maximums, minimums, avgs = \
    training_data.max(axis=0), \
    training_data.min(axis=0), \
    training_data.sum(axis=0) / training_data.shape[0]

# Normalize every column (including the MEDV label) with the training-set statistics
for i in range(feature_num):
    data[:, i] = (data[:, i] - avgs[i]) / (maximums[i] - minimums[i])
```
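The following is a minimal NumPy sketch of the normalization above and its inverse. It runs on a small synthetic matrix whose values are made up for illustration and are not taken from the housing data. The sketch shows that, with training-set statistics, each normalized training column spans an interval of width 1 around 0. It also shows that the inverse x = z·(max - min) + mean recovers the original values. The inference step later applies this same inverse to the price column. The helper names normalize and denormalize are hypothetical.

```python
import numpy as np

def normalize(data, train_rows):
    """Hypothetical helper: normalize every column with stats from the first `train_rows` rows."""
    train = data[:train_rows]
    maximums = train.max(axis=0)
    minimums = train.min(axis=0)
    avgs = train.mean(axis=0)
    normalized = (data - avgs) / (maximums - minimums)
    return normalized, maximums, minimums, avgs

def denormalize(z, maximum, minimum, avg):
    """Hypothetical helper: invert the normalization for a single column."""
    return z * (maximum - minimum) + avg

rng = np.random.default_rng(0)
# Synthetic data: 10 rows, 3 columns on very different scales (illustrative only)
data = rng.uniform(low=[0.0, 100.0, 5.0], high=[1.0, 700.0, 50.0], size=(10, 3))

z, maxs, mins, avgs = normalize(data, train_rows=8)
train_z = z[:8]
print(train_z.max(axis=0) - train_z.min(axis=0))  # each training column spans width 1 (up to rounding)
print(np.abs(train_z).max() <= 1.0)               # training values stay within [-1, 1]

# Recover the last column (playing the role of the price) from its normalized values
restored = denormalize(z[:, -1], maxs[-1], mins[-1], avgs[-1])
print(np.allclose(restored, data[:, -1]))          # True
```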

2.1.6 Wrapping the Data

To make the data convenient to use from the model, the processing steps above are wrapped into a BHPData class:

```python
class BHPData(object):
    def __init__(self, data_path='./work/datasets/housing.data', ratio=0.8):
        # Load the raw data from file
        data = np.fromfile(data_path, sep=' ')
        # Each record has 14 values: the first 13 are features, the 14th is the median house price
        feature_num = 14
        # Reshape the raw data into [N, 14]
        data = data.reshape([data.shape[0] // feature_num, feature_num])
        # Split point: the first 80% of the records for training, the remaining 20% for testing
        offset = int(data.shape[0] * ratio)
        # Compute the max, min and mean of the training set
        train_data = data[:offset]
        maximums = train_data.max(axis=0)
        minimums = train_data.min(axis=0)
        avgs = train_data.sum(axis=0) / train_data.shape[0]
        # Keep the normalization parameters as globals so predictions can be denormalized later
        global max_values
        global min_values
        global avg_values
        max_values = maximums
        min_values = minimums
        avg_values = avgs
        # Normalize every column
        for i in range(feature_num):
            data[:, i] = (data[:, i] - avgs[i]) / (maximums[i] - minimums[i])
        # Convert to float32 and split into training and test sets
        data = data.astype(np.float32)
        self.train_data = data[:offset]
        self.test_data = data[offset:]

    def __call__(self, *args, **kwargs):
        return self.train_data, self.test_data
```

```python
train_data, test_data = BHPData()()
print(train_data.shape)
print(train_data[1, :])
print(test_data.shape)
print(test_data[1, :])
```

```
(404, 14)
[-0.02122729 -0.14232673 -0.09655922 -0.08663366 -0.12907805 0.0168406 0.14904763 0.0721009 -0.20824365 -0.23154674 -0.02406783 0.0519112 -0.06111894 -0.05723872]
(102, 14)
[ 0.74187917 -0.14232673 0.34131294 -0.08663366 0.3318273 -0.1245658 0.36634937 -0.2499626 0.7482781 0.65363073 0.23125131 0.01532733 0.3207795 -0.4261276 ]
```

2.1.7 Reading the Data

Next, a data reader is built by subclassing paddle's Dataset API. Each access returns one sample's features and the corresponding label.

```python
# Build a dataset reader
class Generate(paddle.io.Dataset):
    def __init__(self, data):
        super(Generate, self).__init__()
        # The first 13 columns are features, the last column is the label
        self.x = data[:, :-1]
        self.y = data[:, -1:]

    def __getitem__(self, idx):
        return self.x[idx], self.y[idx]

    def __len__(self):
        return len(self.y)
```

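If data loading becomes a bottleneck, the num_workers parameter of the paddle.io.DataLoader API sets the number of subprocesses used to read data in parallel. For a dataset as small as this one, the default single-process loading is usually sufficient. The signature, reduced to the parameters discussed here, is:

class paddle.io.DataLoader(dataset, batch_size=1, shuffle=False, num_workers=0)

Key parameters:

- batch_size (int|None): number of samples in each mini-batch;
- shuffle (bool): whether to shuffle the indices when generating the mini-batch index list;
- num_workers (int): number of subprocesses used to load data.

The data iterators are built with paddle.io.DataLoader as follows: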

```python
train_data, test_data = BHPData()()
train_generate = Generate(data=train_data)
train_dataloader = paddle.io.DataLoader(train_generate, batch_size=4, shuffle=True)
test_generate = Generate(data=test_data)
test_dataloader = paddle.io.DataLoader(test_generate, batch_size=4)

# Print one mini-batch to check the shapes
for x, y in train_dataloader:
    print(x, y)
    break
```

```
Tensor(shape=[4, 13], dtype=float32, place=CUDAPinnedPlace, stop_gradient=True,
       [[-0.02128843, 0.45767328, -0.26091015, -0.08663366, -0.26899573, 0.08945987, -0.56155998, 0.18599664, -0.25172192, -0.18353005, -0.25811037, 0.04108630, -0.17452954],
        [-0.02072892, -0.14232673, 0.64103508, -0.08663366, 0.10137463, -0.06305976, 0.20260067, -0.17976099, -0.20824365, -0.34428161, 0.11423004, -0.00705844, 0.08043735],
        [-0.02042724, -0.14232673, 0.64103508, -0.08663366, 0.10137463, -0.08701071, 0.32309499, -0.19713861, -0.20824365, -0.34428161, 0.11423004, -0.00181465, 0.17177288],
        [-0.02041027, 0.19767326, -0.13546355, -0.08663366, -0.20315212, 0.12433246, -0.48123044, 0.11980525, 0.00914765, -0.04991835, -0.20491889, 0.03207066, -0.17922048]])
Tensor(shape=[4, 1], dtype=float32, place=CUDAPinnedPlace, stop_gradient=True,
       [[ 0.15387239],
        [-0.08612762],
        [-0.11946095],
        [ 0.19831683]])
```

This completes data preparation. The paddle.io.Dataset subclass returns the features and labels, and the next step feeds the processed data into a neural network.
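The sketch below makes batch_size and shuffle concrete without any framework. It shows what a DataLoader conceptually does with a Dataset such as Generate:

- shuffle the indices once per pass when shuffle is requested;
- cut the indices into chunks of batch_size;
- gather the samples of each chunk.

The function iterate_minibatches is hypothetical and only mimics the behavior described above. It is not how paddle.io.DataLoader is implemented, and it ignores num_workers, drop_last and the other options. It uses synthetic rows with the training-set shape and the batch size of 32 from the training configuration.

```python
import numpy as np

def iterate_minibatches(data, batch_size, shuffle=False, seed=None):
    """Hypothetical helper: yield (x, y) mini-batches from an [N, 14] array."""
    x, y = data[:, :-1], data[:, -1:]          # same feature/label split as Generate
    indices = np.arange(len(data))
    if shuffle:
        np.random.default_rng(seed).shuffle(indices)   # new sample order for this pass
    for start in range(0, len(indices), batch_size):
        batch_idx = indices[start:start + batch_size]  # the last batch may be smaller
        yield x[batch_idx], y[batch_idx]

# 404 synthetic rows with 14 columns, matching the training-set shape (values are random)
train = np.random.default_rng(0).normal(size=(404, 14)).astype(np.float32)

batches = list(iterate_minibatches(train, batch_size=32, shuffle=True, seed=1))
print(len(batches))                                # 13: twelve full batches plus one of 20
print(batches[0][0].shape, batches[0][1].shape)    # (32, 13) (32, 1)
print(batches[-1][0].shape)                        # (20, 13)
```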

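2.2 Model Construction

With the dataset ready, the next step is to design the model. This experiment builds a simple feedforward neural network with the structure shown in Figure 7:

Figure 7: Network structure

The network consists of an input layer, a hidden layer and an output layer:

- Input layer: the Boston house price depends on 13 factors, so the input layer has 13 neurons.
- Hidden layer: it has 6 neurons, each of which receives all 13 input features. Their outputs pass through a sigmoid activation and are then sent to the output layer.
- Output layer: it outputs the predicted price.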
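The 13 → 6 → 1 structure described above can be written out as plain matrix operations. The NumPy sketch below mirrors the forward pass of the Paddle model defined later in this section. It uses the same initialization as that model: weights drawn from N(0, 0.01²) and biases set to 1.0. The sketch runs on a hypothetical batch of 4 normalized samples and also counts the trainable parameters. It only illustrates the computation and does not replace the Paddle model.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(0)
# Parameters laid out like paddle.nn.Linear: weight [in_features, out_features], bias [out_features]
W1 = rng.normal(0.0, 0.01, size=(13, 6))   # input -> hidden
b1 = np.ones(6)                             # hidden bias initialized to 1.0
W2 = rng.normal(0.0, 0.01, size=(6, 1))    # hidden -> output
b2 = np.ones(1)                             # output bias initialized to 1.0

def forward(x):
    hidden = sigmoid(x @ W1 + b1)   # each of the 6 hidden neurons sees all 13 features
    return hidden @ W2 + b2          # one (normalized) price prediction per sample

x = rng.normal(0.0, 0.2, size=(4, 13))     # hypothetical batch of 4 normalized samples
pred = forward(x)
print(pred.shape)                           # (4, 1)

num_params = W1.size + b1.size + W2.size + b2.size
print(num_params)                           # 13*6 + 6 + 6*1 + 1 = 91
```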

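Note:

The neuron model is the most basic building block of a neural network. The concept comes from biological neural networks, in which a neuron signals "excitation" through changes in its electrical potential. In deep learning, a neuron is simply a computational unit. Neurons are connected only to neurons in adjacent layers: each layer receives information only from the preceding layer and passes information only to the following layer. In a feedforward neural network, neurons within the same layer are not connected to each other.

Neural networks use nonlinear functions as activation functions. Composing several nonlinear functions lets the network apply a nonlinear transformation to its input.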
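Why the activation matters can be checked numerically. Without a nonlinearity between them, two stacked linear layers collapse into a single linear layer, so the hidden layer would add no expressive power. The sketch below uses random weights with the same 13 → 6 → 1 shapes; the weight values are arbitrary. It tests the affine property f((u + v) / 2) = (f(u) + f(v)) / 2, which the plain stack satisfies and the sigmoid network does not.

```python
import numpy as np

rng = np.random.default_rng(42)
# Random weights with the same 13 -> 6 -> 1 shapes as the network (values are arbitrary)
W1, b1 = rng.normal(size=(13, 6)), rng.normal(size=6)
W2, b2 = rng.normal(size=(6, 1)), rng.normal(size=1)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def stack_without_activation(x):
    return (x @ W1 + b1) @ W2 + b2

def stack_with_sigmoid(x):
    return sigmoid(x @ W1 + b1) @ W2 + b2

x = rng.normal(size=(5, 13))

# Without an activation the two layers equal ONE linear layer: W = W1 @ W2, b = b1 @ W2 + b2
collapsed = x @ (W1 @ W2) + (b1 @ W2 + b2)
print(np.allclose(stack_without_activation(x), collapsed))  # True

# An affine map f satisfies f((u + v) / 2) == (f(u) + f(v)) / 2; the sigmoid network does not
u, v = rng.normal(size=(1, 13)), rng.normal(size=(1, 13))
for f in (stack_without_activation, stack_with_sigmoid):
    gap = f((u + v) / 2) - (f(u) + f(v)) / 2
    print(f.__name__, float(np.abs(gap).max()))
```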

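The network is built with PaddlePaddle, where it can be written as a subclass of Layer. This makes the code easy to reuse. The subclass contains two methods:

- __init__(): declares the layers that make up the network;
- forward(): runs the forward computation with the declared layers.

The network is defined as follows: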

```python
# Define a feedforward neural network with one hidden layer
class SingleFC(paddle.nn.Layer):
    def __init__(self):
        super(SingleFC, self).__init__()
        # First fully connected layer: 13 input units, 6 output units
        self.fc1 = paddle.nn.Linear(
            in_features=13,
            out_features=6,
            weight_attr=paddle.ParamAttr(initializer=paddle.nn.initializer.Normal(mean=0.0, std=0.01)),
            bias_attr=paddle.ParamAttr(initializer=paddle.nn.initializer.Constant(value=1.0))
        )
        # Second fully connected layer: 6 input units, 1 output unit
        self.fc2 = paddle.nn.Linear(
            in_features=6,
            out_features=1,
            weight_attr=paddle.ParamAttr(initializer=paddle.nn.initializer.Normal(mean=0.0, std=0.01)),
            bias_attr=paddle.ParamAttr(initializer=paddle.nn.initializer.Constant(value=1.0))
        )
        # Activation function used by the network
        self.act = paddle.nn.Sigmoid()

    def forward(self, inputs):
        outputs = self.fc1(inputs)
        outputs = self.act(outputs)
        outputs = self.fc2(outputs)
        return outputs
```

The following PaddlePaddle APIs are used to define the network above:

class paddle.nn.Linear(in_features, out_features, weight_attr=None, bias_attr=None, name=None)

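Key parameters:

- in_features (int): number of input units of the linear layer;
- out_features (int): number of output units of the linear layer;
- weight_attr / bias_attr (ParamAttr): attributes of the weight and bias parameters, used in SingleFC to set their initializers.

class paddle.nn.Sigmoid(name=None)

Key parameters:

- name (str, optional): name of the operation; defaults to None.

Note:

Mastering the PaddlePaddle APIs is the foundation for completing deep learning tasks with PaddlePaddle and an essential skill for developers. The API documentation can be found on the PaddlePaddle official website under Documentation -> API Reference.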

2.3 Training Configuration

```python
lr = 0.003        # learning rate
batch_size = 32   # batch size

## 1. Load the data
# Get the training and test sets of the Boston housing task
train_data, test_data = BHPData()()
# Build the training data iterator
train_generate = Generate(data=train_data)
train_dataloader = paddle.io.DataLoader(train_generate, batch_size=batch_size, shuffle=True)
# Build the test data iterator
test_generate = Generate(data=test_data)
test_dataloader = paddle.io.DataLoader(test_generate, batch_size=batch_size)

## 2. Define the network
model = SingleFC()

## 3. Define the optimizer
# Adam is used here; the commented-out line shows an optional L2 weight decay
# opt = paddle.optimizer.Adam(learning_rate=lr, parameters=model.parameters(), weight_decay=paddle.regularizer.L2Decay(coeff=1e-5))
opt = paddle.optimizer.Adam(learning_rate=lr, parameters=model.parameters())

## 4. Define the loss function
# Mean squared error loss
loss_fn = paddle.nn.MSELoss()
```


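Note:

The mean squared error (MSE) loss measures model quality by the squared distance (error) between predicted and actual values. Suppose there are $n$ training samples $x_i$. The true output of $x_i$ is $y_i$, and the model's prediction for $x_i$ is $\hat{y}_i$. The MSE loss of the model over the $n$ training samples is defined as:

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2$$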
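Here is a small worked example of the formula with made-up numbers, chosen so that every difference is exact in floating point. paddle.nn.MSELoss with its default reduction='mean' averages over all elements in the same way.

```python
import numpy as np

# Hypothetical true values y_i and predictions ŷ_i for n = 4 samples (not from the dataset)
y_true = np.array([24.0, 21.5, 34.75, 33.5])
y_pred = np.array([25.0, 20.5, 32.75, 33.5])

# Errors: -1, 1, 2, 0  ->  squared: 1, 1, 4, 0  ->  mean: 6 / 4
mse = np.mean((y_true - y_pred) ** 2)
print(mse)  # 1.5
```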

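2.4 Model Training

The model is trained for 150 epochs. Each epoch runs three steps:

1. train the model on the training set;
2. compute and print the average loss on both the training set and the test set;
3. save the model.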

```python
class Trainer(object):
    def __init__(self, model, optimizer, loss_fn):
        self.model = model          # model instance
        self.optimizer = optimizer  # optimizer
        self.loss_fn = loss_fn      # loss function

    def save(self, epoch):
        # Save to ./models, the directory that the evaluation and inference steps load from
        model_dir = './models'
        os.makedirs(model_dir, exist_ok=True)
        model_path = os.path.join(model_dir, 'bhp_{}.pdparams'.format(epoch))
        opt_path = os.path.join(model_dir, 'bhp_{}.pdopt'.format(epoch))
        paddle.save(self.model.state_dict(), model_path)
        paddle.save(self.optimizer.state_dict(), opt_path)

    def train_step(self, data):
        x, y = data
        pred = self.model(x)          # model prediction
        loss = self.loss_fn(pred, y)  # MSELoss already averages over the batch
        avg_loss = paddle.mean(loss)
        avg_loss.backward()           # backpropagate
        self.optimizer.step()         # update the parameters
        self.optimizer.clear_grad()   # clear gradients before the next batch
        return avg_loss

    def train_epoch(self, dataloader, epoch):
        self.model.train()  # switch to training mode
        for data in dataloader:
            self.train_step(data)  # train on each batch

    @paddle.no_grad()
    def val_epoch(self, dataloader):
        self.model.eval()  # switch to evaluation mode
        error_list = list()
        for x, y in dataloader:
            pred = self.model(x)
            res = self.loss_fn(pred, y)  # mean squared error of this batch
            error_list.append(float(res.numpy().mean()))
        # Average of the per-batch errors
        return np.array(error_list).mean()

    def train(self, epochs, train_dataloader, test_dataloader):
        for i in range(epochs):
            # Train for one epoch
            self.train_epoch(train_dataloader, i)
            # Average error on the training set and on the test set
            train_error = self.val_epoch(train_dataloader)
            test_error = self.val_epoch(test_dataloader)
            print('epoch is {}, train error is {}, test error is {}'.format(i, train_error, test_error))
            # Save the model
            self.save(i)
```

```python
epoch = 150  # number of training epochs
# Instantiate the trainer
trainer = Trainer(model=model, optimizer=opt, loss_fn=loss_fn)
# Start training
trainer.train(epoch, train_dataloader, test_dataloader)
```

```
epoch is 0, train error is 0.599963366985321, test error is 0.8426924347877502
epoch is 1, train error is 0.33809980750083923, test error is 0.5098757743835449
epoch is 2, train error is 0.17354761064052582, test error is 0.2805306315422058
epoch is 3, train error is 0.0864136666059494, test error is 0.14261744916439056
epoch is 4, train error is 0.04885997623205185, test error is 0.07059898972511292
epoch is 5, train error is 0.03657462075352669, test error is 0.03762945532798767
epoch is 6, train error is 0.032262854278087616, test error is 0.024152085185050964
epoch is 7, train error is 0.03038971871137619, test error is 0.018659504130482674
epoch is 8, train error is 0.028899066150188446, test error is 0.01620791107416153
epoch is 9, train error is 0.02862655743956566, test error is 0.015054404735565186
epoch is 10, train error is 0.02913896180689335, test error is 0.013866808265447617
epoch is 11, train error is 0.026866454631090164, test error is 0.013537761755287647
epoch is 12, train error is 0.025524267926812172, test error is 0.012757524847984314
epoch is 13, train error is 0.024874139577150345, test error is 0.012315462343394756
epoch is 14, train error is 0.025137420743703842, test error is 0.011885158717632294
epoch is 15, train error is 0.02358795888721943, test error is 0.01119577419012785
epoch is 16, train error is 0.022790081799030304, test error is 0.010653355158865452
epoch is 17, train error is 0.022963110357522964, test error is 0.010220974683761597
epoch is 18, train error is 0.02160964533686638, test error is 0.01010782178491354
epoch is 19, train error is 0.021636109799146652, test error is 0.009703177027404308
epoch is 20, train error is 0.02079329825937748, test error is 0.009529443457722664
epoch is 21, train error is 0.020248423178195953, test error is 0.00952425692230463
epoch is 22, train error is 0.0202083270996809, test error is 0.009253574535250664
epoch is 23, train error is 0.01902635209262371, test error is 0.008858736604452133
epoch is 24, train error is 0.019779609516263008, test error is 0.008941514417529106
epoch is 25, train error is 0.01850392110645771, test error is 0.008752807043492794
epoch is 26, train error is 0.018656877800822258, test error is 0.008564372546970844
epoch is 27, train error is 0.017408136278390884, test error is 0.008398843929171562
epoch is 28, train error is 0.017972571775317192, test error is 0.008234277367591858
epoch is 29, train error is 0.01699184440076351, test error is 0.00824739970266819
epoch is 30, train error is 0.016450442373752594, test error is 0.008105671033263206
epoch is 31, train error is 0.01594175398349762, test error is 0.007849451154470444
epoch is 32, train error is 0.015696382150053978, test error is 0.007852842099964619
epoch is 33, train error is 0.015313193202018738, test error is 0.0077778794802725315
epoch is 34, train error is 0.015577143989503384, test error is 0.007714115083217621
epoch is 35, train error is 0.014684191904962063, test error is 0.007547477260231972
epoch is 36, train error is 0.01464575994759798, test error is 0.007467261981219053
epoch is 37, train error is 0.014231939800083637, test error is 0.007276446558535099
epoch is 38, train error is 0.014693371020257473, test error is 0.0074542369693517685
epoch is 39, train error is 0.01369321160018444, test error is 0.007212171331048012
epoch is 40, train error is 0.013488874770700932, test error is 0.007128127384930849
epoch is 41, train error is 0.013302985578775406, test error is 0.007069620303809643
epoch is 42, train error is 0.01309875212609768, test error is 0.006914699450135231
epoch is 43, train error is 0.013648565858602524, test error is 0.006964618340134621
epoch is 44, train error is 0.012945810332894325, test error is 0.00689729955047369
epoch is 45, train error is 0.012512827292084694, test error is 0.006765847560018301
epoch is 46, train error is 0.012344107031822205, test error is 0.006752694025635719
epoch is 47, train error is 0.012566853314638138, test error is 0.006674342788755894
epoch is 48, train error is 0.01238404680043459, test error is 0.006759702693670988
epoch is 49, train error is 0.011963210068643093, test error is 0.006513678468763828
epoch is 50, train error is 0.011829029768705368, test error is 0.006576716899871826
epoch is 51, train error is 0.012480816803872585, test error is 0.006711541675031185
epoch is 52, train error is 0.011524318717420101, test error is 0.0065663764253258705
epoch is 53, train error is 0.011767768301069736, test error is 0.006488143466413021
epoch is 54, train error is 0.011314027942717075, test error is 0.0063834888860583305
epoch is 55, train error is 0.011330046691000462, test error is 0.0064504509791731834
epoch is 56, train error is 0.011437342502176762, test error is 0.00652934517711401
epoch is 57, train error is 0.01096153911203146, test error is 0.006485152058303356
epoch is 58, train error is 0.010843802243471146, test error is 0.006537457928061485
epoch is 59, train error is 0.010733425617218018, test error is 0.006397902965545654
epoch is 60, train error is 0.010750968009233475, test error is 0.006448397878557444
epoch is 61, train error is 0.010654319077730179, test error is 0.006545177195221186
epoch is 62, train error is 0.010641138069331646, test error is 0.006431879010051489
epoch is 63, train error is 0.010702414438128471, test error is 0.006383201573044062
epoch is 64, train error is 0.010693429037928581, test error is 0.0066276174038648605
epoch is 65, train error is 0.01061268337070942, test error is 0.006604975555092096
epoch is 66, train error is 0.01019358728080988, test error is 0.006642032414674759
epoch is 67, train error is 0.010240346193313599, test error is 0.006903493776917458
epoch is 68, train error is 0.01010087039321661, test error is 0.006634954363107681
epoch is 69, train error is 0.010075286962091923, test error is 0.00654292106628418
epoch is 70, train error is 0.010370890609920025, test error is 0.006924394518136978
epoch is 71, train error is 0.010067077353596687, test error is 0.00691407173871994
epoch is 72, train error is 0.009950746782124043, test error is 0.006728621199727058
epoch is 73, train error is 0.010253815911710262, test error is 0.0069692423567175865
epoch is 74, train error is 0.009924779646098614, test error is 0.00686108972877264
epoch is 75, train error is 0.009849654510617256, test error is 0.00717760156840086
epoch is 76, train error is 0.00988010037690401, test error is 0.006960241124033928
epoch is 77, train error is 0.009678185917437077, test error is 0.007125238887965679
epoch is 78, train error is 0.009605849161744118, test error is 0.007260611280798912
epoch is 79, train error is 0.009793161414563656, test error is 0.007028559222817421
epoch is 80, train error is 0.00965217687189579, test error is 0.007394989486783743
epoch is 81, train error is 0.009604934602975845, test error is 0.007633998990058899
epoch is 82, train error is 0.00951390154659748, test error is 0.007243459112942219
epoch is 83, train error is 0.009534905664622784, test error is 0.007501265034079552
epoch is 84, train error is 0.009721447713673115, test error is 0.007445911876857281
epoch is 85, train error is 0.009561392478644848, test error is 0.007831376977264881
epoch is 86, train error is 0.010300512425601482, test error is 0.0075280386954545975
epoch is 87, train error is 0.00958352629095316, test error is 0.00777842290699482
epoch is 88, train error is 0.01072693895548582, test error is 0.00827138964086771
epoch is 89, train error is 0.009930072352290154, test error is 0.00801372155547142
epoch is 90, train error is 0.009442511945962906, test error is 0.007989486679434776
epoch is 91, train error is 0.009330598637461662, test error is 0.008058872073888779
epoch is 92, train error is 0.009473074227571487, test error is 0.008141078986227512
epoch is 93, train error is 0.009439191780984402, test error is 0.008543258532881737
epoch is 94, train error is 0.009346096776425838, test error is 0.00818675197660923
epoch is 95, train error is 0.009339812211692333, test error is 0.008142609149217606
epoch is 96, train error is 0.009184868074953556, test error is 0.008209834806621075
epoch is 97, train error is 0.009192024426294327, test error is 0.008473049849271774
epoch is 98, train error is 0.00940775778144598, test error is 0.009201787412166595
epoch is 99, train error is 0.009219285100698471, test error is 0.008753028698265553
epoch is 100, train error is 0.009256680496037006, test error is 0.008449957706034184
epoch is 101, train error is 0.009189369156956673, test error is 0.009115401655435562
epoch is 102, train error is 0.009441361762583256, test error is 0.008994992822408676
epoch is 103, train error is 0.0095262061804533, test error is 0.009081844240427017
epoch is 104, train error is 0.009209765121340752, test error is 0.008994463831186295
epoch is 105, train error is 0.009223194792866707, test error is 0.00882701762020588
epoch is 106, train error is 0.009170174598693848, test error is 0.009324111975729465
epoch is 107, train error is 0.009193419478833675, test error is 0.009253758937120438
epoch is 108, train error is 0.009091896936297417, test error is 0.00976922269910574
epoch is 109, train error is 0.009573767893016338, test error is 0.009716514497995377
epoch is 110, train error is 0.009480045177042484, test error is 0.009336842224001884
epoch is 111, train error is 0.009601003490388393, test error is 0.009362908080220242
epoch is 112, train error is 0.009096958674490452, test error is 0.008994433097541332
epoch is 113, train error is 0.009103701449930668, test error is 0.010191493667662144
epoch is 114, train error is 0.00905767921358347, test error is 0.009461130015552044
epoch is 115, train error is 0.00944032333791256, test error is 0.009980068542063236
epoch is 116, train error is 0.008994565345346928, test error is 0.009975654073059559
epoch is 117, train error is 0.009057198651134968, test error is 0.00977921299636364
epoch is 118, train error is 0.008943511173129082, test error is 0.009862364269793034
epoch is 119, train error is 0.009014508686959743, test error is 0.009694363921880722
epoch is 120, train error is 0.009022625163197517, test error is 0.010179572738707066
epoch is 121, train error is 0.008987467736005783, test error is 0.00953140202909708
epoch is 122, train error is 0.009381490759551525, test error is 0.009843004867434502
epoch is 123, train error is 0.008944964967668056, test error is 0.010047188960015774
epoch is 124, train error is 0.008994393050670624, test error is 0.009673993103206158
epoch is 125, train error is 0.008988423272967339, test error is 0.010246098041534424
epoch is 126, train error is 0.009040430188179016, test error is 0.009910888969898224
epoch is 127, train error is 0.00895601138472557, test error is 0.01015312597155571
epoch is 128, train error is 0.008882192894816399, test error is 0.009992312639951706
epoch is 129, train error is 0.008902640081942081, test error is 0.009837262332439423
epoch is 130, train error is 0.009032582864165306, test error is 0.010566397570073605
epoch is 131, train error is 0.008877186104655266, test error is 0.009863805957138538
epoch is 132, train error is 0.008912201039493084, test error is 0.010056287050247192
epoch is 133, train error is 0.008990713395178318, test error is 0.010298013687133789
epoch is 134, train error is 0.008885025046765804, test error is 0.01002080924808979
epoch is 135, train error is 0.009013487957417965, test error is 0.010105510242283344
epoch is 136, train error is 0.008897285908460617, test error is 0.01005212776362896
epoch is 137, train error is 0.009018302895128727, test error is 0.010412894189357758
epoch is 138, train error is 0.008922673761844635, test error is 0.010210921987891197
epoch is 139, train error is 0.008960590697824955, test error is 0.01030883938074112
epoch is 140, train error is 0.008797199465334415, test error is 0.01027711108326912
epoch is 141, train error is 0.008947267197072506, test error is 0.010475846007466316
epoch is 142, train error is 0.008998454548418522, test error is 0.010314736515283585
epoch is 143, train error is 0.008940584026277065, test error is 0.010578487999737263
epoch is 144, train error is 0.008874653838574886, test error is 0.010532774962484837
epoch is 145, train error is 0.009294978342950344, test error is 0.009907621890306473
epoch is 146, train error is 0.008898764848709106, test error is 0.011040279641747475
epoch is 147, train error is 0.008868013508617878, test error is 0.010186372324824333
epoch is 148, train error is 0.00884439330548048, test error is 0.01059015840291977
epoch is 149, train error is 0.009232071228325367, test error is 0.010928500443696976
```
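Each call to train_step in the Trainer performs five steps: forward pass, loss, backward(), step() and clear_grad(). The NumPy sketch below spells out these steps for the same 13 → 6 → 1 sigmoid network with MSE loss, deriving the gradients by hand with the chain rule. To keep it short, it makes a few simplifications:

- it uses plain full-batch gradient descent instead of Adam with mini-batches;
- it trains on synthetic data;
- it uses a hypothetical learning rate of 0.1.

Its numbers are therefore not comparable to the log above. It only illustrates what the framework automates.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic regression data (an assumption, not the housing data): 404 samples, 13 features
X = rng.normal(0.0, 0.3, size=(404, 13))
y = X @ rng.normal(0.0, 0.5, size=(13, 1)) + rng.normal(0.0, 0.05, size=(404, 1))

# Same shapes and initialization as SingleFC: N(0, 0.01^2) weights, biases set to 1.0
params = {
    "W1": rng.normal(0.0, 0.01, size=(13, 6)), "b1": np.ones(6),
    "W2": rng.normal(0.0, 0.01, size=(6, 1)), "b2": np.ones(1),
}

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_step(params, X, y, lr=0.1):
    """One full-batch step: forward, MSE loss, manual backward pass, gradient-descent update."""
    W1, b1, W2, b2 = params["W1"], params["b1"], params["W2"], params["b2"]
    n = len(X)
    # Forward pass
    a = sigmoid(X @ W1 + b1)             # hidden activations, shape (n, 6)
    pred = a @ W2 + b2                   # predictions, shape (n, 1)
    loss = np.mean((pred - y) ** 2)      # MSE loss
    # Backward pass; dL/dpred = 2 (pred - y) / n follows from the MSE formula
    d_pred = 2.0 * (pred - y) / n
    d_hidden = (d_pred @ W2.T) * a * (1.0 - a)   # sigmoid'(z) = s(z) * (1 - s(z))
    grads = {
        "W2": a.T @ d_pred, "b2": d_pred.sum(axis=0),
        "W1": X.T @ d_hidden, "b1": d_hidden.sum(axis=0),
    }
    # Update step (plain gradient descent here; the experiment itself uses Adam)
    for name, grad in grads.items():
        params[name] -= lr * grad
    return loss

losses = [train_step(params, X, y) for _ in range(300)]
print(losses[0], losses[-1])   # the loss falls well below its starting value
```

All gradients are computed before any parameter is modified. This mirrors how backward() fills every gradient first and step() then applies the updates.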

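2.5 Model Saving

In the Trainer class, the save() method saves the model parameters (.pdparams) and the optimizer state (.pdopt):

```python
def save(self, epoch):
    # Save to ./models, the directory that the evaluation and inference steps load from
    model_dir = './models'
    os.makedirs(model_dir, exist_ok=True)
    model_path = os.path.join(model_dir, 'bhp_{}.pdparams'.format(epoch))
    opt_path = os.path.join(model_dir, 'bhp_{}.pdopt'.format(epoch))
    paddle.save(self.model.state_dict(), model_path)    # model parameters
    paddle.save(self.optimizer.state_dict(), opt_path)  # optimizer state
```

The code above saves the model with the paddle.save() API.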

paddle.save(obj, path, pickle_protocol=2)

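Key parameters:

- obj (Object): the object to save;
- path (str): the path to save the object to. If only a file name is given, the object is saved under that name in the current directory.

2.6 Model Evaluation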

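Without a quantitative evaluation of how the model performs on the training and test data, it is hard to judge how good the model is. We therefore usually define metrics computed from errors or from the goodness of fit. This experiment uses the coefficient of determination, R², to quantify model performance. R² is a very common statistic in regression analysis and is often used as the standard measure of a model's predictive ability.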

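Note:

R² usually lies between 0 and 1 and can be read as the fraction of the variance of the target variable that the model explains:

- An R² of 0 means the model does no better than always predicting the mean of the target.
- An R² of 1 means the model predicts the target perfectly.
- A value between 0 and 1 indicates what percentage of the variation in the target the features can explain.
- A negative R² means the model's predictions are worse than simply predicting the mean of the target.

Here r2_score from sklearn.metrics is used to compute R² between the true and predicted values. The evaluation below loads the checkpoint saved after epoch 49; epochs are numbered from 0. In the training log above, the test error at that epoch (about 0.0065) is close to the lowest values reached, about 0.0064 around epochs 54 to 63. In later epochs, the training error keeps falling while the test error climbs to about 0.011, a typical sign of overfitting.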

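R² is defined as:

$$R^2 = 1 - \frac{RSS}{TSS} = 1 - \frac{\sum_{i=1}^{m}(\hat{y}_i - y_i)^2}{\sum_{i=1}^{m}(y_i - \bar{y})^2}$$

where:

- $m$ is the number of samples;
- $y_i$ is the true value of the $i$-th sample;
- $\bar{y}$ is the mean of the true values;
- $\hat{y}_i$ is the predicted value of the $i$-th sample;
- RSS is the residual sum of squares and TSS is the total sum of squares.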

```python
# The formula for R² is simple enough to implement by hand
def calc_r2_score(ys, preds):
    # ys: true values, preds: predicted values (NumPy arrays of the same shape)
    return 1 - ((preds - ys) ** 2).sum() / ((ys - ys.mean()) ** 2).sum()
```

```python
def evaluate(model, dataloader):
    model.eval()    # switch to evaluation mode
    ys = list()     # true values
    preds = list()  # predicted values
    with paddle.no_grad():
        for x, y in dataloader:
            pred = model(x)  # model output
            ys.extend(y.numpy())
            preds.extend(pred.numpy())
    ys = np.array(ys)[:, -1]
    preds = np.array(preds)[:, -1]
    score = r2_score(ys, preds)  # coefficient of determination
    return score

model_path = './models/bhp_49.pdparams'
# Load the model parameters
model_state_dict = paddle.load(model_path)
model.set_state_dict(model_state_dict)
# Evaluate on the training set and on the test set
score_train = evaluate(model, train_dataloader)
score_test = evaluate(model, test_dataloader)
print('train score is {}, test score is {}'.format(score_train, score_test))
```

```
train score is 0.7128057758618467, test score is 0.5358080939148715
```
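The small NumPy sketch below checks the R² formula and its interpretation on synthetic values, which are an assumption and not the housing data:

- predicting the mean everywhere gives R² = 0;
- a perfect prediction gives 1;
- a prediction worse than the mean gives a negative value.

The sketch also checks that applying the same denormalization x·(max - min) + mean to both the true values and the predictions leaves R² unchanged. This is why scoring on normalized data, as the evaluation code above does, gives the same R² as scoring on prices. The helper r2 repeats calc_r2_score so the block runs on its own. The scale and shift values are hypothetical.

```python
import numpy as np

def r2(ys, preds):
    # Same formula as calc_r2_score: 1 - RSS / TSS
    return 1 - ((preds - ys) ** 2).sum() / ((ys - ys.mean()) ** 2).sum()

rng = np.random.default_rng(0)
ys = rng.normal(0.0, 0.2, size=100)          # synthetic normalized targets
noisy = ys + rng.normal(0.0, 0.1, size=100)  # predictions of an imperfect model

print(r2(ys, ys))                            # 1.0: perfect prediction
print(r2(ys, np.full_like(ys, ys.mean())))   # 0.0: always predict the mean
print(r2(ys, -ys))                           # negative: worse than predicting the mean

# Denormalizing both sides with the same affine map leaves R² unchanged
scale, shift = 45.0, 22.5                    # hypothetical (max - min) and mean of the label
print(np.isclose(r2(ys, noisy), r2(ys * scale + shift, noisy * scale + shift)))  # True
```

The invariance holds because the affine map multiplies every residual and every deviation from the mean by the same factor, which cancels in RSS / TSS.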


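2.7 Model Inference

Next, one sample is selected to test the model's prediction. After denormalization, prices are in the original MEDV unit of thousands of US dollars.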

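```python
def load_one_example():
    # Pick one record from the test set loaded above.
    # A random record could be chosen with: idx = np.random.randint(0, test_data.shape[0])
    # A fixed index is used here so that the result below is reproducible.
    idx = -10
    one_data, label = test_data[idx, :-1], test_data[idx, -1]
    # Reshape the record to [1, 13]
    one_data = one_data.reshape([1, -1])
    return one_data, label
```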

```python
model_dict = paddle.load('./models/bhp_49.pdparams')
model.set_state_dict(model_dict)
model.eval()
# Take one test record
one_data, label = load_one_example()
# Convert the NumPy array to a Paddle Tensor
one_data = paddle.to_tensor(one_data)
predict = model(one_data)
# Denormalize the prediction back to the original price scale
predict = predict * (max_values[-1] - min_values[-1]) + avg_values[-1]
# Denormalize the label in the same way
label = label * (max_values[-1] - min_values[-1]) + avg_values[-1]
print("Inference result is {}, the corresponding label is {}".format(predict.numpy(), label))
```

```
Inference result is [[16.48576]], the corresponding label is 19.700000070256788
```
