Text Similarity Competition: A Pretrained-Model Baseline That Reaches 90% Directly

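This article presents a solution to the text similarity competition built into the Baidu architect course. It uses the pretrained ERNIE model, recasts text matching as a classification task, and feeds the model the concatenation of query and title. The data consists of 54,614 training examples, 7,802 validation examples, and 15,604 test examples. After data processing and model training, validation accuracy exceeds 90% in the first epoch. No hyperparameters were tuned, so the result can serve as a baseline. The final predictions are written to result.csv.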


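The competition is built into the Baidu course 百度架构师手把手带你零基础实践深度学习 (Baidu architects guide you hands-on through deep learning from scratch). Scoring appears to have been discontinued.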

Training set: 54,614 examples

Validation set: 7,802 examples

Test set: 15,604 examples

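This article is adapted from 『NLP经典项目集』02:使用预训练模型ERNIE优化情感分析 (NLP Classic Projects 02: improving sentiment analysis with the pretrained ERNIE model).

No hyperparameters were tuned at all, so it works well as a baseline for pretrained models.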


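1. Task Introduction

1.1 Task Description

Semantic text matching is an important foundational problem in natural language processing, and many NLP tasks can be framed as text matching. For example, information retrieval reduces to matching a query against documents, question answering to matching a question against candidate answers, and dialogue systems to matching a dialogue against candidate replies. Semantic matching is widely used in search optimization, recommender systems, fast retrieval and ranking, and intelligent customer service. Improving the accuracy of text matching is an important challenge in NLP.

Information retrieval: many retrieval applications need to find other texts similar to a given source text, which is a very common scenario.

News recommendation: given the headline a user has just read, similar news is retrieved automatically and recommended to that user, which increases user stickiness and improves the product experience.

Intelligent customer service: after a user enters a question, similar questions and their answers are retrieved automatically, which saves the cost of human agents and improves efficiency.

1.2 What is text matching?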

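Consider a simple example: which candidate sentence is semantically closest to the original sentence?

Original sentence: “车头如何放置车牌” (How do I mount a license plate on the front of the car?)

Candidate 1: “前牌照怎么装” (How do I install the front plate?)

Candidate 2: “如何办理北京车牌” (How do I apply for a Beijing license plate?)

Candidate 3: “后牌照怎么装” (How do I install the rear plate?)

(1) Candidate 1 differs considerably from the original in sentence pattern and word order, yet the meaning it expresses is almost identical.

(2) Candidate 2 shares words such as “如何” (how) and “车牌” (license plate) with the original, yet the meaning it expresses is completely different.

(3) Candidate 3 and the original both discuss how to mount a license plate; one concerns the front plate and the other the rear plate. The two are semantically related to some degree.

So in terms of semantic relatedness, candidate 1 ranks above candidate 3, and candidate 3 ranks above candidate 2. This is semantic matching.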
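To make point (2) concrete, the short sketch below scores the three candidates with a purely lexical measure, Jaccard similarity over the sets of characters. This measure is not part of the original solution; it is used here only as a contrast, and the names `char_jaccard`, `candidates`, `scores`, and `ranked` are illustrative.

```python
def char_jaccard(a: str, b: str) -> float:
    """Jaccard similarity over the sets of characters in two strings."""
    set_a, set_b = set(a), set(b)
    return len(set_a & set_b) / len(set_a | set_b)


original = "车头如何放置车牌"
candidates = {
    "candidate_1": "前牌照怎么装",
    "candidate_2": "如何办理北京车牌",
    "candidate_3": "后牌照怎么装",
}

# Score each candidate by raw character overlap with the original sentence.
scores = {name: char_jaccard(original, text) for name, text in candidates.items()}

# Rank from most to least overlapping.
ranked = sorted(scores, key=scores.get, reverse=True)

for name in ranked:
    print(name, round(scores[name], 3))
```

Character overlap puts candidate 2 first, because it shares four characters with the original while candidates 1 and 3 share only one each. That is the opposite of the semantic ranking described above, which is why a model that captures meaning is needed.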

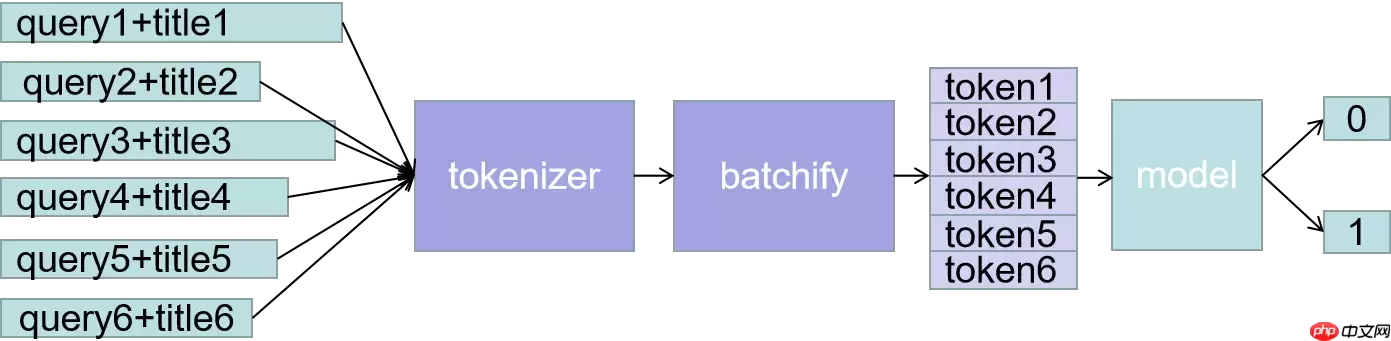

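1.3 Using a pretrained sequence classification model

This is a matching task: a pair of similar sentences is labeled 1 and a pair of dissimilar sentences is labeled 0. It can equally be viewed as a classification task: if the two sentences are similar the class is 1, and if they are not the class is 0.

This article builds on a text classification example, 『NLP经典项目集』02:使用预训练模型ERNIE优化情感分析.

After reading that example through, it becomes clear that the main job is to construct the single input sentence the classifier expects. The simplest approach is used here: concatenate the two sentences directly.

That is, query and title are concatenated directly.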
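As a rough illustration of what a concatenated pair looks like at the token-id level, the sketch below joins two id lists in the usual BERT-style layout, `[CLS] query [SEP] title [SEP]`, with segment ids 0 for the first part and 1 for the second. The ids 1 and 2 for `[CLS]` and `[SEP]` match the ernie-1.0 tokenizer output printed later in this article. The function name `build_pair_input` is illustrative, and whether the author's `convert_example` produces exactly this layout is an assumption.

```python
from typing import List, Tuple

CLS_ID = 1  # [CLS] id, as seen in the ernie-1.0 tokenizer output in this article
SEP_ID = 2  # [SEP] id, as seen in the same output


def build_pair_input(
    query_ids: List[int],
    title_ids: List[int],
    cls_id: int = CLS_ID,
    sep_id: int = SEP_ID,
) -> Tuple[List[int], List[int]]:
    """Join two id sequences into one input plus matching segment ids."""
    input_ids = [cls_id] + query_ids + [sep_id] + title_ids + [sep_id]
    # Segment 0 covers [CLS], the query, and the first [SEP];
    # segment 1 covers the title and the final [SEP].
    token_type_ids = [0] * (len(query_ids) + 2) + [1] * (len(title_ids) + 1)
    return input_ids, token_type_ids


# Toy ids standing in for a tokenized query and title.
pair_ids, pair_types = build_pair_input([211, 50], [354])
print(pair_ids)
print(pair_types)
```

The classifier then sees one sequence, and the segment ids are the only signal telling it where the query ends and the title begins.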

Load the third-party libraries: paddle and the paddlenlp-related packages.

In [ ]:

    import math
    import numpy as np
    import os
    import collections
    from functools import partial
    import random
    import time
    import inspect
    import importlib
    from tqdm import tqdm

    import paddle
    import paddle.nn as nn
    import paddle.nn.functional as F
    from paddle.io import IterableDataset
    from paddle.utils.download import get_path_from_url

This experiment depends on paddlenlp. The paddlenlp version preinstalled on AI Studio is too old, so upgrade it first.

In [ ]:

    !pip install paddlenlp --upgrade

Import the paddlenlp-related packages.

In [ ]:

    import paddlenlp as ppnlp
    from paddlenlp.data import JiebaTokenizer, Pad, Stack, Tuple, Vocab
    # from utils import convert_example
    from paddlenlp.datasets import MapDataset
    from paddle.dataset.common import md5file
    from paddlenlp.datasets import DatasetBuilder

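2. Define the model and tokenizer

2.1 Define the pretrained model

Following the analysis above, the two sentences are joined into one and the problem becomes a classification task, so a sequence classification model is used. Only model is actually used for training; ernie_model is there purely to illustrate how the model works.

In [ ]:

    MODEL_NAME = "ernie-1.0"
    ernie_model = ppnlp.transformers.ErnieModel.from_pretrained(MODEL_NAME)
    model = ppnlp.transformers.ErnieForSequenceClassification.from_pretrained(MODEL_NAME, num_classes=2)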

Output:

    [2024-05-18 10:21:29,970] [ INFO] - Downloading https://paddlenlp.bj.bcebos.com/models/transformers/ernie/ernie_v1_chn_base.pdparams and saved to /home/aistudio/.paddlenlp/models/ernie-1.0
    [2024-05-18 10:21:29,973] [ INFO] - Downloading ernie_v1_chn_base.pdparams from https://paddlenlp.bj.bcebos.com/models/transformers/ernie/ernie_v1_chn_base.pdparams
    100%|██████████| 392507/392507 [00:09<00:00, 43038.06it/s]
    [2024-05-18 10:21:45,369] [ INFO] - Weights from pretrained model not used in ErnieModel: ['cls.predictions.layer_norm.weight', 'cls.predictions.decoder_bias', 'cls.predictions.transform.bias', 'cls.predictions.transform.weight', 'cls.predictions.layer_norm.bias']
    [2024-05-18 10:21:45,675] [ INFO] - Already cached /home/aistudio/.paddlenlp/models/ernie-1.0/ernie_v1_chn_base.pdparams
    /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/fluid/dygraph/layers.py:1297: UserWarning: Skip loading for classifier.weight. classifier.weight is not found in the provided dict.
      warnings.warn(("Skip loading for {}. ".format(key) + str(err)))
    /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/fluid/dygraph/layers.py:1297: UserWarning: Skip loading for classifier.bias. classifier.bias is not found in the provided dict.
      warnings.warn(("Skip loading for {}. ".format(key) + str(err)))

2.2 Define the tokenizer that matches the model

In [ ]:

    tokenizer = ppnlp.transformers.ErnieTokenizer.from_pretrained(MODEL_NAME)

Output:

    [2024-05-18 10:21:47,430] [ INFO] - Downloading vocab.txt from https://paddlenlp.bj.bcebos.com/models/transformers/ernie/vocab.txt
    100%|██████████| 90/90 [00:00<00:00, 4144.52it/s]

Following the latest example, test the tokenizer on one of our sentences.

In [ ]:

    tokens = tokenizer._tokenize("万家乐燃气热水器怎么样")
    print("Tokens: {}".format(tokens))

    # Map each token to its token id
    tokens_ids = tokenizer.convert_tokens_to_ids(tokens)
    print("Tokens id: {}".format(tokens_ids))

    # Add the special tokens the pretrained model expects, such as [CLS] and [SEP]
    tokens_ids = tokenizer.build_inputs_with_special_tokens(tokens_ids)
    print("Tokens id: {}".format(tokens_ids))

    # Convert to a paddle tensor
    tokens_pd = paddle.to_tensor([tokens_ids])
    print("Tokens : {}".format(tokens_pd))

    # The tensor can now be fed to the ERNIE model to get its outputs
    sequence_output, pooled_output = ernie_model(tokens_pd)
    print("Token wise output: {}, Pooled output: {}".format(sequence_output.shape, pooled_output.shape))

Output:

    Tokens: ['万', '家', '乐', '燃', '气', '热', '水', '器', '怎', '么', '样']
    Tokens id: [211, 50, 354, 1404, 266, 506, 101, 361, 936, 356, 314]
    Tokens id: [1, 211, 50, 354, 1404, 266, 506, 101, 361, 936, 356, 314, 2]
    Tokens : Tensor(shape=[1, 13], dtype=int64, place=CUDAPlace(0), stop_gradient=True,
           [[1 , 211, 50 , 354, 1404, 266, 506, 101, 361, 936, 356, 314, 2 ]])
    Token wise output: [1, 13, 768], Pooled output: [1, 768]
    /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/tensor/creation.py:143: DeprecationWarning: `np.object` is a deprecated alias for the builtin `object`. To silence this warning, use `object` by itself. Doing this will not modify any behavior and is safe. Deprecated in NumPy 1.20; for more details and guidance: https://numpy.org/devdocs/release/1.20.0-notes.html#deprecations
      if data.dtype == np.object:

Calling the tokenizer directly performs the same steps in one call and also returns the segment ids.

In [ ]:

    encoded_text = tokenizer(text="万家乐燃气热水器怎么样", max_seq_len=20)
    for key, value in encoded_text.items():
        print("{}:\n\t{}".format(key, value))

    # Convert to paddle tensors
    input_ids = paddle.to_tensor([encoded_text['input_ids']])
    print("input_ids : {}".format(input_ids))
    segment_ids = paddle.to_tensor([encoded_text['token_type_ids']])
    print("token_type_ids : {}".format(segment_ids))

    # The tensors can now be fed to the ERNIE model to get its outputs
    sequence_output, pooled_output = ernie_model(input_ids, segment_ids)
    print("Token wise output: {}, Pooled output: {}".format(sequence_output.shape, pooled_output.shape))

Output:

    input_ids:
        [1, 211, 50, 354, 1404, 266, 506, 101, 361, 936, 356, 314, 2]
    token_type_ids:
        [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0]
    input_ids : Tensor(shape=[1, 13], dtype=int64, place=CUDAPlace(0), stop_gradient=True,
           [[1 , 211, 50 , 354, 1404, 266, 506, 101, 361, 936, 356, 314, 2 ]])
    token_type_ids : Tensor(shape=[1, 13], dtype=int64, place=CUDAPlace(0), stop_gradient=True,
           [[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0]])
    Token wise output: [1, 13, 768], Pooled output: [1, 768]

3. Data loading

3.1 The load_dataset function

This experiment reads four files in total: the training set train.tsv, the validation set dev.tsv, the test set test.tsv, and the vocabulary vocab.txt. In the code in this article the actual file names are baidu_train.tsv, baidu_dev.tsv, and test_forstu.tsv. The loading code is shown below; it is provided by the course and needs no modification.

In [ ]:

    class BAIDUData2(DatasetBuilder):
        SPLITS = {
            # 'train':os.path.join('data', 'baidu_train.tsv'),
            # 'dev': os.path.join('data', 'baidu_dev.tsv'),
            'train': 'baidu_train.tsv',
            'dev': 'baidu_dev.tsv',
        }

        def _get_data(self, mode, **kwargs):
            filename = self.SPLITS[mode]
            return filename

        def _read(self, filename):
            """Read the data, treating the first line as a header."""
            with open(filename, 'r', encoding='utf-8') as f:
                head = None
                for line in f:
                    data = line.strip().split("\t")
                    if not head:
                        head = data
                    else:
                        query, title, label = data
                        yield {"query": query, "title": title, "label": label}

        def get_labels(self):
            return ["0", "1"]

In [ ]:

    def load_dataset(name=None,
                     data_files=None,
                     splits=None,
                     lazy=None,
                     **kwargs):
        reader_cls = BAIDUData2
        print(reader_cls)
        if not name:
            reader_instance = reader_cls(lazy=lazy, **kwargs)
        else:
            reader_instance = reader_cls(lazy=lazy, name=name, **kwargs)

        datasets = reader_instance.read_datasets(data_files=data_files, splits=splits)
        return datasets

In [ ]:

    # Loads dataset.
    train_ds, dev_ds = load_dataset(splits=["train", "dev"])
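The file layout that `_read` expects is a header row followed by tab-separated `query`, `title`, `label` rows. The sketch below parses such a layout from an in-memory buffer, so it runs without the competition files. The header names and the two sample rows are invented for illustration (the real header is not shown in this article), and skipping malformed rows is an addition that the course code does not make.

```python
import io
from typing import Dict, Iterator, TextIO


def read_pairs(f: TextIO) -> Iterator[Dict[str, str]]:
    """Yield one dict per data row of a tab-separated file with a header row."""
    header_seen = False
    for line in f:
        fields = line.rstrip("\n").split("\t")
        if not header_seen:
            # The first line is the header and carries no example.
            header_seen = True
            continue
        if len(fields) != 3:
            # Assumption: silently skip rows that do not have three columns.
            continue
        query, title, label = fields
        yield {"query": query, "title": title, "label": label}


# Invented sample in the assumed layout: header, then query<TAB>title<TAB>label.
sample = io.StringIO(
    "query\ttitle\tlabel\n"
    "车头如何放置车牌\t前牌照怎么装\t1\n"
    "车头如何放置车牌\t如何办理北京车牌\t0\n"
)
rows = list(read_pairs(sample))
for row in rows:
    print(row)
```

Each yielded dict has the same keys as the examples produced by `BAIDUData2._read`, which is what the later preprocessing step consumes.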

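3.2 Preprocessing: concatenating the sentences

For this task the main change is to the convert_example function: inside it, query and title are concatenated and converted to tokens. convert_example is at line 123 of utils.py.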
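utils.py is not reproduced in this article, so the following is only a guess at the shape of the modified function, not the author's code. It assumes the tokenizer is called with `text` and `text_pair`, which yields the `[CLS] query [SEP] title [SEP]` layout; passing `text=query + title` as a single string would be the other plausible reading of "concatenate directly". The names `convert_example_sketch` and `toy_tokenizer` are hypothetical, and `toy_tokenizer` is a stand-in so the sketch runs without paddlenlp.

```python
def toy_tokenizer(text, text_pair=None, max_seq_len=None):
    """Stand-in for the real tokenizer: one id per character, [CLS]=1, [SEP]=2."""
    input_ids = [1] + [ord(ch) for ch in text] + [2]
    token_type_ids = [0] * len(input_ids)
    if text_pair:
        second = [ord(ch) for ch in text_pair]
        input_ids += second + [2]
        token_type_ids += [1] * (len(second) + 1)
    if max_seq_len is not None:
        # Crude tail truncation, unlike what a real tokenizer would do.
        input_ids = input_ids[:max_seq_len]
        token_type_ids = token_type_ids[:max_seq_len]
    return {"input_ids": input_ids, "token_type_ids": token_type_ids}


def convert_example_sketch(example, tokenizer, max_seq_length=128, is_test=False):
    """Turn one {"query", "title", "label"} dict into model inputs."""
    encoded = tokenizer(
        text=example["query"],
        text_pair=example["title"],
        max_seq_len=max_seq_length,
    )
    input_ids = encoded["input_ids"]
    token_type_ids = encoded["token_type_ids"]
    if is_test:
        # Test examples carry no label.
        return input_ids, token_type_ids
    return input_ids, token_type_ids, int(example["label"])


example = {"query": "ab", "title": "c", "label": "1"}
ids, types, label = convert_example_sketch(example, toy_tokenizer)
print(ids, types, label)
```

With the real `ErnieTokenizer` in place of `toy_tokenizer`, the `max_seq_len` keyword is the one used elsewhere in this article; newer paddlenlp releases may name it differently.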

In [ ]:

    from functools import partial
    from paddlenlp.data import Stack, Tuple, Pad
    from utils import convert_example, create_dataloader

    batch_size = 32
    max_seq_length = 128

    trans_func = partial(
        convert_example,
        tokenizer=tokenizer,
        max_seq_length=max_seq_length)
    batchify_fn = lambda samples, fn=Tuple(
        Pad(axis=0, pad_val=tokenizer.pad_token_id),  # input
        Pad(axis=0, pad_val=tokenizer.pad_token_type_id),  # segment
        Stack(dtype="int64")  # label
    ): [data for data in fn(samples)]

In [ ]:

    train_data_loader = create_dataloader(
        train_ds,
        mode='train',
        batch_size=batch_size,
        batchify_fn=batchify_fn,
        trans_fn=trans_func)
    dev_data_loader = create_dataloader(
        dev_ds,
        mode='dev',
        batch_size=batch_size,
        batchify_fn=batchify_fn,
        trans_fn=trans_func)
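The `batchify_fn` above pads the input ids and segment ids of each batch to a common length and stacks the labels. A plain-Python sketch of that idea, without paddlenlp, might look as follows. The helper names `pad_batch` and `batchify` are illustrative, and padding to the longest sequence in the batch with a pad value of 0 is an assumption about the defaults.

```python
def pad_batch(sequences, pad_val=0):
    """Right-pad every sequence to the length of the longest one in the batch."""
    max_len = max(len(seq) for seq in sequences)
    return [list(seq) + [pad_val] * (max_len - len(seq)) for seq in sequences]


def batchify(samples, pad_token_id=0, pad_token_type_id=0):
    """Group (input_ids, token_type_ids, label) samples into three batch fields."""
    input_ids, token_type_ids, labels = zip(*samples)
    return (
        pad_batch(input_ids, pad_token_id),
        pad_batch(token_type_ids, pad_token_type_id),
        list(labels),
    )


# Two samples of different lengths, in the shape convert_example returns.
samples = [
    ([1, 211, 50, 2, 354, 2], [0, 0, 0, 0, 1, 1], 1),
    ([1, 211, 2, 50, 2], [0, 0, 0, 1, 1], 0),
]
batch_ids, batch_types, batch_labels = batchify(samples)
print(batch_ids)
print(batch_types)
print(batch_labels)
```

Padding per batch rather than to `max_seq_length` keeps short batches small, which is one reason to do it in the collate step rather than in `convert_example`.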

4. Define the hyperparameters, loss, optimizer, and so on

In [ ]:

    from paddlenlp.transformers import LinearDecayWithWarmup

    # Peak learning rate during training
    learning_rate = 5e-5
    # Number of training epochs
    epochs = 4
    # Proportion of training steps used for learning-rate warmup
    warmup_proportion = 0.1
    # Weight decay coefficient, a regularization-like strategy that helps avoid overfitting
    weight_decay = 0.01

    num_training_steps = len(train_data_loader) * epochs
    lr_scheduler = LinearDecayWithWarmup(learning_rate, num_training_steps, warmup_proportion)
    optimizer = paddle.optimizer.AdamW(
        learning_rate=lr_scheduler,
        parameters=model.parameters(),
        weight_decay=weight_decay,
        # Apply weight decay to every parameter except biases and norm layers
        apply_decay_param_fun=lambda x: x in [
            p.name for n, p in model.named_parameters()
            if not any(nd in n for nd in ["bias", "norm"])
        ])

    criterion = paddle.nn.loss.CrossEntropyLoss()
    metric = paddle.metric.Accuracy()
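The schedule configured above raises the learning rate linearly from 0 to the peak over the warmup steps, then lowers it linearly back to 0. The sketch below writes out that shape in plain Python. It is meant to show the general linear warmup and decay curve, assuming `LinearDecayWithWarmup` follows it; the function name `linear_warmup_decay` and the total of 1000 steps are illustrative, not taken from the article.

```python
def linear_warmup_decay(step, peak_lr, total_steps, warmup_proportion):
    """Learning rate at a given step under linear warmup followed by linear decay."""
    warmup_steps = int(total_steps * warmup_proportion)
    if step < warmup_steps:
        # Warmup: ramp from 0 up to peak_lr.
        return peak_lr * step / max(1, warmup_steps)
    # Decay: ramp from peak_lr down to 0 at total_steps.
    remaining = max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))
    return peak_lr * remaining


peak = 5e-5          # same peak learning rate as in the article
total = 1000         # illustrative number of training steps
for step in (0, 50, 100, 550, 1000):
    print(step, linear_warmup_decay(step, peak, total, 0.1))
```

With `warmup_proportion = 0.1`, the first tenth of training runs at a reduced learning rate, which is a common way to keep early updates from disturbing the pretrained weights too much.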

5. Start training; the first epoch already exceeds 90% on the validation set

In [12]:

    import paddle.nn.functional as F
    from utils import evaluate

    global_step = 0
    for epoch in range(1, epochs + 1):
        for step, batch in enumerate(train_data_loader, start=1):
            input_ids, segment_ids, labels = batch
            logits = model(input_ids, segment_ids)
            loss = criterion(logits, labels)
            probs = F.softmax(logits, axis=1)
            correct = metric.compute(probs, labels)
            metric.update(correct)
            acc = metric.accumulate()

            global_step += 1
            if global_step % 10 == 0:
                print("global step %d, epoch: %d, batch: %d, loss: %.5f, acc: %.5f" % (global_step, epoch, step, loss, acc))
            loss.backward()
            optimizer.step()
            lr_scheduler.step()
            optimizer.clear_grad()
        # Evaluate on the validation set at the end of each epoch
        evaluate(model, criterion, metric, dev_data_loader)

Save the model

In [ ]:

    model.save_pretrained('checkpoint2')
    tokenizer.save_pretrained('checkpoint2')

6. Predict on the test set and write the CSV

In [ ]:

    from utils import predict
    import pandas as pd

    label_map = {0: '0', 1: '1'}

    def preprocess_prediction_data(data):
        examples = []
        for query, title in data:
            examples.append({"query": query, "title": title})
            # print(len(examples), ': ', query, "---", title)
        return examples

    test_file = 'test_forstu.tsv'
    data = pd.read_csv(test_file, sep='\t')
    # print(data.shape)
    data1 = list(data.values)
    examples = preprocess_prediction_data(data1)

In [ ]:

    results = predict(
        model, examples, tokenizer, label_map, batch_size=batch_size)

    for idx, text in enumerate(examples):
        print('Data: {} \t Label: {}'.format(text, results[idx]))

    data2 = []
    for i in range(len(data1)):
        # append, not extend: extend would split a multi-character label into characters
        data2.append(results[i])
    data['label'] = data2

    print(data.shape)
    data.to_csv('result.csv', sep='\t')

Finally, submit the generated result.csv file.
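The `predict` helper comes from utils.py and is not shown in this article. Presumably it ends by picking the most probable class for each pair and mapping it through `label_map`. The sketch below illustrates only that last step and the writing of a tab-separated result, using the standard library instead of pandas. The probabilities, the function names `probs_to_labels` and `write_results`, and the output column names are all invented for illustration.

```python
import csv
import io

label_map = {0: "0", 1: "1"}


def probs_to_labels(probs, label_map):
    """Pick the most probable class per row and map it to its label string."""
    labels = []
    for row in probs:
        best = max(range(len(row)), key=row.__getitem__)
        labels.append(label_map[best])
    return labels


def write_results(pairs, labels, out):
    """Write query, title, and predicted label as tab-separated rows."""
    writer = csv.writer(out, delimiter="\t", lineterminator="\n")
    writer.writerow(["query", "title", "label"])
    for (query, title), label in zip(pairs, labels):
        writer.writerow([query, title, label])


# Invented class probabilities for two pairs from the example in section 1.2.
pairs = [("车头如何放置车牌", "前牌照怎么装"), ("车头如何放置车牌", "如何办理北京车牌")]
probs = [[0.1, 0.9], [0.8, 0.2]]

labels = probs_to_labels(probs, label_map)
buffer = io.StringIO()
write_results(pairs, labels, buffer)
print(buffer.getvalue())
```

Unlike `data.to_csv('result.csv', sep='\t')` above, this sketch writes no index column. The article does not state which layout the grader expects, so check the submission format before relying on either.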
