Food Classification with Paddle

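This project trains a CNN with PaddlePaddle to classify the 11 food categories of the food-11 dataset. The dataset, which contains training, validation, and test sets, is unzipped first. Label files are then generated, and a dataset is built by subclassing the Dataset class. A CNN with three convolutional layers, pooling layers, and a few other components is trained with the Adam optimizer for 5 epochs, after which the model is saved and tested on a single image. The accuracy is low, but the full pipeline runs end to end.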

Food Image Classification

Project Description

Train a simple convolutional neural network to classify food images.


Dataset

This project uses the food-11 dataset, which has 11 classes:

Bread, Dairy product, Dessert, Egg, Fried food, Meat, Noodles/Pasta, Rice, Seafood, Soup, and Vegetable/Fruit.

Training set: 9,866 images

Validation set: 3,430 images

Testing set: 3,347 images

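Data format: unzipping the downloaded zip file yields three folders: training, validation, and testing.

Images in training and validation are named [class]_[id].webp. For example, 3_100.webp is an image of class 3 (the id carries no meaning).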
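The naming convention above can be turned into a label with a few lines of Python. The following is a minimal sketch: the helper name `parse_label` and the `CLASS_NAMES` list are illustrative and not part of the original project, and the list assumes that class indices follow the order in which the categories are listed above.

```python
import os

# Assumption: class index i corresponds to the i-th category listed above.
CLASS_NAMES = [
    "Bread", "Dairy product", "Dessert", "Egg", "Fried food", "Meat",
    "Noodles/Pasta", "Rice", "Seafood", "Soup", "Vegetable/Fruit",
]


def parse_label(path):
    """Return the integer class encoded in a '[class]_[id].webp' file name."""
    name = os.path.basename(path)
    prefix, sep, _ = name.partition("_")
    if not sep or not prefix.isdigit():
        raise ValueError(f"unexpected file name: {name!r}")
    return int(prefix)


label = parse_label("work/food-11/training/3_100.webp")
print(label, CLASS_NAMES[label])
```

Raising an error on unexpected names is a deliberate choice here: a stray file in the folder would otherwise end up with a silently wrong label.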

Implementation

PaddlePaddle

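Approach

Unzip the dataset

Inspect the data: the training, validation, and testing folders

Files look like work/food-11/training/0_101.webp

[class]_[id].webp

Create the image and label files

Subclass paddle.io.Dataset to build the dataset

Build the CNN

Train the model

Test the model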

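Notes:

The material and content of this project come from Professor Hung-yi Lee's course, but the implementation uses PaddlePaddle.

This project only gets the pipeline running. No effort was made to tune or analyze accuracy, so treat it as a reference only.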

    # !unzip -d work data/data75768/food-11.zip  # unzip the food-11 dataset

    import paddle
    print(f'Current Paddle version: {paddle.__version__}')

Output:

    Current Paddle version: 2.0.1

    import os
    import paddle
    import paddle.vision.transforms as T
    import numpy as np
    from PIL import Image
    import paddle.nn.functional as F

    data_path = '/home/aistudio/work/food-11/'   # root directory of the dataset
    character_folders = os.listdir(data_path)    # list the folders under the root
    character_folders

Output:

    ['testing', 'validation', 'training']

    data = '10_alksn'
    data[0:data.rfind('_', 1)]  # locate the underscore and keep everything before it

Output:

    '10'

    # Create the label files
    if os.path.exists('./training_set.txt'):      # remove stale files from earlier runs
        os.remove('./training_set.txt')
    if os.path.exists('./validation_set.txt'):
        os.remove('./validation_set.txt')
    if os.path.exists('./testing_set.txt'):
        os.remove('./testing_set.txt')

    for character_folder in character_folders:    # loop over the three folders
        # Open the label file in append mode
        with open(f'./{character_folder}_set.txt', 'a') as f_train:
            character_imgs = os.listdir(os.path.join(data_path, character_folder))  # files in the folder
            count = 0
            if character_folder == 'testing':     # the test set has no labels
                for img in character_imgs:
                    f_train.write(os.path.join(data_path, character_folder, img) + '\n')  # path only
                    count += 1
                print(character_folder, count)    # folder name and number of images
            else:
                for img in character_imgs:
                    # path, a tab, then the label taken from the file name
                    f_train.write(os.path.join(data_path, character_folder, img) + '\t' + img[0:img.rfind('_', 1)] + '\n')
                    count += 1
                print(character_folder, count)

Output:

    testing 3347
    validation 3430
    training 9866

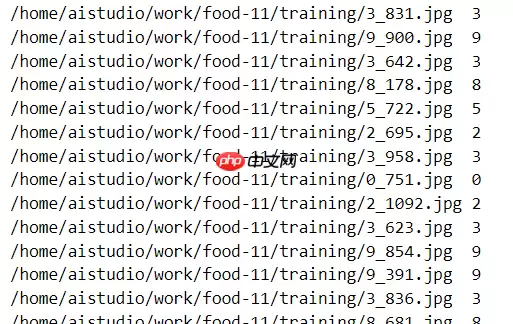

Format of the training and validation label files:

Format of the test label file:

    # Check what the __init__ of the class below will read
    with open('training_set.txt') as f:
        for line in f.readlines():                # read line by line
            info = line.strip().split('\t')       # split on the tab separator
            if len(info) > 1:                     # the line has both a path and a label
                print([info[0].strip(), info[1].strip()])
                break

Output:

    ['/home/aistudio/work/food-11/training/2_1043.webp', '2']
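Before building the dataset, it can be worth checking how many samples each class has. The sketch below assumes the tab-separated `path<TAB>label` format written by the label-file step. `count_labels` is a hypothetical helper, and the demo lines are made-up paths rather than real files.

```python
from collections import Counter


def count_labels(lines):
    """Count samples per class from 'path<TAB>label' lines."""
    counts = Counter()
    for line in lines:
        parts = line.strip().split("\t")
        if len(parts) != 2:  # skip blank or malformed lines
            continue
        counts[int(parts[1])] += 1
    return counts


# Made-up example lines in the same format as training_set.txt
demo = [
    "/tmp/food-11/training/2_1043.webp\t2\n",
    "/tmp/food-11/training/2_7.webp\t2\n",
    "/tmp/food-11/training/10_3.webp\t10\n",
    "\n",
]
print(count_labels(demo))
```

With the real file, passing the open file object (for example `count_labels(open('training_set.txt'))`) should give the class distribution of the training set.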

    # Subclass paddle.io.Dataset to wrap the label files
    class FoodDataset(paddle.io.Dataset):
        """
        Dataset backed by '<mode>_set.txt' (one 'path<TAB>label' entry per line)
        """
        def __init__(self, mode='training'):
            """
            Read all (path, label) pairs for the given split
            """
            self.data = []
            with open(f'{mode}_set.txt') as f:
                for line in f.readlines():
                    info = line.strip().split('\t')
                    if len(info) > 1:
                        self.data.append([info[0].strip(), info[1].strip()])

        def __getitem__(self, index):
            """
            Load one image, normalize it, and return the image and its label
            """
            image_file, label = self.data[index]
            img = Image.open(image_file).convert('RGB')      # force 3 channels
            img = img.resize((100, 100), Image.LANCZOS)      # Image.ANTIALIAS is the older alias of LANCZOS
            img = np.array(img).astype('float32')            # float32 array, shape (H, W, C)
            img = img.transpose((2, 0, 1))                   # HWC (rgb, rgb, ...) to CHW (rrr..., ggg..., bbb...)
            img = img / 255.0                                # scale to the range 0-1
            return img, np.array(int(label), dtype='int64')

        def __len__(self):
            """
            Number of samples
            """
            return len(self.data)

    # Training data
    train_dataset = FoodDataset(mode='training')
    # Evaluation data
    eval_dataset = FoodDataset(mode='validation')

    # Sizes of the training and evaluation sets
    print('train size:', len(train_dataset))
    print('eval size:', len(eval_dataset))

    # Inspect one sample: data, shape, and label
    for data, label in train_dataset:
        print(data)
        print(np.array(data).shape)
        print(label)
        break

Output (array abbreviated):

    train size: 9866
    eval size: 3430
    [[[0.30588236 0.2509804  0.1882353  ... 0.19607843 0.19607843 0.19215687]
      ...
      [0.5176471  0.5137255  0.50980395 ... 0.52156866 0.5176471  0.5176471 ]]
     [[0.27058825 0.21568628 0.15294118 ... 0.00392157 0.         0.        ]
      ...
      [0.4509804  0.45490196 0.45882353 ... 0.47058824 0.46666667 0.45882353]]
     [[0.14509805 0.12156863 0.05098039 ... 0.00392157 0.00784314 0.00392157]
      ...
      [0.3137255  0.33333334 0.3372549  ... 0.39215687 0.39607844 0.38431373]]]
    (3, 100, 100)
    2

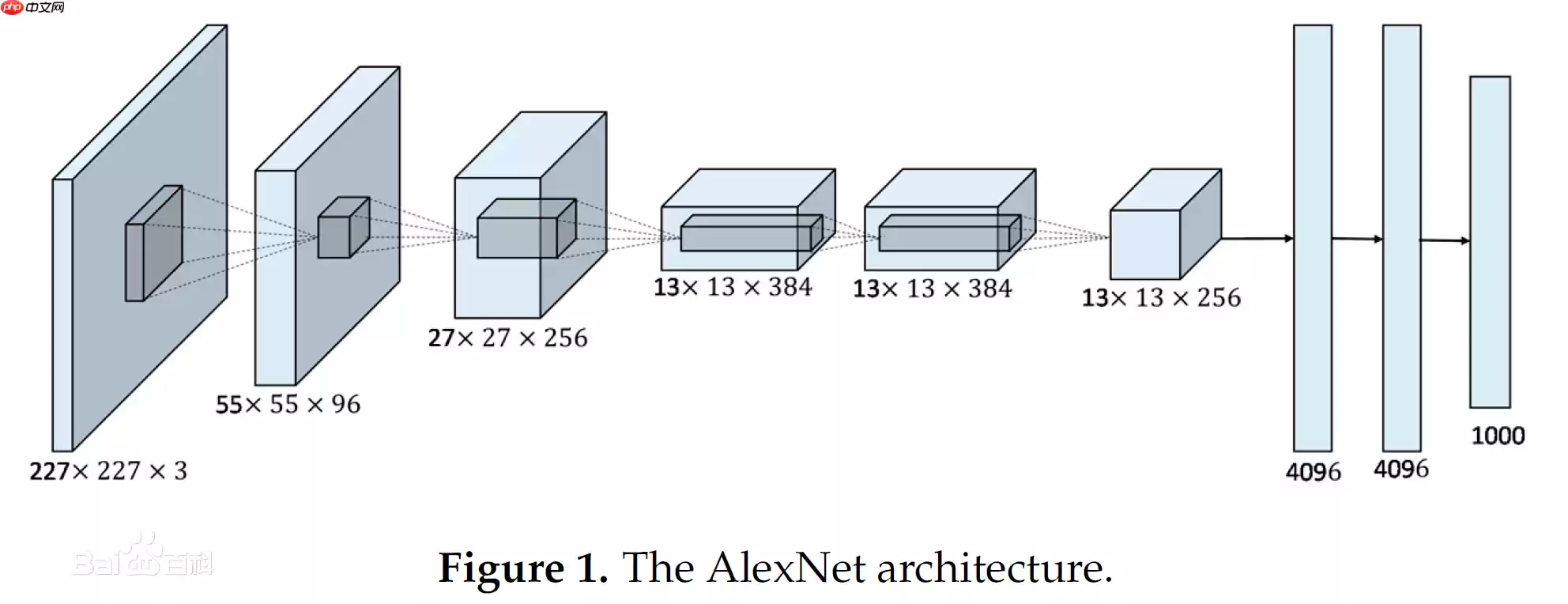

Diagram of the convolutional neural network

    # Subclass paddle.nn.Layer to build the model
    class MyCNN(paddle.nn.Layer):
        def __init__(self):
            super(MyCNN, self).__init__()
            self.conv0 = paddle.nn.Conv2D(in_channels=3, out_channels=20, kernel_size=5, padding=0)   # 2D convolution
            self.pool0 = paddle.nn.MaxPool2D(kernel_size=2, stride=2)                                 # max pooling
            self._batch_norm_0 = paddle.nn.BatchNorm2D(num_features=20)                               # batch normalization

            self.conv1 = paddle.nn.Conv2D(in_channels=20, out_channels=50, kernel_size=5, padding=0)
            self.pool1 = paddle.nn.MaxPool2D(kernel_size=2, stride=2)
            self._batch_norm_1 = paddle.nn.BatchNorm2D(num_features=50)

            self.conv2 = paddle.nn.Conv2D(in_channels=50, out_channels=50, kernel_size=5, padding=0)
            self.pool2 = paddle.nn.MaxPool2D(kernel_size=2, stride=2)

            self.fc1 = paddle.nn.Linear(in_features=4050, out_features=218)   # fully connected layers
            self.fc2 = paddle.nn.Linear(in_features=218, out_features=100)
            self.fc3 = paddle.nn.Linear(in_features=100, out_features=11)

        def forward(self, input):
            # Reshape the input to [N, 3, 100, 100]
            input = paddle.reshape(input, shape=[-1, 3, 100, 100])
            # print(input.shape)
            x = self.conv0(input)        # convolution
            x = F.relu(x)                # activation
            x = self.pool0(x)            # pooling
            x = self._batch_norm_0(x)    # batch normalization

            x = self.conv1(x)
            x = F.relu(x)
            x = self.pool1(x)
            x = self._batch_norm_1(x)

            x = self.conv2(x)
            x = F.relu(x)
            x = self.pool2(x)
            x = paddle.reshape(x, [x.shape[0], -1])   # flatten to [N, 4050]
            # print(x.shape)

            x = self.fc1(x)
            x = F.relu(x)
            x = self.fc2(x)
            x = F.relu(x)
            x = self.fc3(x)
            # Note: paddle.nn.CrossEntropyLoss applies softmax to its input itself,
            # so returning softmax output here means softmax is applied twice during
            # training. Returning x (the logits) is the usual choice; the double
            # softmax may be related to the flat accuracy seen in the training log.
            y = F.softmax(x)             # class probabilities
            return y

    network = MyCNN()                           # instantiate the model
    paddle.summary(network, (1, 3, 100, 100))   # print the model structure

Output:

    ---------------------------------------------------------------------------
     Layer (type)       Input Shape          Output Shape         Param #
    ===========================================================================
       Conv2D-1      [[1, 3, 100, 100]]    [1, 20, 96, 96]         1,520
      MaxPool2D-1    [[1, 20, 96, 96]]     [1, 20, 48, 48]           0
     BatchNorm2D-1   [[1, 20, 48, 48]]     [1, 20, 48, 48]          80
       Conv2D-2      [[1, 20, 48, 48]]     [1, 50, 44, 44]        25,050
      MaxPool2D-2    [[1, 50, 44, 44]]     [1, 50, 22, 22]           0
     BatchNorm2D-2   [[1, 50, 22, 22]]     [1, 50, 22, 22]          200
       Conv2D-3      [[1, 50, 22, 22]]     [1, 50, 18, 18]        62,550
      MaxPool2D-3    [[1, 50, 18, 18]]      [1, 50, 9, 9]            0
       Linear-1         [[1, 4050]]           [1, 218]            883,118
       Linear-2         [[1, 218]]            [1, 100]            21,900
       Linear-3         [[1, 100]]            [1, 11]              1,111
    ===========================================================================
    Total params: 995,529
    Trainable params: 995,249
    Non-trainable params: 280
    ---------------------------------------------------------------------------
    Input size (MB): 0.11
    Forward/backward pass size (MB): 3.37
    Params size (MB): 3.80
    Estimated Total Size (MB): 7.29
    ---------------------------------------------------------------------------
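The shapes and parameter counts in the summary can be checked by hand. The sketch below uses the standard output-size formulas for unpadded convolution and 2x2 pooling; the helper names are illustrative, and the batch-norm count of 4 values per channel (scale, shift, running mean, running variance) is chosen to match the figures the summary reports.

```python
def conv_out(size, kernel, padding=0, stride=1):
    """Spatial size after a convolution."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel=2, stride=2):
    """Spatial size after max pooling."""
    return (size - kernel) // stride + 1


def conv_params(c_in, c_out, kernel):
    """Weights plus one bias per output channel."""
    return c_in * c_out * kernel * kernel + c_out


def linear_params(n_in, n_out):
    """Weights plus one bias per output feature."""
    return n_in * n_out + n_out


size, channels, total = 100, 3, 0
# (output channels, followed by batch norm?) for the three conv blocks
for c_out, has_bn in [(20, True), (50, True), (50, False)]:
    total += conv_params(channels, c_out, 5)
    size = pool_out(conv_out(size, 5))
    if has_bn:
        total += 4 * c_out  # 4 values per channel, as counted in the summary
    channels = c_out

flattened = channels * size * size  # input width of the first Linear layer
for n_in, n_out in [(flattened, 218), (218, 100), (100, 11)]:
    total += linear_params(n_in, n_out)

print(size, flattened, total)  # 9 4050 995529
```

This is where the `in_features=4050` of the first fully connected layer comes from: 50 channels of 9 x 9 feature maps.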

    {'total_params': 995529, 'trainable_params': 995249}

    model = paddle.Model(network)   # wrap the network in the high-level API

    # Configure the optimizer, loss function, and metric
    model.prepare(paddle.optimizer.Adam(learning_rate=0.0001, parameters=model.parameters()),
                  paddle.nn.CrossEntropyLoss(),
                  paddle.metric.Accuracy())

    # Callback for the VisualDL training visualization tool
    visualdl = paddle.callbacks.VisualDL(log_dir='visualdl_log')

    # Run the full training loop
    model.fit(train_dataset,          # training dataset
              eval_dataset,           # evaluation dataset
              epochs=5,               # number of epochs
              batch_size=64,          # batch size
              verbose=1,              # log display mode
              callbacks=[visualdl])   # enable visualization
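The step counts that `model.fit` reports per epoch follow from the dataset sizes and the batch size. A small sketch of that arithmetic, assuming the final partial batch is kept rather than dropped:

```python
import math

train_samples, eval_samples, batch_size = 9866, 3430, 64

train_steps = math.ceil(train_samples / batch_size)
eval_steps = math.ceil(eval_samples / batch_size)

# Size of the final, partial batch in each split
last_train_batch = train_samples - (train_steps - 1) * batch_size
last_eval_batch = eval_samples - (eval_steps - 1) * batch_size

print(train_steps, eval_steps, last_train_batch, last_eval_batch)  # 155 54 10 38
```

The loss printed at the end of each epoch belongs to that final batch, so it is computed over only 10 training images or 38 validation images.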

Output:

    The loss value printed in the log is the current step, and the metric is the average value of previous step.
    Epoch 1/5
    step 155/155 [==============================] - loss: 2.5430 - acc: 0.1008 - 473ms/step
    Eval begin...
    The loss value printed in the log is the current batch, and the metric is the average value of previous step.
    step 54/54 [==============================] - loss: 2.5167 - acc: 0.1055 - 557ms/step
    Eval samples: 3430
    Epoch 2/5
    step 155/155 [==============================] - loss: 2.4430 - acc: 0.1008 - 473ms/step
    Eval begin...
    The loss value printed in the log is the current batch, and the metric is the average value of previous step.
    step 54/54 [==============================] - loss: 2.5167 - acc: 0.1055 - 559ms/step
    Eval samples: 3430
    Epoch 3/5
    step 155/155 [==============================] - loss: 2.4430 - acc: 0.1008 - 475ms/step
    Eval begin...
    The loss value printed in the log is the current batch, and the metric is the average value of previous step.
    step 54/54 [==============================] - loss: 2.5167 - acc: 0.1055 - 558ms/step
    Eval samples: 3430
    Epoch 4/5
    step 155/155 [==============================] - loss: 2.5430 - acc: 0.1008 - 474ms/step
    Eval begin...
    The loss value printed in the log is the current batch, and the metric is the average value of previous step.
    step 54/54 [==============================] - loss: 2.5167 - acc: 0.1055 - 560ms/step
    Eval samples: 3430
    Epoch 5/5
    step 155/155 [==============================] - loss: 2.4430 - acc: 0.1008 - 474ms/step
    Eval begin...
    The loss value printed in the log is the current batch, and the metric is the average value of previous step.
    step 54/54 [==============================] - loss: 2.5167 - acc: 0.1055 - 557ms/step
    Eval samples: 3430
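The loss values in this log barely move, and they happen to match a simple calculation. The sketch below is one plausible reading, not something the source confirms. It assumes the network's softmax output has saturated to a one-hot vector and that the loss function applies softmax to that output a second time. The helper names are illustrative, and the batch sizes 10 and 38 are the sizes of the last training and validation batches (9866 and 3430 samples at batch size 64).

```python
import math

NUM_CLASSES = 11


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    s = sum(exps)
    return [e / s for e in exps]


def cross_entropy_on_probs(probs, target):
    """Cross-entropy when the loss applies softmax to already-normalized output."""
    return -math.log(softmax(probs)[target])


# Assumption: the model always outputs a one-hot vector for class 0
one_hot = [1.0] + [0.0] * (NUM_CLASSES - 1)

loss_wrong = cross_entropy_on_probs(one_hot, target=5)  # prediction is wrong
loss_right = cross_entropy_on_probs(one_hot, target=0)  # prediction is right

# Mean loss over a batch in which exactly one sample is predicted correctly
batch_of_10 = (9 * loss_wrong + loss_right) / 10
batch_of_38 = (37 * loss_wrong + loss_right) / 38

print(f"{loss_wrong:.4f} {loss_right:.4f} {batch_of_10:.4f} {batch_of_38:.4f}")
# prints: 2.5430 1.5430 2.4430 2.5167
```

Under these assumptions the per-sample loss can only take two values, so the gradient signal is very weak. Returning the raw `fc3` output from `forward` instead of `F.softmax(x)` would be a natural first thing to try.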

    model.save('finetuning/mnist')   # save the model

    def openimg():
        """Load every image listed in testing_set.txt"""
        with open('testing_set.txt') as f:
            test_img = []
            txt = []
            for line in f.readlines():                       # one path per line
                path = line.strip()
                img = Image.open(path).convert('RGB')        # open the image with 3 channels
                img = img.resize((100, 100), Image.LANCZOS)  # same size as in training
                img = np.array(img).astype('float32')        # convert to an array
                img = img.transpose((2, 0, 1))               # HWC (rgb, rgb, ...) to CHW (rrr..., ggg..., bbb...)
                img = img / 255.0                            # scale to 0-1
                txt.append(path)
                test_img.append(img)
            return txt, test_img

    img_path, img = openimg()   # paths and preprocessed images

    from PIL import Image

    site = 255                                                    # index of the image to test
    model_state_dict = paddle.load('finetuning/mnist.pdparams')   # load the saved parameters
    model = MyCNN()                                               # instantiate the model
    model.set_state_dict(model_state_dict)
    model.eval()
    ceshi = model(paddle.to_tensor(img[site]))                    # run the prediction
    print('Predicted class:', np.argmax(ceshi.numpy()))           # index of the highest score
    Image.open(img_path[site])                                    # display the image

Output:

    Predicted class: 0
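A bare class index is hard to read, so it can help to map the model output to class names and show the top few candidates. This is a minimal sketch: `top_k` and `CLASS_NAMES` are illustrative names, the list assumes class indices follow the category order given in the dataset section, and the probabilities are made up rather than taken from the trained model.

```python
# Assumption: class index i corresponds to the i-th category of food-11
CLASS_NAMES = [
    "Bread", "Dairy product", "Dessert", "Egg", "Fried food", "Meat",
    "Noodles/Pasta", "Rice", "Seafood", "Soup", "Vegetable/Fruit",
]


def top_k(probs, k=3):
    """Return the k most likely (class name, probability) pairs."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(CLASS_NAMES[i], probs[i]) for i in ranked[:k]]


# Made-up probabilities for one image, one value per class
probs = [0.40, 0.05, 0.20, 0.05, 0.05, 0.05, 0.05, 0.05, 0.04, 0.03, 0.03]

for name, p in top_k(probs, k=2):
    print(f"{name}: {p:.2f}")
```

With the model above, the list passed in would presumably be something like `ceshi.numpy()[0].tolist()`.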
