Understanding Learning Rates in Paddle 2.0 in One Article

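This project centers on the learning rate, an important hyperparameter in deep learning. Its goals are to learn how to use the Paddle 2.0 learning rate APIs, to compare the performance of different learning rate schedules, and to try implementing custom schedules. Using a bee and wasp classification dataset, models were trained and tested with the 13 built-in Paddle 2.0 schedules as well as two custom ones, CLR and Adjust_lr. The custom CLR schedule gave the best result, and LinearWarmup and several others are also worth trying.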

1. Project Overview

The learning rate is an important hyperparameter in deep learning. This project looks at it from three angles:

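- Learn how to use the learning rate APIs built into Paddle 2.0.
- Analyze the strengths and weaknesses of different learning rate schedules.
- Try custom learning rate schedules to reproduce algorithms that Paddle does not currently provide.

So what exactly is the learning rate?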

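The goal of deep learning is to find a function that solves our task, and finding that function means determining its parameters. To do so, we first design a loss function, then look for the parameter values that minimize this loss when our data is fed into the candidate function. This requires repeatedly adjusting the parameters of the candidate function, a process known as gradient descent. The size of each adjustment is controlled by the learning rate, which is why the learning rate is such an important concept in deep learning. Fortunately, the Paddle deep learning framework provides many ready-to-use learning rate schedulers, and this project studies them. For readers who want to design their own schedule, the project also shows how to implement custom learning rate schedulers.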
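To make the role of the learning rate concrete, here is a minimal sketch of gradient descent on the one-dimensional loss f(w) = (w - 3)^2. It is not part of the original project; the function name `gradient_descent` and all values are illustrative assumptions.

```python
def gradient_descent(lr, steps=20, w0=0.0):
    """Minimize f(w) = (w - 3)^2 from w0 using a fixed learning rate."""
    w = w0
    for _ in range(steps):
        grad = 2.0 * (w - 3.0)  # derivative of (w - 3)^2
        w = w - lr * grad       # the learning rate scales every update
    return w


# A small rate converges slowly, a moderate rate converges quickly,
# and a rate above 1.0 overshoots further on every step and diverges.
for lr in (0.01, 0.1, 1.1):
    print(f"lr={lr}: w after 20 steps = {gradient_descent(lr):.4f}")
```

The minimum is at w = 3. Only the moderate rate gets close to it within 20 steps, which is the motivation for schedules that change the rate during training.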

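2. Dataset Used in This Project

This project uses a bee and wasp classification dataset with four classes: bees, wasps, other insects, and other. It contains 3,183 bee images, 4,943 wasp images, 2,439 images of other insects, and 856 images in the other class. The source gives a total of 7,939 images, which is lower than the 11,421 obtained by summing the per-class counts, so the 7,939 figure presumably refers to a subset of the data.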

2.1 Unzipping the Dataset

```python
!unzip -q data/data65386/beesAndwasps.zip -d work/dataset
```

2.2 Viewing the Images

```python
import os
import random

from matplotlib import pyplot as plt
from PIL import Image

# Pick one random image from each class folder
imgs = []
paths = os.listdir('work/dataset')
for path in paths:
    img_path = os.path.join('work/dataset', path)
    if os.path.isdir(img_path):
        img_paths = os.listdir(img_path)
        img = Image.open(os.path.join(img_path, random.choice(img_paths)))
        imgs.append((img, path))

# Show the samples on a 2x3 grid (at most 6 images fit)
f, ax = plt.subplots(2, 3, figsize=(12, 12))
for i, img in enumerate(imgs[:6]):
    ax[i//3, i%3].imshow(img[0])
    ax[i//3, i%3].axis('off')
    ax[i//3, i%3].set_title('label: %s' % img[1])
plt.show()
```

2.3 Data Preprocessing

```python
!python code/preprocess.py
```

```
finished data preprocessing
```

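3. Built-in Learning Rate Schedulers in Paddle 2.0

Paddle 2.0 defines the 13 learning rate algorithms listed below. To compare them fairly, this project uses the same optimizer, Momentum, for every schedule.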

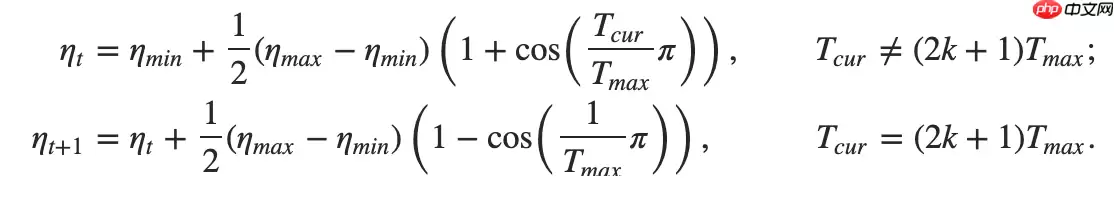

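3.1 Cosine Annealing Learning Rate

Usage: paddle.optimizer.lr.CosineAnnealingDecay(learning_rate, T_max, eta_min=0, last_epoch=-1, verbose=False)

This algorithm comes from the paper SGDR: Stochastic Gradient Descent with Warm Restarts.

It is probably the best-known learning rate schedule.

The update formula, in its closed form, is:

$$\eta_t = \eta_{min} + \frac{1}{2}(\eta_{max} - \eta_{min})\left(1 + \cos\left(\frac{T_{cur}}{T_{max}}\pi\right)\right)$$

Here $\eta_{max}$ is the initial learning_rate, $\eta_{min}$ is eta_min, $T_{cur}$ is the current epoch, and $T_{max}$ is half of the cosine period, in epochs.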
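As a minimal sketch of how this schedule behaves, the closed-form cosine annealing rule can be written in plain Python. The function name `cosine_annealing_lr` and the values 0.1 and 10 are illustrative assumptions; this does not call Paddle and is not the project's training code.

```python
import math


def cosine_annealing_lr(base_lr, epoch, t_max, eta_min=0.0):
    """Closed-form cosine annealing: base_lr at epoch 0, eta_min at epoch t_max."""
    return eta_min + 0.5 * (base_lr - eta_min) * (1.0 + math.cos(math.pi * epoch / t_max))


schedule = [cosine_annealing_lr(0.1, epoch, t_max=10) for epoch in range(11)]
print([round(lr, 4) for lr in schedule])
```

The rate falls slowly at first, fastest around the midpoint, and slowly again as it approaches eta_min.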

```python
## Start training
!python code/train.py --lr 'CosineAnnealingDecay'
```

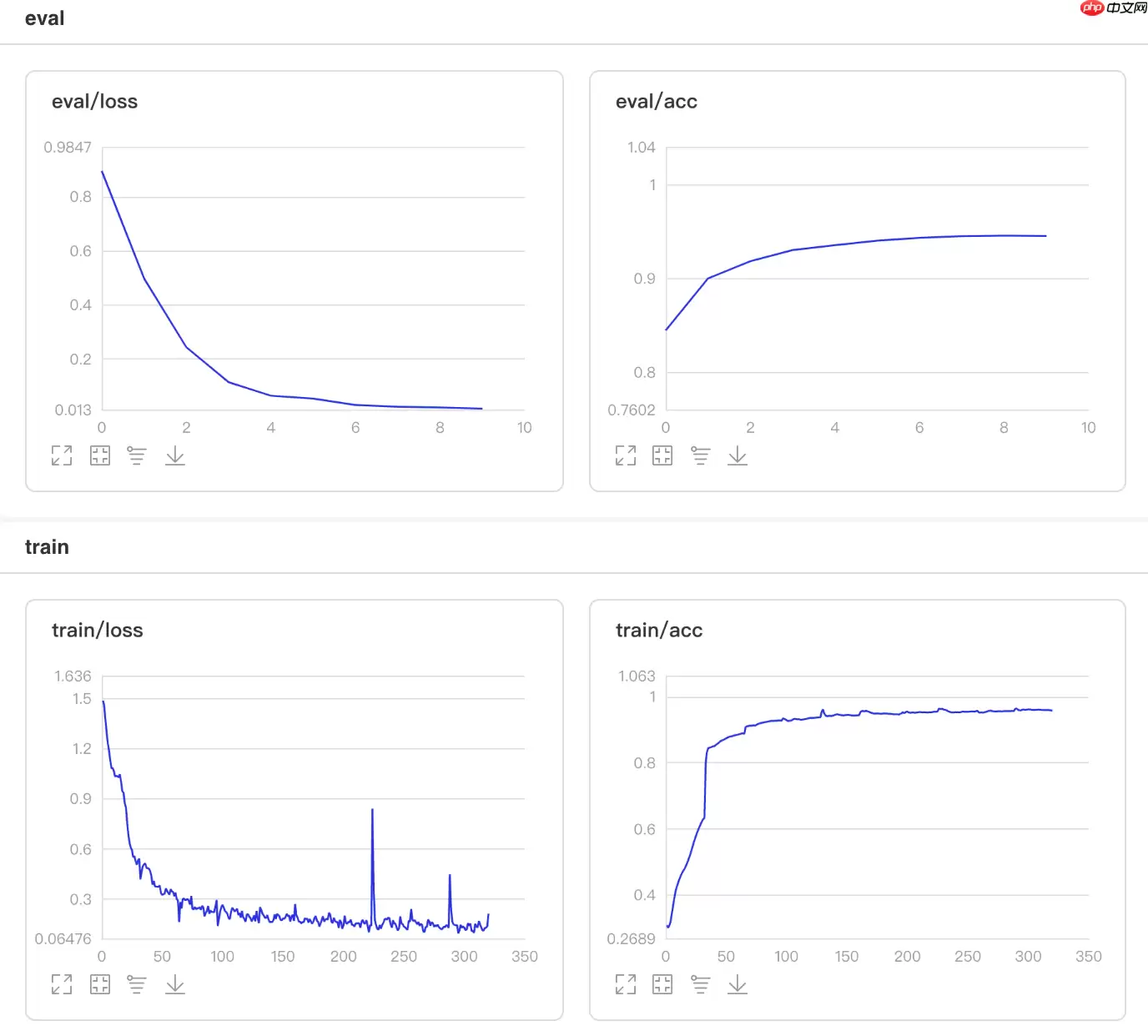

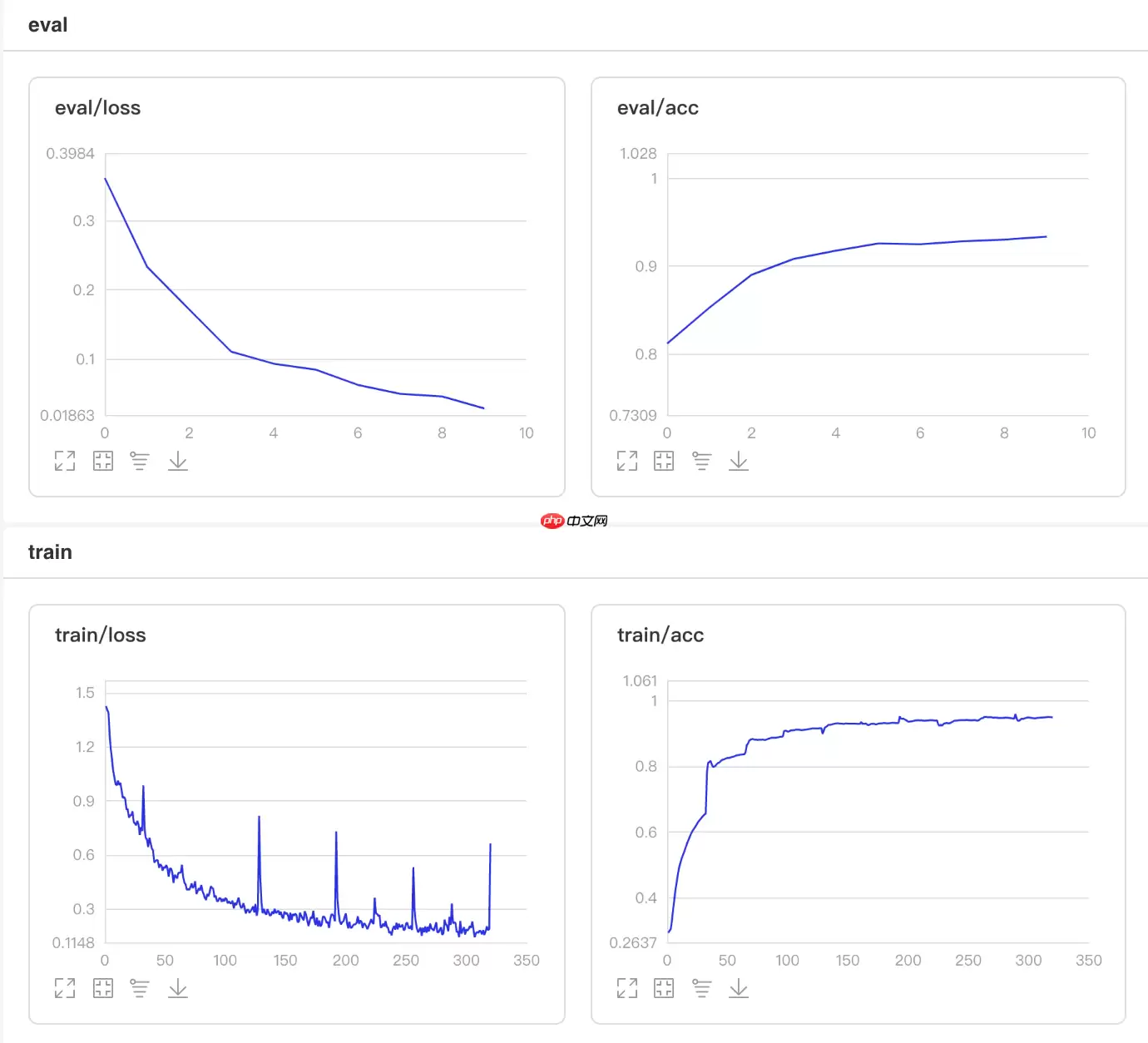

Visualization results

Figure 1. CosineAnnealingDecay training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'CosineAnnealingDecay'
```

```
/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/fluid/layers/utils.py:26: DeprecationWarning: `np.int` is a deprecated alias for the builtin `int`. To silence this warning, use `int` by itself. Doing this will not modify any behavior and is safe. When replacing `np.int`, you may wish to use e.g. `np.int64` or `np.int32` to specify the precision. If you wish to review your current use, check the release note link for additional information.
Deprecated in NumPy 1.20; for more details and guidance: https://numpy.org/devdocs/release/1.20.0-notes.html#deprecations
  def convert_to_list(value, n, name, dtype=np.int):
W0508 22:14:08.330015 4460 device_context.cc:362] Please NOTE: device: 0, GPU Compute Capability: 7.0, Driver API Version: 10.1, Runtime API Version: 10.1
W0508 22:14:08.335014 4460 device_context.cc:372] device: 0, cuDNN Version: 7.6.
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.9472 - 734ms/step
Eval samples: 1763
```

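3.2 Exponential Decay Learning Rate

Usage: paddle.optimizer.lr.ExponentialDecay(learning_rate, gamma, last_epoch=-1, verbose=False)

This API provides a strategy in which the learning rate decays exponentially.

The update formula is:

$$lr_t = lr_{t-1} \cdot \gamma$$

Here $\gamma$ is the gamma argument, so after $t$ epochs the rate is $lr_0 \cdot \gamma^t$, with $lr_0$ the initial learning_rate.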
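A minimal plain-Python sketch of exponential decay follows. The function name `exponential_decay_lr` and the values 0.1 and 0.9 are illustrative assumptions, since the article does not state the gamma used in the project.

```python
def exponential_decay_lr(base_lr, gamma, epoch):
    """Learning rate after `epoch` decay steps: base_lr * gamma ** epoch."""
    return base_lr * gamma ** epoch


for epoch in range(0, 10, 3):
    print(epoch, round(exponential_decay_lr(0.1, 0.9, epoch), 5))
```

Because the rate shrinks by a constant factor every epoch, a gamma well below 1 drives it close to zero within a few epochs.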

```python
## Start training
!python code/train.py --lr 'ExponentialDecay'
```

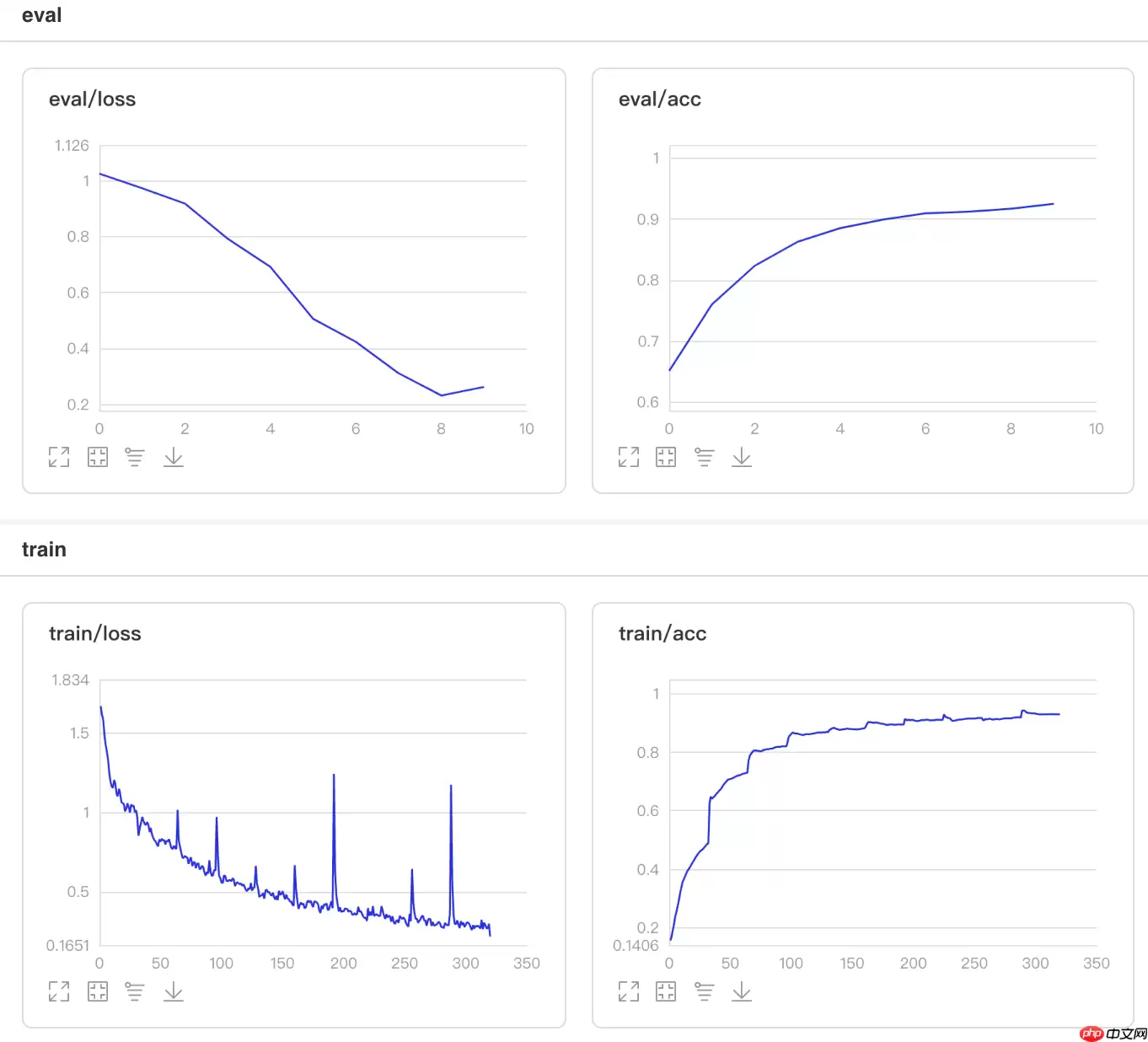

Visualization results

Figure 2. ExponentialDecay training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'ExponentialDecay'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.7686 - 854ms/step
Eval samples: 1763
```

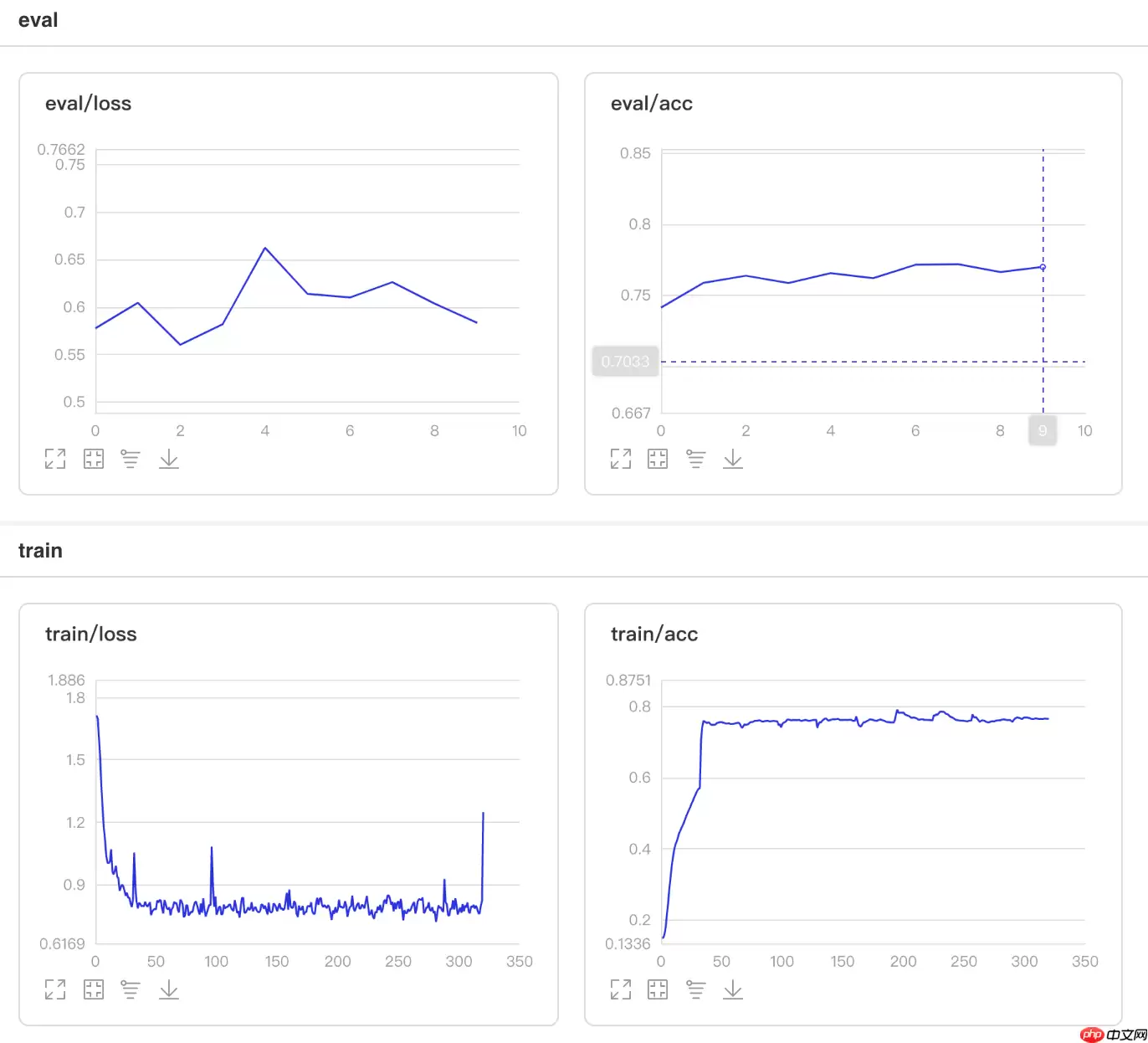

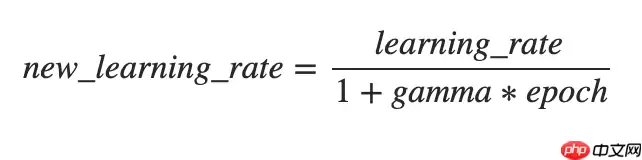

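3.3 Inverse Time Decay Learning Rate

Usage: paddle.optimizer.lr.InverseTimeDecay(learning_rate, gamma, last_epoch=-1, verbose=False)

This API provides an inverse time decay strategy, in which the learning rate is inversely proportional to the number of decay steps taken so far.

The update formula is:

$$lr_t = \frac{lr_0}{1 + \gamma \cdot t}$$

Here $lr_0$ is the initial learning_rate, $\gamma$ is gamma, and $t$ is the current epoch.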
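The inverse time rule can be sketched in plain Python as below. The function name `inverse_time_decay_lr` and the sample values are illustrative assumptions, not the project's settings.

```python
def inverse_time_decay_lr(base_lr, gamma, epoch):
    """Inverse time decay: the rate is divided by (1 + gamma * epoch)."""
    return base_lr / (1.0 + gamma * epoch)


for epoch in (0, 1, 2, 5, 10):
    print(epoch, round(inverse_time_decay_lr(0.1, 0.5, epoch), 5))
```

Compared with exponential decay, the rate drops quickly at first but keeps a long tail, so it never collapses as abruptly.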

```python
## Start training
!python code/train.py --lr 'InverseTimeDecay'
```

Visualization results

Figure 3. InverseTimeDecay training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'InverseTimeDecay'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.9183 - 729ms/step
Eval samples: 1763
```

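3.4 Lambda Decay Learning Rate

Usage: paddle.optimizer.lr.LambdaDecay(learning_rate, lr_lambda, last_epoch=-1, verbose=False)

This API sets the learning rate through a lambda function. lr_lambda is a function that computes a factor from the epoch number, and that factor is multiplied by the initial learning rate.

For example, the following lr_lambda yields a learning rate of learning_rate * 0.95 ** epoch:

```python
lr_lambda = lambda epoch: 0.95 ** epoch
```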
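As a small illustration of the rule described above, the factor returned by lr_lambda is simply multiplied by the initial rate. The helper name `lambda_decay_lr` and the initial rate 0.5 are assumptions made for this sketch; it does not call Paddle.

```python
def lambda_decay_lr(base_lr, lr_lambda, epoch):
    """Learning rate at `epoch`: the initial rate times the user-supplied factor."""
    return base_lr * lr_lambda(epoch)


lr_lambda = lambda epoch: 0.95 ** epoch

for epoch in range(4):
    print(epoch, round(lambda_decay_lr(0.5, lr_lambda, epoch), 6))
```

Any function of the epoch can be plugged in, which makes this the most flexible of the built-in schedules.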

```python
## Start training
!python code/train.py --lr 'LambdaDecay'
```

Visualization results

Figure 4. LambdaDecay training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'LambdaDecay'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.8667 - 731ms/step
Eval samples: 1763
```

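3.5 Linear Warmup Learning Rate

Usage: paddle.optimizer.lr.LinearWarmup(learning_rate, warmup_steps, start_lr, end_lr, last_epoch=-1, verbose=False)

For more details on warmup, the Paddle documentation refers to the paper Bag of Tricks for Image Classification with Convolutional Neural Networks.

This API provides a linear warmup strategy that makes a preliminary adjustment to the learning rate: before the regular schedule takes over, the learning rate is increased gradually.

During warmup, the update formula is:

$$lr_t = lr_{start} + (lr_{end} - lr_{start}) \cdot \frac{t}{T_{warmup}}$$

Here $lr_{start}$ is start_lr, $lr_{end}$ is end_lr, $T_{warmup}$ is warmup_steps, and $t$ is the current epoch. After warmup, the rate follows learning_rate.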
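A minimal sketch of linear warmup in plain Python is shown below. It assumes that the rate after warmup is a constant float; in Paddle, learning_rate may also be another scheduler, which this sketch does not model. The function name `linear_warmup_lr` and the values are illustrative.

```python
def linear_warmup_lr(learning_rate, warmup_steps, start_lr, end_lr, epoch):
    """Ramp linearly from start_lr to end_lr, then hold the regular learning_rate."""
    if epoch < warmup_steps:
        return start_lr + (end_lr - start_lr) * epoch / warmup_steps
    return learning_rate


for epoch in range(8):
    print(epoch, round(linear_warmup_lr(0.1, 5, 0.0, 0.1, epoch), 4))
```

Starting from a small rate avoids large, unstable updates while the weights are still far from a good region.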

```python
## Start training
!python code/train.py --lr 'LinearWarmup'
```

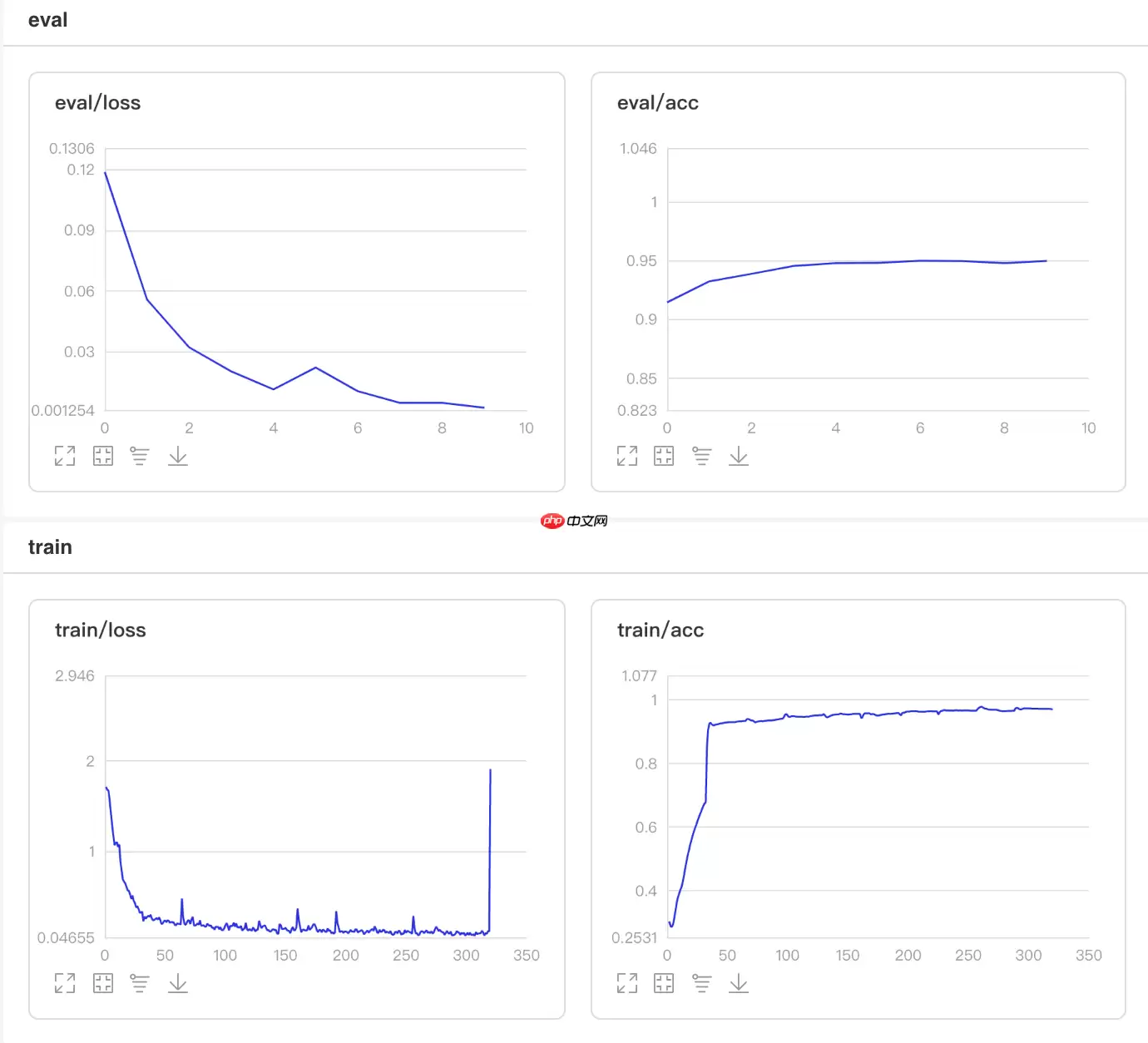

Visualization results

Figure 5. LinearWarmup training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'LinearWarmup'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.9563 - 760ms/step
Eval samples: 1763
```

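3.6 Multi-Step Decay Learning Rate

Usage: paddle.optimizer.lr.MultiStepDecay(learning_rate, milestones, gamma=0.1, last_epoch=-1, verbose=False)

This API provides a strategy in which the learning rate decays at specified epochs.

The update formula is:

$$lr_t = lr_0 \cdot \gamma^{k(t)}$$

Here $lr_0$ is the initial learning_rate, $\gamma$ is gamma, and $k(t)$ is the number of entries in milestones that epoch $t$ has already reached.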
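The milestone rule can be sketched in plain Python as follows. The function name `multi_step_decay_lr` and the milestones [30, 50] are illustrative assumptions; the article does not list the milestones used in the project.

```python
from bisect import bisect_right


def multi_step_decay_lr(base_lr, milestones, gamma, epoch):
    """Multiply base_lr by gamma once for every milestone already reached."""
    passed = bisect_right(sorted(milestones), epoch)
    return base_lr * gamma ** passed


for epoch in (0, 29, 30, 49, 50):
    print(epoch, multi_step_decay_lr(0.5, [30, 50], 0.1, epoch))
```

With the default gamma of 0.1 every milestone cuts the rate tenfold, so milestones placed too early in a short run leave most epochs with a very small rate.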

```python
## Start training
!python code/train.py --lr 'MultiStepDecay'
```

Visualization results

Figure 6. MultiStepDecay training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'MultiStepDecay'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.6982 - 736ms/step
Eval samples: 1763
```

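3.7 Natural Exponential Decay Learning Rate

Usage: paddle.optimizer.lr.NaturalExpDecay(learning_rate, gamma, last_epoch=-1, verbose=False)

This API provides a strategy in which the learning rate decays according to the natural exponential function.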

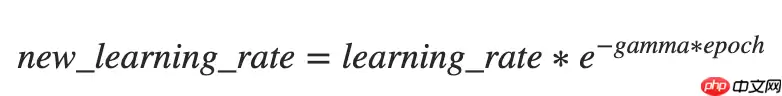

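The update formula is:

$$lr_t = lr_0 \cdot e^{-\gamma t}$$

Here $lr_0$ is the initial learning_rate, $\gamma$ is gamma, and $t$ is the current epoch.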
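A plain-Python sketch of natural exponential decay follows. The function name `natural_exp_decay_lr` and the values 0.1 and 0.5 are illustrative assumptions rather than the project's settings.

```python
import math


def natural_exp_decay_lr(base_lr, gamma, epoch):
    """Natural exponential decay: base_lr * exp(-gamma * epoch)."""
    return base_lr * math.exp(-gamma * epoch)


for epoch in range(5):
    print(epoch, round(natural_exp_decay_lr(0.1, 0.5, epoch), 6))
```

This is the same family as ExponentialDecay with an effective per-epoch factor of exp(-gamma), so gamma = 0.5 already shrinks the rate by about 39% per epoch.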

```python
## Start training
!python code/train.py --lr 'NaturalExpDecay'
```

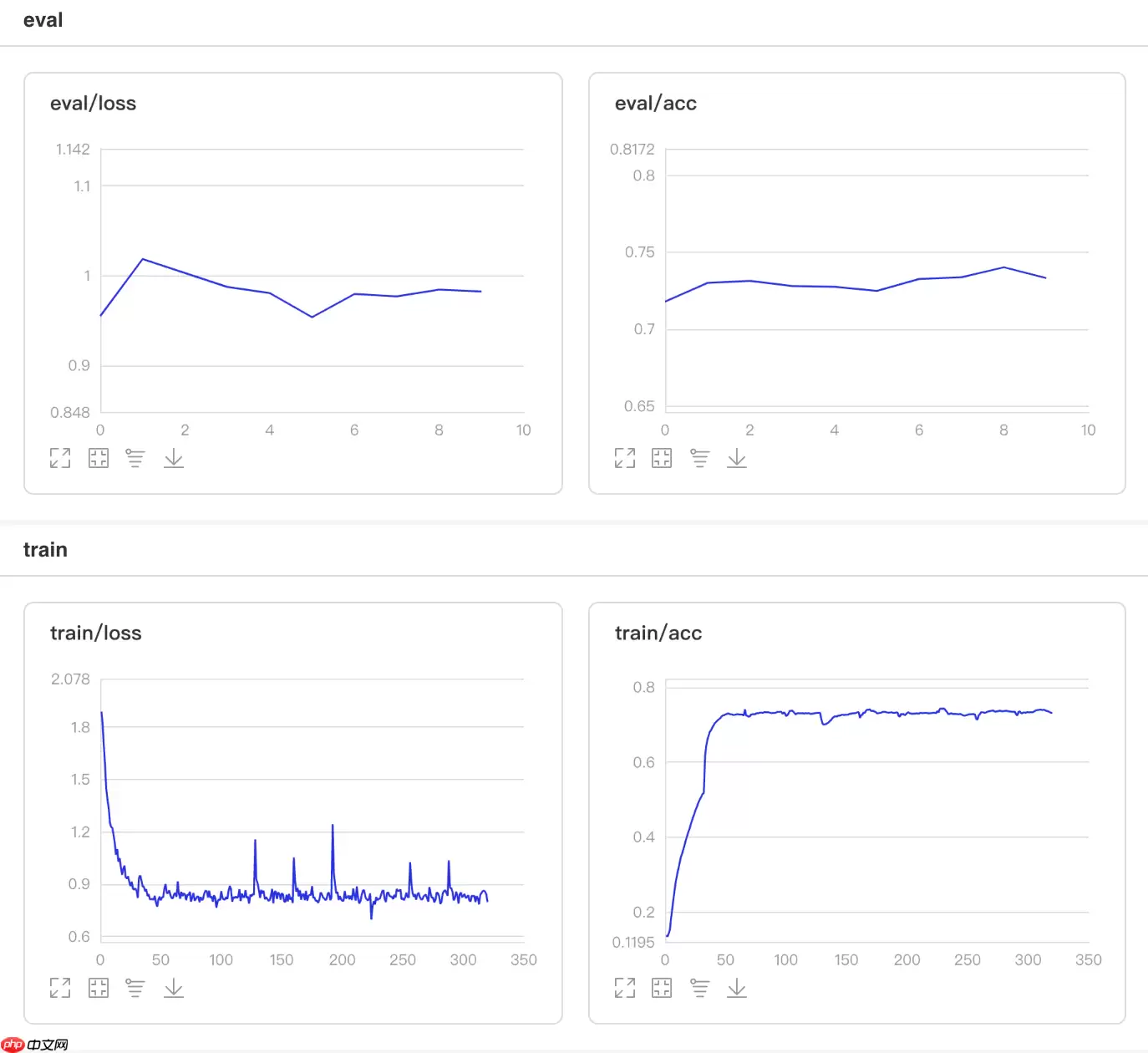

Visualization results

Figure 7. NaturalExpDecay training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'NaturalExpDecay'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.7379 - 726ms/step
Eval samples: 1763
```

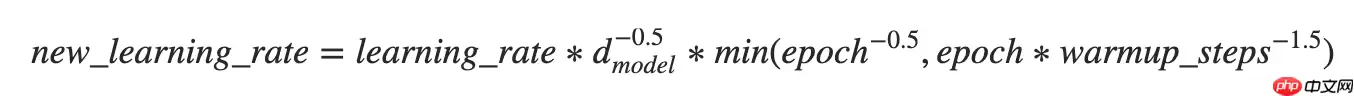

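3.8 Noam Decay Learning Rate

Usage: paddle.optimizer.lr.NoamDecay(d_model, warmup_steps, learning_rate=1.0, last_epoch=-1, verbose=False)

This API provides the Noam decay learning rate strategy.

The update formula is:

$$lr_t = lr_0 \cdot d_{model}^{-0.5} \cdot \min\left(t^{-0.5},\ t \cdot T_{warmup}^{-1.5}\right)$$

Here $lr_0$ is learning_rate, $d_{model}$ is d_model, $T_{warmup}$ is warmup_steps, and $t$ is the current step.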
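The Noam rule combines a linear warmup with an inverse square root decay, which the following plain-Python sketch illustrates. The function name `noam_lr`, the values 512 and 4000, and the special handling of step 0 (to avoid dividing by zero) are assumptions made for this example.

```python
def noam_lr(d_model, warmup_steps, step, learning_rate=1.0):
    """Noam schedule: linear warmup up to warmup_steps, then 1/sqrt(step) decay."""
    a = 1.0 if step == 0 else step ** -0.5   # decay branch
    b = step * warmup_steps ** -1.5          # warmup branch
    return learning_rate * d_model ** -0.5 * min(a, b)


for step in (1, 1000, 4000, 8000, 16000):
    print(step, f"{noam_lr(512, 4000, step):.6f}")
```

The two branches meet at step == warmup_steps, where the rate peaks before decaying.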

```python
## Start training
!python code/train.py --lr 'NoamDecay'
```

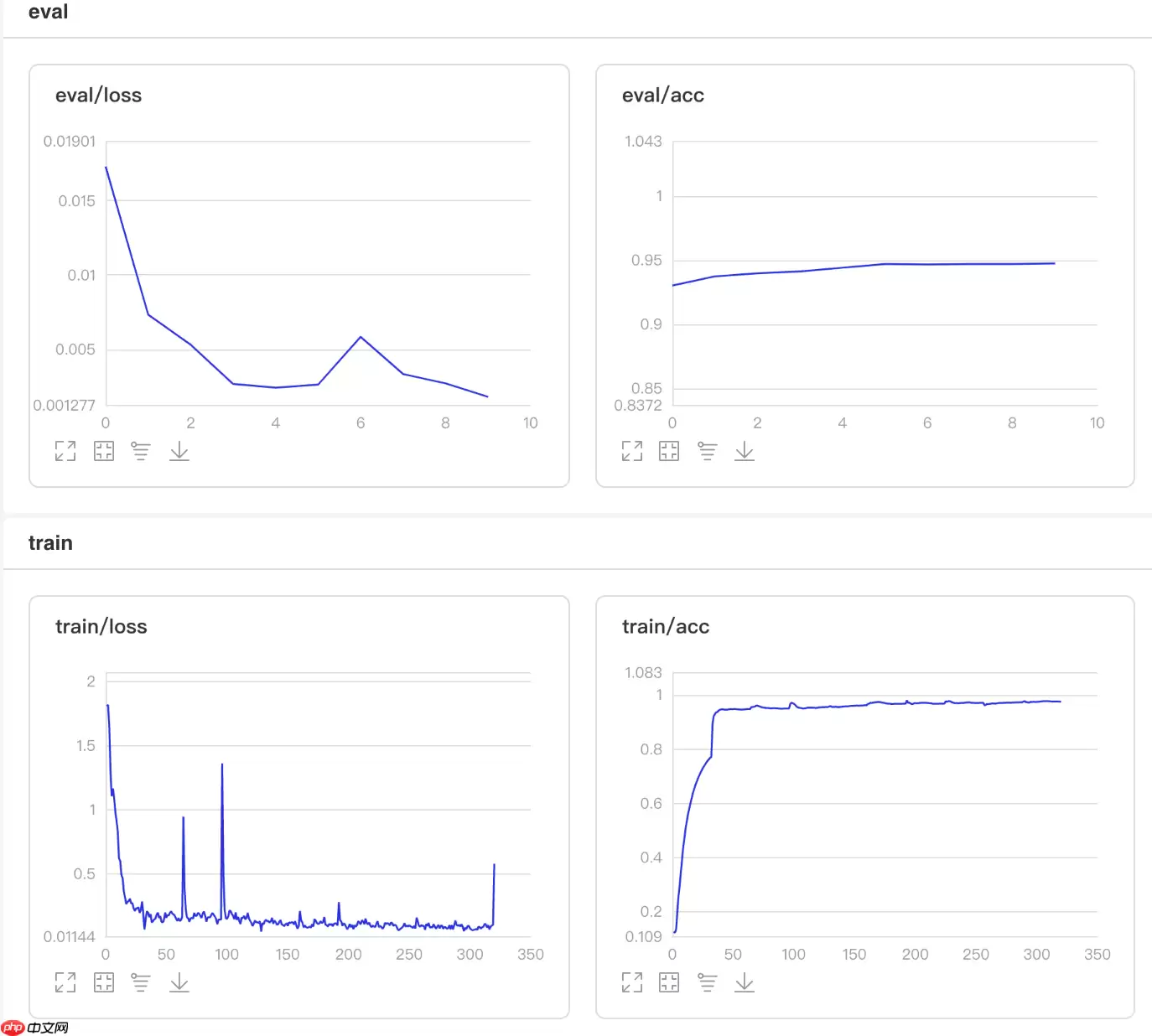

Visualization results

Figure 8. NoamDecay training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'NoamDecay'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.9490 - 749ms/step
Eval samples: 1763
```

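3.9 Piecewise Decay Learning Rate

Usage: paddle.optimizer.lr.PiecewiseDecay(boundaries, values, last_epoch=-1, verbose=False)

This API provides a strategy that sets the learning rate piecewise: boundaries lists the epochs at which the rate changes, and values lists the rate used in each resulting interval.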
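A minimal plain-Python sketch of the piecewise lookup is shown below. The function name `piecewise_decay_lr` and the boundaries and values are illustrative assumptions; values needs one more entry than boundaries because n boundaries split training into n + 1 intervals.

```python
from bisect import bisect_right


def piecewise_decay_lr(boundaries, values, epoch):
    """Return the constant rate assigned to the interval containing `epoch`."""
    if len(values) != len(boundaries) + 1:
        raise ValueError("values must have exactly one more entry than boundaries")
    return values[bisect_right(boundaries, epoch)]


boundaries, values = [100, 200], [1.0, 0.5, 0.1]
for epoch in (0, 99, 100, 199, 200):
    print(epoch, piecewise_decay_lr(boundaries, values, epoch))
```

Unlike MultiStepDecay, the rates in each interval are given explicitly and need not be related by a common factor.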

```python
## Start training
!python code/train.py --lr 'PiecewiseDecay'
```

Visualization results

Figure 9. PiecewiseDecay training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'PiecewiseDecay'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.9416 - 749ms/step
Eval samples: 1763
```

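3.10 Polynomial Decay Learning Rate

Usage: paddle.optimizer.lr.PolynomialDecay(learning_rate, decay_steps, end_lr=0.0001, power=1.0, cycle=False, last_epoch=-1, verbose=False)

This API provides a strategy in which the learning rate decays polynomially. The polynomial decay function lowers the learning rate gradually from the initial learning_rate to end_lr.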
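The decay from learning_rate to end_lr can be sketched in plain Python as below. This covers only the cycle=False case, and the function name `polynomial_decay_lr` and the sample values are illustrative assumptions.

```python
def polynomial_decay_lr(base_lr, decay_steps, epoch, end_lr=0.0001, power=1.0):
    """Polynomial decay (cycle=False): reach end_lr at decay_steps and stay there."""
    epoch = min(epoch, decay_steps)
    return (base_lr - end_lr) * (1.0 - epoch / decay_steps) ** power + end_lr


for epoch in (0, 5, 10, 20):
    print(epoch, round(polynomial_decay_lr(0.1, 10, epoch), 5))
```

With power=1.0 the decay is a straight line; larger powers drop faster early on and flatten out near end_lr.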

```python
## Start training
!python code/train.py --lr 'PolynomialDecay'
```

Visualization results

Figure 10. PolynomialDecay training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'PolynomialDecay'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.9325 - 753ms/step
Eval samples: 1763
```

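3.11 Loss-Adaptive Learning Rate

Usage: paddle.optimizer.lr.ReduceOnPlateau(learning_rate, mode='min', factor=0.1, patience=10, threshold=1e-4, threshold_mode='rel', cooldown=0, min_lr=0, epsilon=1e-8, verbose=False)

This API provides a loss-adaptive learning rate strategy. If the loss stops decreasing for more than patience epochs, the learning rate is reduced to learning_rate * factor. After each reduction, a cool-down period of cooldown epochs begins, during which changes in the loss are not monitored and the learning rate is not reduced. After the cool-down period, monitoring of the loss resumes.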
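The patience and cool-down logic described above can be sketched as a small class. This is a simplified illustration under stated assumptions (mode='min', relative threshold, no epsilon check), not Paddle's implementation; the class name `SimpleReduceOnPlateau` is hypothetical.

```python
class SimpleReduceOnPlateau:
    """Simplified loss-adaptive schedule (mode='min', relative threshold)."""

    def __init__(self, learning_rate, factor=0.1, patience=10,
                 threshold=1e-4, cooldown=0, min_lr=0.0):
        self.lr = learning_rate
        self.factor = factor
        self.patience = patience
        self.threshold = threshold
        self.cooldown = cooldown
        self.min_lr = min_lr
        self.best = float("inf")
        self.bad_epochs = 0      # epochs without a meaningful improvement
        self.cooldown_left = 0   # epochs remaining in the cool-down period

    def step(self, loss):
        if self.cooldown_left > 0:
            # During cool-down the loss is not monitored at all.
            self.cooldown_left -= 1
            return self.lr
        if loss < self.best * (1.0 - self.threshold):
            self.best = loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        if self.bad_epochs > self.patience:
            self.lr = max(self.lr * self.factor, self.min_lr)
            self.cooldown_left = self.cooldown
            self.bad_epochs = 0
        return self.lr


scheduler = SimpleReduceOnPlateau(0.1, factor=0.5, patience=2)
losses = [1.0, 0.8, 0.8, 0.8, 0.8, 0.8]
history = [scheduler.step(loss) for loss in losses]
print(history)
```

In this run the loss stalls at 0.8, and the rate is halved once the number of stalled epochs exceeds patience. Because this schedule needs the loss at every step, it cannot be driven by the epoch counter alone, which is consistent with the project using a separate training script for it.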

```python
## Start training
!python code/train_reduceonplateau.py
```

Visualization results

Figure 11. ReduceOnPlateau training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'ReduceOnPlateau'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.9365 - 706ms/step
Eval samples: 1763
```

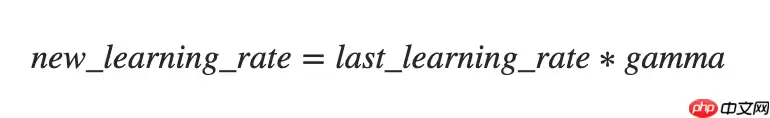

3.12 Step Decay Learning Rate

Usage: paddle.optimizer.lr.StepDecay(learning_rate, step_size, gamma=0.1, last_epoch=-1, verbose=False)

This API provides a strategy in which the learning rate decays at a fixed interval of epochs.
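A plain-Python sketch of fixed-interval decay follows. The function name `step_decay_lr` and the values are illustrative assumptions; the article does not state the step_size or gamma used in the project.

```python
def step_decay_lr(base_lr, step_size, gamma, epoch):
    """Multiply base_lr by gamma once every step_size epochs."""
    return base_lr * gamma ** (epoch // step_size)


for epoch in (0, 29, 30, 59, 60):
    print(epoch, step_decay_lr(0.5, 30, 0.1, epoch))
```

This is the special case of MultiStepDecay in which the milestones are evenly spaced.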

```python
## Start training
!python code/train.py --lr 'StepDecay'
```

Visualization results

Figure 12. StepDecay training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'StepDecay'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.5315 - 733ms/step
Eval samples: 1763
```

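4. Custom Learning Rate Algorithms

A custom learning rate can be built on the base class paddle.optimizer.lr.LRScheduler provided by Paddle 2.0, or written entirely independently of it. Here the cyclical learning rate algorithm CLR is implemented without the base class, while Adjust_lr, which decays the learning rate every fixed number of epochs, is implemented by subclassing paddle.optimizer.lr.LRScheduler.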

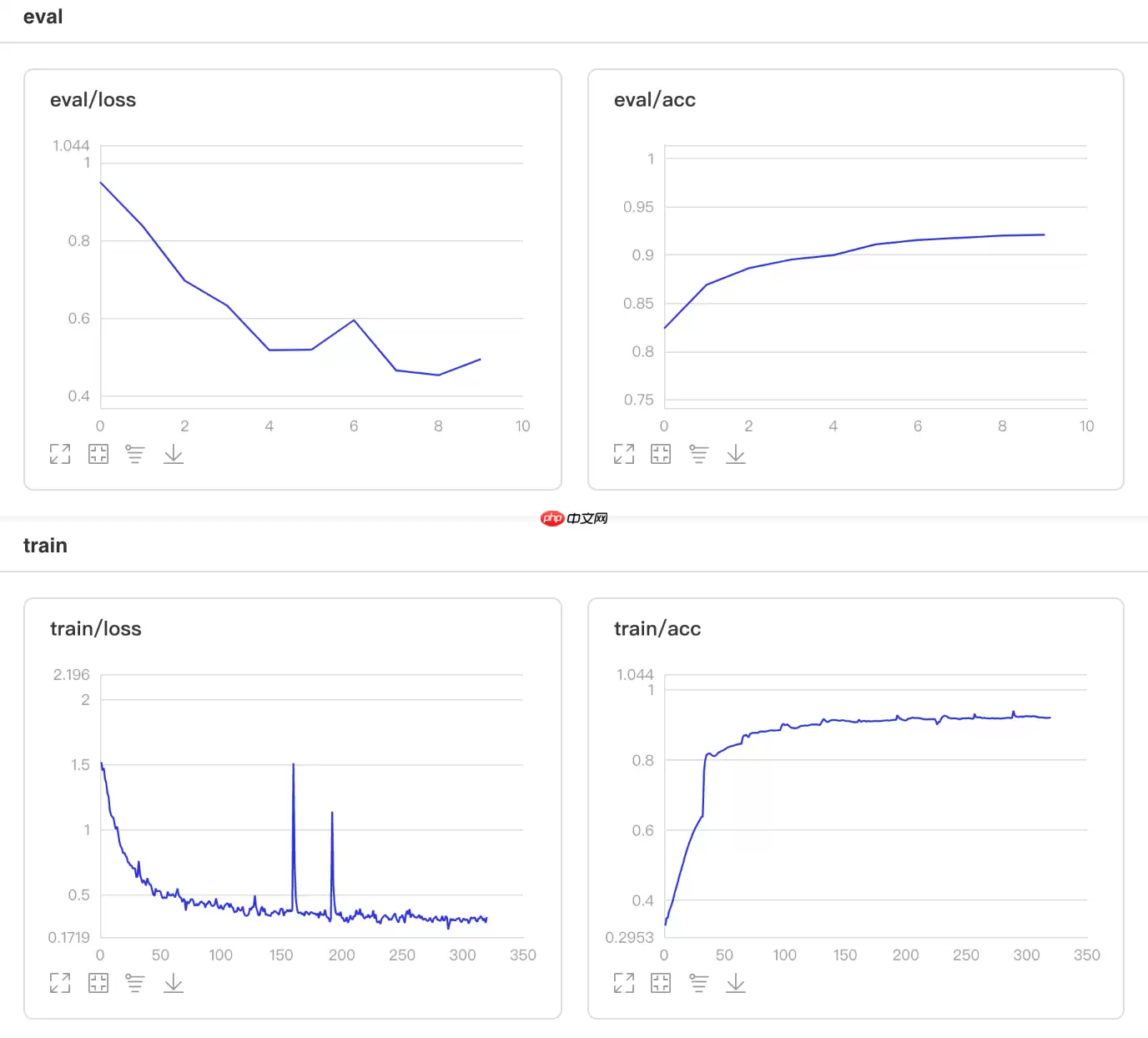

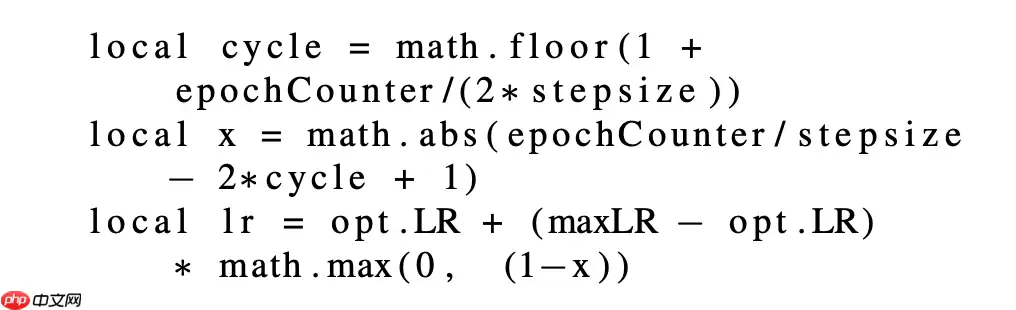

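4.1 Custom Learning Rate Algorithm: CLR

The CLR method comes from the paper Cyclical Learning Rates for Training Neural Networks.

According to the paper, CLR periodically increases and decreases the learning rate during training, so that the learning rate keeps moving around the optimal value.

The paper's basic (triangular) policy updates the rate as follows:

$$cycle = \left\lfloor 1 + \frac{t}{2 \cdot stepsize} \right\rfloor,\quad x = \left|\frac{t}{stepsize} - 2 \cdot cycle + 1\right|,\quad lr_t = lr_{base} + (lr_{max} - lr_{base}) \cdot \max(0,\ 1 - x)$$

Here $t$ is the current iteration, $stepsize$ is half the cycle length, and $lr_{base}$ and $lr_{max}$ are the lower and upper bounds of the learning rate.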
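As a minimal sketch, the triangular policy from the CLR paper can be written in plain Python as below. The article does not say which CLR variant or bounds code/train_clr.py uses, so the function name `triangular_clr` and the values 0.001, 0.006, and 100 are assumptions for illustration only.

```python
import math


def triangular_clr(iteration, base_lr, max_lr, step_size):
    """Triangular cyclical rate: climb for step_size iterations, then descend."""
    cycle = math.floor(1 + iteration / (2 * step_size))
    x = abs(iteration / step_size - 2 * cycle + 1)
    return base_lr + (max_lr - base_lr) * max(0.0, 1.0 - x)


for iteration in (0, 50, 100, 150, 200, 300):
    print(iteration, round(triangular_clr(iteration, 0.001, 0.006, 100), 5))
```

The rate rises linearly from base_lr to max_lr over step_size iterations, falls back over the next step_size iterations, and then repeats.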

```python
## Start training
!python code/train_clr.py
```

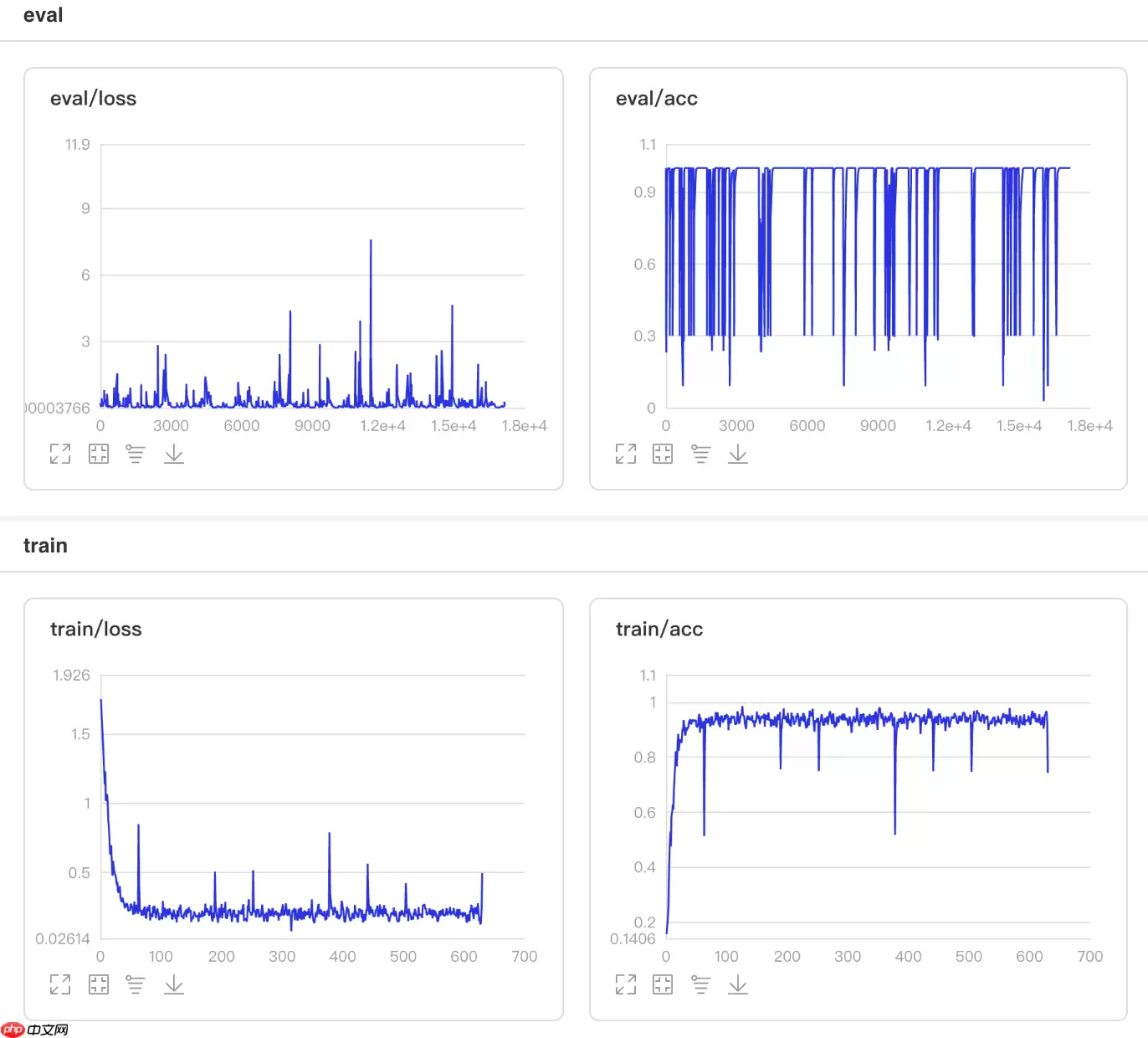

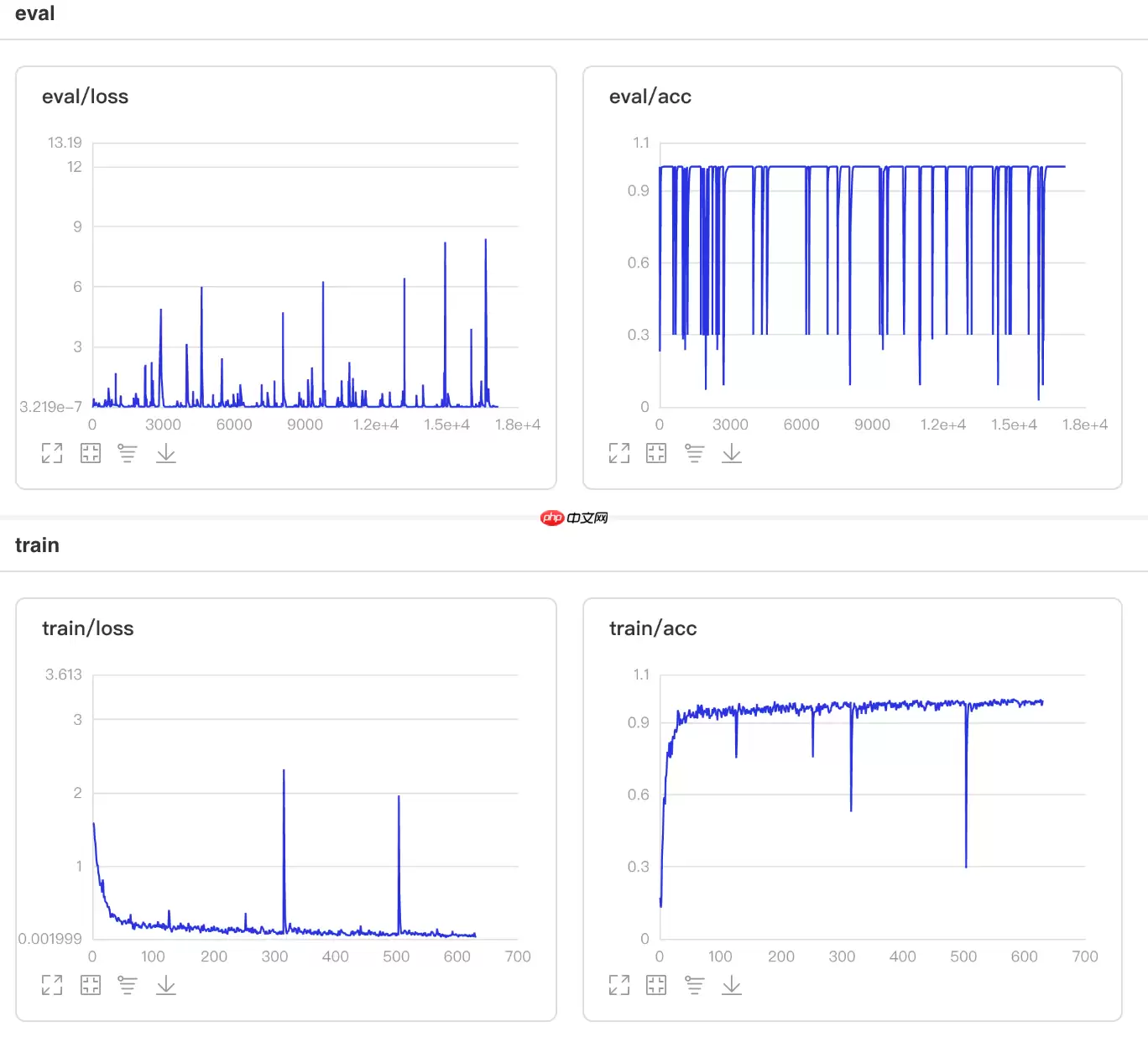

Visualization results

Figure 13. CLR training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'clr'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.9575 - 731ms/step
Eval samples: 1763
```

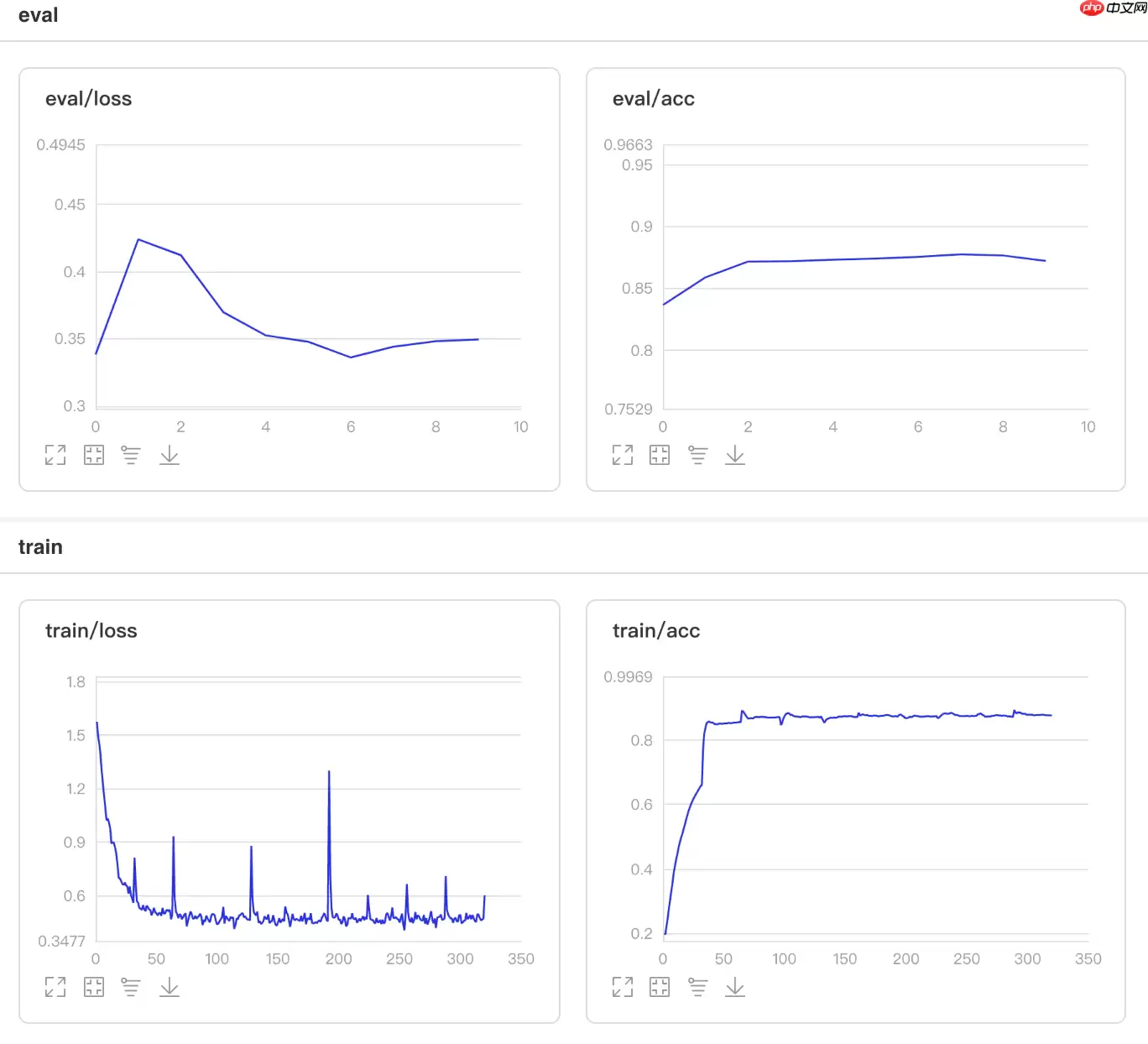

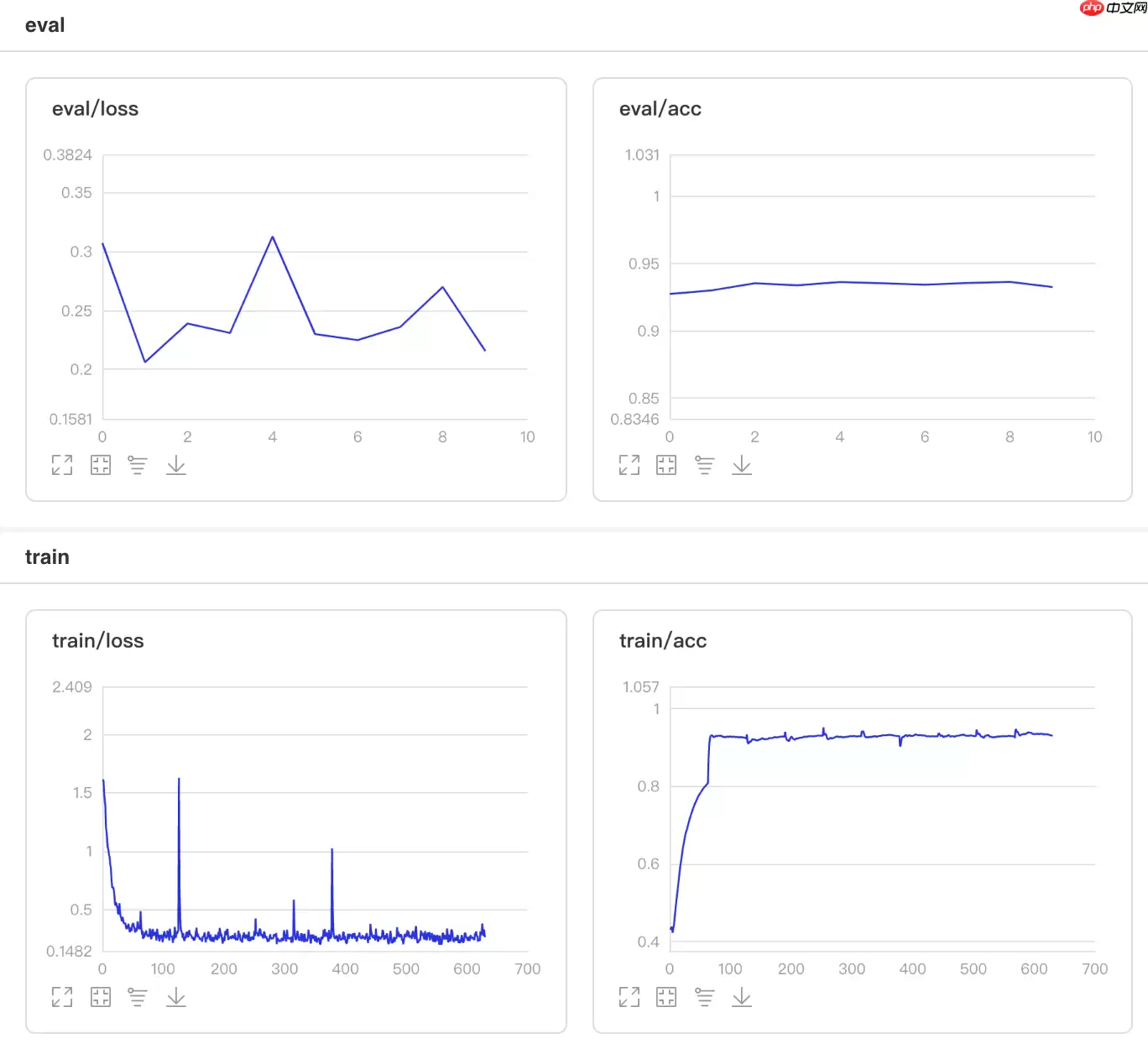

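4.2 Custom Learning Rate Algorithm: Adjust_lr

The Adjust_lr method implemented here works as follows: over the full 10 epochs of training, the learning rate is multiplied by 0.95 every 2 epochs.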
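The rule described above can be sketched in plain Python. The function name `adjust_lr` and the initial rate 0.1 are assumptions, since the article does not give the project's base learning rate. In the project itself this logic lives in a subclass of paddle.optimizer.lr.LRScheduler, whose code is not shown in the article.

```python
def adjust_lr(base_lr, epoch, step_epochs=2, decay=0.95):
    """Multiply the rate by `decay` once every `step_epochs` epochs."""
    return base_lr * decay ** (epoch // step_epochs)


for epoch in range(10):
    print(epoch, round(adjust_lr(0.1, epoch), 6))
```

Over 10 epochs the rate is reduced four times, ending at about 81% of its initial value, so this schedule is much gentler than StepDecay with its default gamma of 0.1.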

```python
## Start training
!python code/train.py --lr 'Adjust_lr'
```

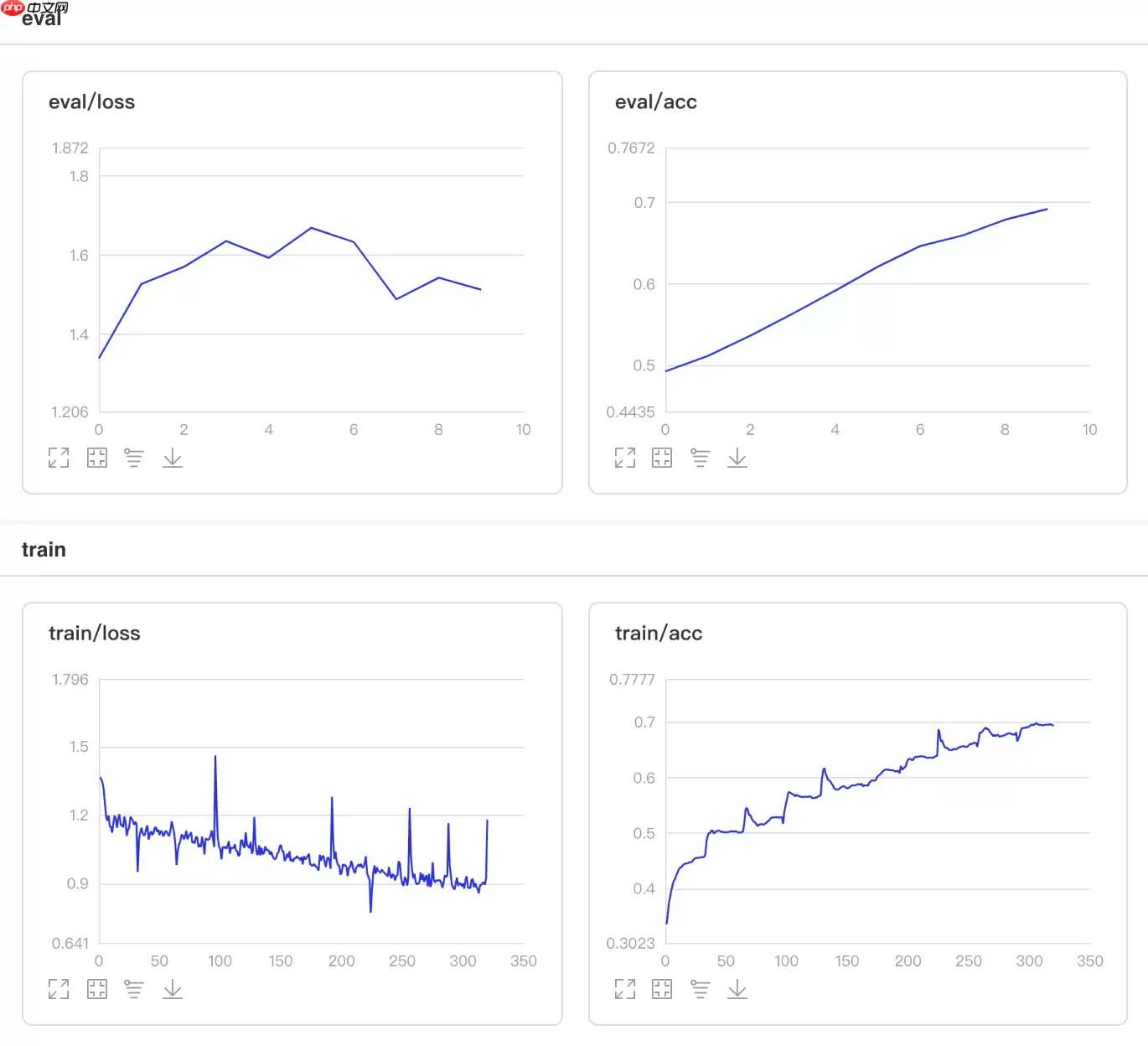

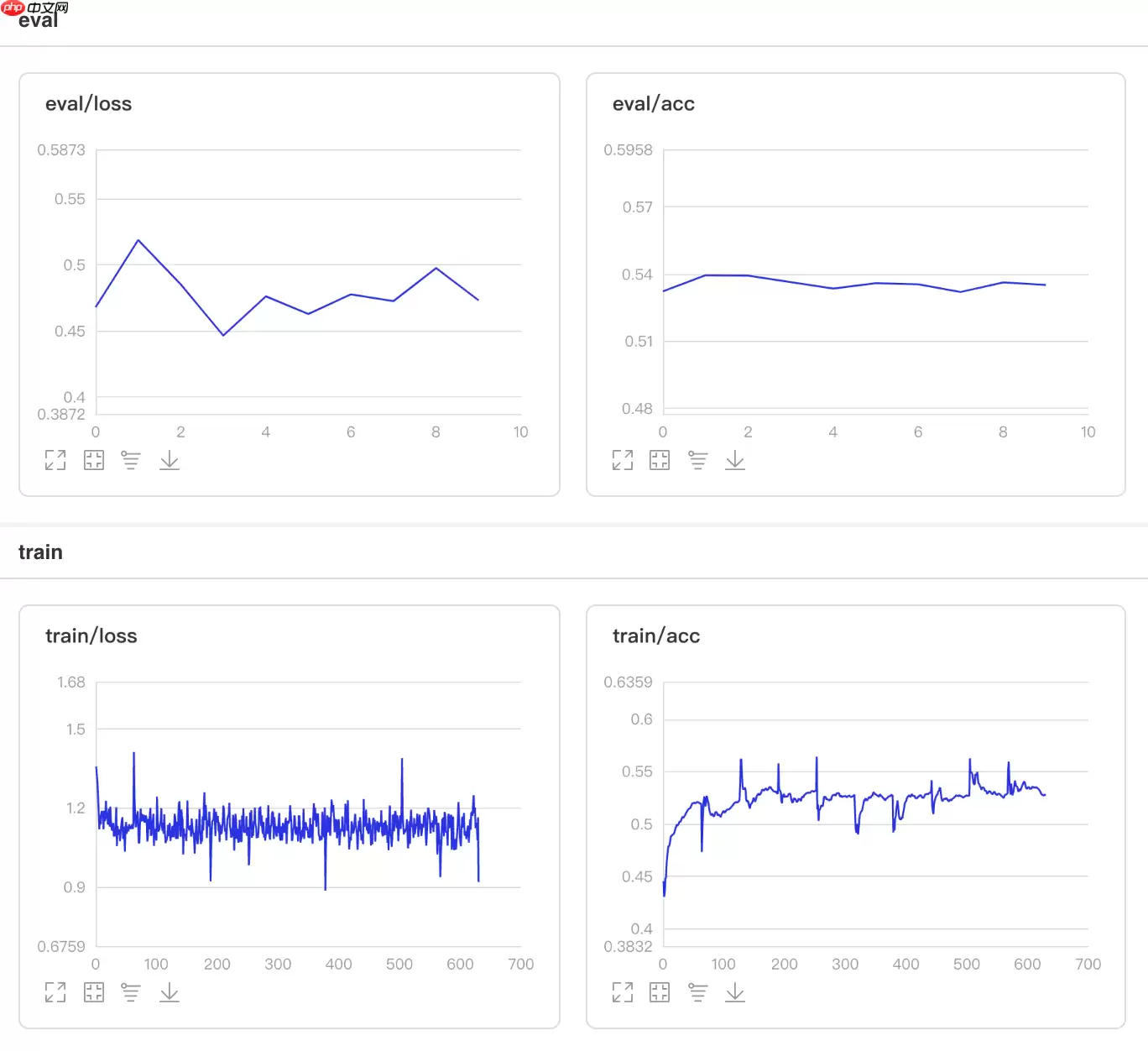

Visualization results

Figure 14. Adjust_lr training and validation curves

```python
## Check performance on the test set
!python code/test.py --lr 'Adjust_lr'
```

```
Eval begin...
The loss value printed in the log is the current batch, and the metric is the average value of previous step.
step 28/28 [==============================] - acc: 0.9308 - 713ms/step
Eval samples: 1763
```

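5. Comparison of Results

The figure below compares all the learning rate schedules tested in this project. The custom cyclical learning rate CLR performed best (test accuracy 0.9575) and is recommended. LinearWarmup (0.9563), NoamDecay (0.9490), CosineAnnealingDecay (0.9472), and PiecewiseDecay (0.9416) come next and are worth trying.

Figure 15. Learning rate performance comparison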
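For reference, the test accuracies printed in the evaluation logs of this article can be collected and ranked with a few lines of Python. The numbers are copied from those logs; the variable names are illustrative.

```python
# Test-set accuracy reported by code/test.py for each schedule in this article
reported_acc = {
    "CosineAnnealingDecay": 0.9472,
    "ExponentialDecay": 0.7686,
    "InverseTimeDecay": 0.9183,
    "LambdaDecay": 0.8667,
    "LinearWarmup": 0.9563,
    "MultiStepDecay": 0.6982,
    "NaturalExpDecay": 0.7379,
    "NoamDecay": 0.9490,
    "PiecewiseDecay": 0.9416,
    "PolynomialDecay": 0.9325,
    "ReduceOnPlateau": 0.9365,
    "StepDecay": 0.5315,
    "CLR": 0.9575,
    "Adjust_lr": 0.9308,
}

# Sort from best to worst accuracy
ranking = sorted(reported_acc.items(), key=lambda item: item[1], reverse=True)
for rank, (name, acc) in enumerate(ranking, start=1):
    print(f"{rank:2d}. {name:<22s} {acc:.4f}")
```

These figures come from a single run per schedule on 1,763 test samples, so small gaps between neighboring entries should be read with caution.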
