A New Way to Play Super Mario with Pose and Voice Control

PaddlePaddle Hackathon Overview

The 2024 PaddlePaddle Hackathon is a deep learning programming event for developers worldwide. It is hosted by PaddlePaddle together with the National Engineering Laboratory for Deep Learning Technology and Application, and co-produced with open-source projects including OpenVINO, MLFlow, KubeFlow, and TVM. Its goal is to encourage developers to learn about and take part in open-source deep learning projects.

Project Overview

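This project combines a pose estimation model and a spoken keyword classification model into a simple, practical new form of human-computer interaction.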


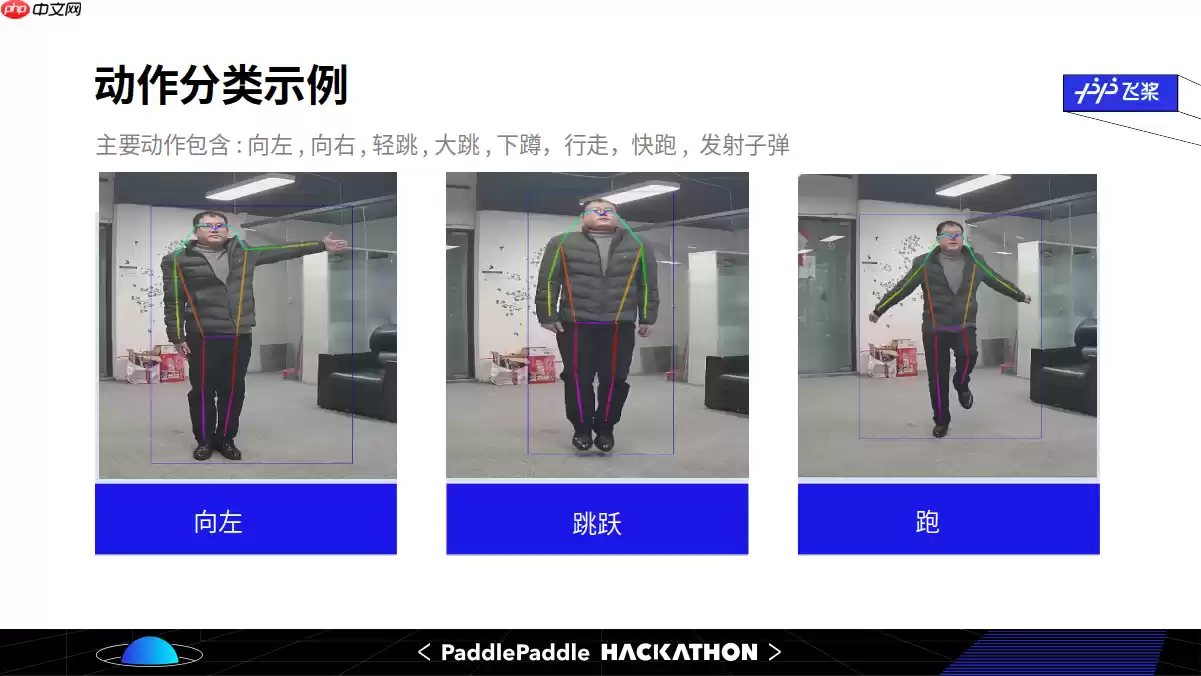

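The demo is built on a PyGame version of Super Mario (PS: feel free to try other fun games too). A pose estimation model extracts geometric and motion features to translate body poses into commands. Playing this way involves quite a lot of movement, so it doubles as a fun workout that can help you lose weight. When you get tired, you can switch to voice mode, which makes the interaction feel even closer to real life.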

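Building on this project, you can let your imagination run further: practicing a foreign language, a fitness app, using PaddleGAN to add a touch of metaverse illusion, or even playing with real people online, and so on.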

GitHub repository for this project: https://github.com/thunder95/Play_Mario_With_PaddlePaddle

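Note: the code was written from scratch within the two-day competition and still has plenty of rough edges, so the project is still being improved. Suggestions are very welcome, and we look forward to learning from each other.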

Demo video on Bilibili: https://www.bilibili.com/video/BV1B64y1i7GM

Functional Modules

Super Mario Game

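A game packed with childhood memories. On GitHub, an expert has already faithfully reproduced it with PyGame, and the author has implemented it up to level 4.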

GitHub repository: https://github.com/justinmeister/Mario-Level-1

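This project makes only minor changes to the interaction code. The original project listens for key presses through PyGame; this project instead places commands from the other modules into a queue, which replaces the keyboard signals.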

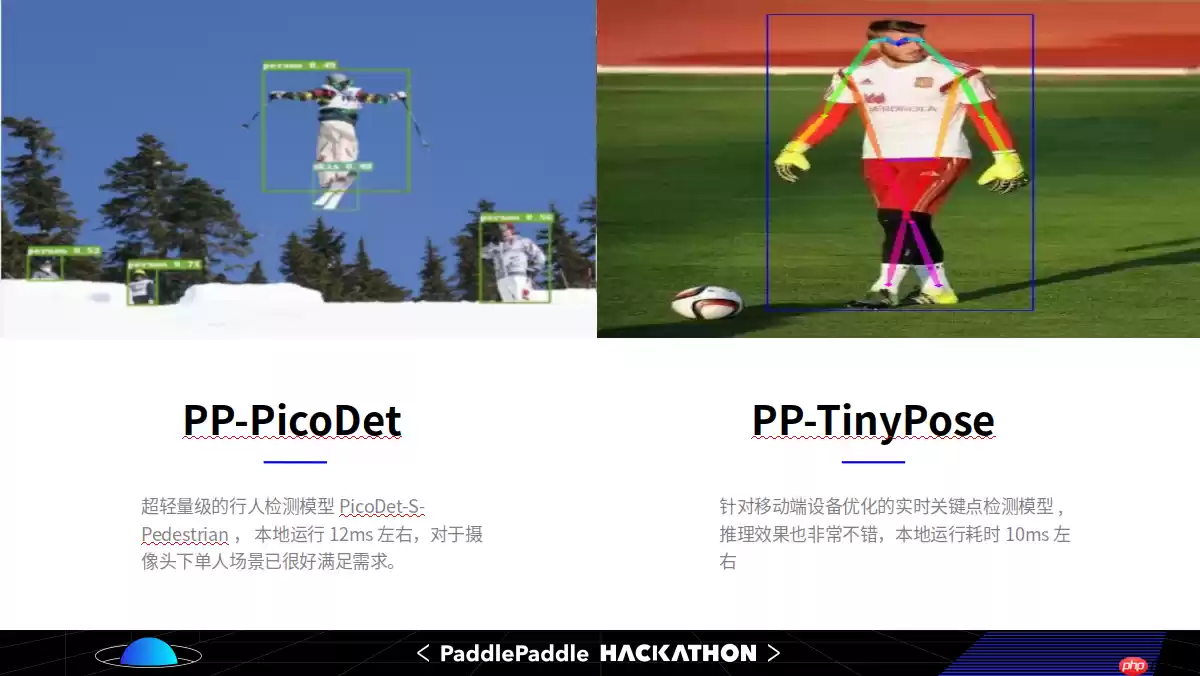

Human Keypoint Estimation

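Because human-computer interaction demands highly real-time inference, we evaluated several models and finally chose PicoDet-S-Pedestrian and PP-TinyPose, both open-sourced in PaddleDetection. Inference takes about 20 ms per frame, and both speed and accuracy meet the requirements.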

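PP-TinyPose is a real-time pose estimation model that PaddleDetection optimized for mobile devices; it runs multi-person pose estimation smoothly on mobile hardware. Building on PicoDet, PaddleDetection's own lightweight detection model, PaddleDetection also provides a dedicated lightweight pedestrian detection model.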

PP-TinyPose link: https://github.com/PaddlePaddle/PaddleDetection/tree/release/2.3/configs/keypoint/tiny_pose

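Because an extra action recognition model would add latency to each command, this project simply classifies the detected keypoints by their coordinates, which is generally good enough.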
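The project's exact rules are not shown in this article. As a minimal sketch, assuming PP-TinyPose returns 17 COCO-order keypoints in image pixel coordinates, a coordinate-only classifier could look like this. The pose-to-command rules, the `margin` value, and the function name `classify_pose` are all hypothetical; distances are normalized by shoulder width so the rules do not depend on how far the player stands from the camera.

```python
import numpy as np

# COCO keypoint indices (assumed output order of PP-TinyPose)
NOSE, L_SHO, R_SHO, L_WRI, R_WRI = 0, 5, 6, 9, 10
L_HIP, R_HIP, L_KNEE, R_KNEE = 11, 12, 13, 14


def classify_pose(kpts, margin=0.5):
    """Map one person's keypoints (17 x 2 array of x, y pixels) to a command.

    Hypothetical rules. Image y grows downward, and "left"/"right" refer to
    directions in the image, not the player's own left and right.
    """
    kpts = np.asarray(kpts, dtype=float)
    shoulder_w = abs(kpts[L_SHO, 0] - kpts[R_SHO, 0]) + 1e-6
    wrists = kpts[[L_WRI, R_WRI]]
    sho_x_min = kpts[[L_SHO, R_SHO], 0].min()
    sho_x_max = kpts[[L_SHO, R_SHO], 0].max()

    if (wrists[:, 1] < kpts[NOSE, 1]).all():
        return "jump"  # both hands raised above the head
    if wrists[:, 0].min() < sho_x_min - margin * shoulder_w:
        return "left"  # a hand stretched out toward the image's left
    if wrists[:, 0].max() > sho_x_max + margin * shoulder_w:
        return "right"  # a hand stretched out toward the image's right
    hip_y = kpts[[L_HIP, R_HIP], 1].mean()
    knee_y = kpts[[L_KNEE, R_KNEE], 1].mean()
    if knee_y - hip_y < 0.5 * shoulder_w:
        return "down"  # hips dropped close to knee height: squatting
    return "stop"
```

Rules like these cost almost nothing per frame, which fits the latency argument above; the trade-off is that thresholds usually need tuning per camera setup.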

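```
# Clone PaddleDetection and run joint pedestrian detection + keypoint inference
!git clone https://github.com/PaddlePaddle/PaddleDetection.git
%cd PaddleDetection
# Assumes the PicoDet and PP-TinyPose inference models have already been exported to output_inference/
!python3 deploy/python/det_keypoint_unite_infer.py --det_model_dir=output_inference/picodet_s_192_pedestrian --keypoint_model_dir=output_inference/tinypose_128x96 --image_file=demo/000000014439.jpg --device=GPU
```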

Speech Classification Training

Collecting Speech Samples

AI Studio does not currently support online recording, so download the code and run it locally:

!python speech_cmd_cls/generate_data.py

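Using the third-party library PyAudio, the script above records audio automatically. You only need to record the player saying 7 keywords; the recording is cut into 500 ms segments, which are saved to the corresponding directories. About 2 to 3 minutes of recording per keyword is enough, and if you have more time you can add more samples as needed.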
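The cutting step can be sketched without a microphone. This is a hedged illustration, not the project's generate_data.py: it assumes 16 kHz mono 16-bit audio already captured into a NumPy array, and `split_into_clips`, `save_clips`, and the file naming are hypothetical. The PyAudio capture itself is left out so the example runs anywhere.

```python
import os
import wave

import numpy as np


def split_into_clips(samples, sr=16000, clip_ms=500):
    """Cut a 1-D int16 recording into fixed-length clips; drop the leftover tail."""
    clip_len = sr * clip_ms // 1000
    n = len(samples) // clip_len
    return [samples[i * clip_len:(i + 1) * clip_len] for i in range(n)]


def save_clips(clips, out_dir, sr=16000, prefix="clip"):
    """Write each clip as a 16-bit mono WAV file under out_dir (one dir per keyword)."""
    os.makedirs(out_dir, exist_ok=True)
    paths = []
    for i, clip in enumerate(clips):
        path = os.path.join(out_dir, f"{prefix}_{i:04d}.wav")
        with wave.open(path, "wb") as wf:
            wf.setnchannels(1)
            wf.setsampwidth(2)  # int16 = 2 bytes per sample
            wf.setframerate(sr)
            wf.writeframes(np.asarray(clip, dtype=np.int16).tobytes())
        paths.append(path)
    return paths
```

For example, 1.2 s of audio at 16 kHz (19200 samples) yields two 8000-sample clips, and the final 0.2 s is discarded.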

Cleaning the Speech Data

Recorded segments that are silent, contain electrical hum, or sound unclear should be moved to the 8th directory (named `其它`, i.e. "other").
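The article does this sorting by hand. As an optional helper, assuming the 16-bit mono WAV clips described above, the silent clips at least could be flagged by their RMS amplitude and moved automatically; `clip_rms`, `move_quiet_clips`, and the threshold of 200 are hypothetical. Hum and unclear speech still need a manual listen.

```python
import os
import shutil
import wave

import numpy as np


def clip_rms(path):
    """Root-mean-square amplitude of a 16-bit mono WAV file."""
    with wave.open(path, "rb") as wf:
        data = np.frombuffer(wf.readframes(wf.getnframes()), dtype=np.int16)
    if data.size == 0:
        return 0.0
    return float(np.sqrt(np.mean(data.astype(np.float64) ** 2)))


def move_quiet_clips(keyword_dir, other_dir, rms_threshold=200.0):
    """Move clips whose RMS is below a (hypothetical) threshold into other_dir.

    The keyword directory name is prefixed to each moved file to avoid
    name collisions between keywords.
    """
    os.makedirs(other_dir, exist_ok=True)
    moved = []
    for name in sorted(os.listdir(keyword_dir)):
        if not name.endswith(".wav"):
            continue
        src = os.path.join(keyword_dir, name)
        if clip_rms(src) < rms_threshold:
            dst = os.path.join(other_dir, os.path.basename(keyword_dir) + "_" + name)
            shutil.move(src, dst)
            moved.append(dst)
    return moved
```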

Preprocessing the Speech Data

Using the third-party library librosa, the script loads each audio file, extracts mel-spectrogram features, and filters out low-decibel audio frames.

!python speech_cmd_cls/preprocess.py

PS: the folder speech_cmd_cls/data contains recordings of the author's own voice, provided for convenient testing.

```
# Data preprocessing
!unzip speech_cmd_cls.zip
%cd speech_cmd_cls/
!python preprocess.py
```

```
/home/aistudio/speech_cmd_cls
标签名: ['左', '右', '下', '停', '跑', '跳', '打', '其它']
preprocess data finished
```

The eight label names (标签名) are left, right, down, stop, run, jump, attack, and other.

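```python
# A simple custom LSTM network with attention
import paddle
from paddle import nn


class SpeechCommandModel(nn.Layer):
    def __init__(self, num_classes=10):
        super(SpeechCommandModel, self).__init__()
        # Convolutional front end: conv1 expects 126 input channels
        self.conv1 = nn.Conv2D(126, 10, (5, 1), padding="SAME")
        self.relu1 = nn.ReLU()
        self.bn1 = nn.BatchNorm2D(10)
        self.conv2 = nn.Conv2D(10, 1, (5, 1), padding="SAME")
        self.relu2 = nn.ReLU()
        self.bn2 = nn.BatchNorm2D(1)
        # Two stacked bidirectional LSTMs; each outputs 2 x 64 = 128 features
        self.lstm1 = nn.LSTM(input_size=80, hidden_size=64, direction="bidirect")
        self.lstm2 = nn.LSTM(input_size=128, hidden_size=64, direction="bidirect")
        # Dot-product attention
        self.query = nn.Linear(128, 128)
        self.softmax = nn.Softmax(axis=-1)
        # Fully connected classification head
        self.fc1 = nn.Linear(128, 64)
        self.fc1_relu = nn.ReLU()
        self.fc2 = nn.Linear(64, 32)
        self.classifier = nn.Linear(32, num_classes)
        self.cls_softmax = nn.Softmax(axis=-1)

    def forward(self, x):
        # Input [N, 126, 80, 1], as implied by conv1 (126 channels) and lstm1 (input_size=80)
        x = self.conv1(x)
        x = self.relu1(x)
        x = self.bn1(x)
        x = self.conv2(x)
        x = self.relu2(x)
        x = self.bn2(x)          # [N, 1, 80, 1]
        x = x.squeeze(axis=-1)   # [N, 1, 80]: a length-1 sequence of 80 features
        x, _ = self.lstm1(x)     # [N, 1, 128]
        x, _ = self.lstm2(x)     # [N, 1, 128]
        x = x.squeeze(axis=1)    # [N, 128]
        # Because x is [N, 128], the scores below are [N, N]: attention runs
        # across the samples in a batch rather than across time steps.
        q = self.query(x)
        attScores = paddle.matmul(q, x, transpose_y=True)
        attScores = self.softmax(attScores)
        attVector = paddle.matmul(attScores, x)
        output = self.fc1(attVector)
        output = self.fc1_relu(output)
        output = self.fc2(output)
        output = self.classifier(output)
        # Returns probabilities. If train.py uses paddle.nn.CrossEntropyLoss with its
        # default use_softmax=True, softmax is applied twice and the per-sample loss
        # cannot drop below ln(e + 7) - 1 ≈ 1.274 for 8 classes (the 1.2740 in the log below).
        output = self.cls_softmax(output)
        return output


model = SpeechCommandModel(num_classes=8)
print(model)
```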

```
SpeechCommandModel(
  (conv1): Conv2D(126, 10, kernel_size=[5, 1], padding=SAME, data_format=NCHW)
  (relu1): ReLU()
  (bn1): BatchNorm2D(num_features=10, momentum=0.9, epsilon=1e-05)
  (conv2): Conv2D(10, 1, kernel_size=[5, 1], padding=SAME, data_format=NCHW)
  (relu2): ReLU()
  (bn2): BatchNorm2D(num_features=1, momentum=0.9, epsilon=1e-05)
  (lstm1): LSTM(80, 64
    (0): BiRNN(
      (cell_fw): LSTMCell(80, 64)
      (cell_bw): LSTMCell(80, 64)
    )
  )
  (lstm2): LSTM(128, 64
    (0): BiRNN(
      (cell_fw): LSTMCell(128, 64)
      (cell_bw): LSTMCell(128, 64)
    )
  )
  (query): Linear(in_features=128, out_features=128, dtype=float32)
  (softmax): Softmax(axis=-1)
  (fc1): Linear(in_features=128, out_features=64, dtype=float32)
  (fc1_relu): ReLU()
  (fc2): Linear(in_features=64, out_features=32, dtype=float32)
  (classifier): Linear(in_features=32, out_features=8, dtype=float32)
  (cls_softmax): Softmax(axis=-1)
)
```

Model Training

The speech network is trained with PaddlePaddle's high-level API, reaching a training accuracy of around 95%.

Even without a GPU, this small network trains very quickly with PaddlePaddle.

!python speech_cmd_cls/train.py

```
!python train.py
```

```
/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/setuptools/depends.py:2: DeprecationWarning: the imp module is deprecated in favour of importlib; see the module's documentation for alternative uses
  import imp
The loss value printed in the log is the current step, and the metric is the average value of previous steps.
Epoch 1/20
/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/fluid/layers/utils.py:77: DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working
  return (isinstance(seq, collections.Sequence) and
/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/nn/layer/norm.py:653: UserWarning: When training, we now always track global mean and variance.
  "When training, we now always track global mean and variance.")
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9538 - 17ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.6995 - acc: 0.9657 - 6ms/step
Eval samples: 175
Epoch 2/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9551 - 16ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.5585 - acc: 0.9714 - 6ms/step
Eval samples: 175
Epoch 3/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9525 - 16ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.4175 - acc: 0.9771 - 6ms/step
Eval samples: 175
Epoch 4/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9564 - 14ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.5593 - acc: 0.9714 - 6ms/step
Eval samples: 175
Epoch 5/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9538 - 13ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.3246 - acc: 0.9714 - 5ms/step
Eval samples: 175
Epoch 6/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9447 - 14ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.5576 - acc: 0.9714 - 6ms/step
Eval samples: 175
Epoch 7/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9460 - 14ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.4488 - acc: 0.9714 - 6ms/step
Eval samples: 175
Epoch 8/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9525 - 15ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.7026 - acc: 0.9429 - 6ms/step
Eval samples: 175
Epoch 9/20
step 193/193 [==============================] - loss: 1.7740 - acc: 0.9389 - 15ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.7024 - acc: 0.9486 - 6ms/step
Eval samples: 175
Epoch 10/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9460 - 14ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.5597 - acc: 0.9543 - 6ms/step
Eval samples: 175
Epoch 11/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9467 - 15ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.5596 - acc: 0.9657 - 6ms/step
Eval samples: 175
Epoch 12/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9506 - 14ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.5625 - acc: 0.9714 - 6ms/step
Eval samples: 175
Epoch 13/20
step 193/193 [==============================] - loss: 1.7740 - acc: 0.9571 - 14ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.5593 - acc: 0.9657 - 6ms/step
Eval samples: 175
Epoch 14/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9525 - 14ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.6989 - acc: 0.9600 - 6ms/step
Eval samples: 175
Epoch 15/20
step 193/193 [==============================] - loss: 1.7740 - acc: 0.9512 - 14ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.8454 - acc: 0.9543 - 6ms/step
Eval samples: 175
Epoch 16/20
step 193/193 [==============================] - loss: 1.7740 - acc: 0.9473 - 15ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.7026 - acc: 0.9543 - 6ms/step
Eval samples: 175
Epoch 17/20
step 193/193 [==============================] - loss: 1.2741 - acc: 0.9519 - 15ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.3661 - acc: 0.9771 - 6ms/step
Eval samples: 175
Epoch 18/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9590 - 15ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.4335 - acc: 0.9714 - 6ms/step
Eval samples: 175
Epoch 19/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9590 - 14ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.6870 - acc: 0.9657 - 6ms/step
Eval samples: 175
Epoch 20/20
step 193/193 [==============================] - loss: 1.2740 - acc: 0.9545 - 15ms/step
Eval begin...
step 22/22 [==============================] - loss: 1.6629 - acc: 0.9486 - 6ms/step
Eval samples: 175
```

Model Evaluation and Prediction

Once training is complete, you can run a preliminary evaluation of the model, or verify it in real time locally with a microphone:

!python speech_cmd_cls/eval.py

!python speech_cmd_cls/realtime_infer.py
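realtime_infer.py is not reproduced in this article. As a hedged sketch of the idea only, a real-time loop could keep a rolling 500 ms window of microphone samples (matching the clip length used for recording), classify that window, and act only on confident predictions that are not the "other" class. `RollingWindow`, `decide`, the 16 kHz rate, and the 0.8 threshold are all assumptions, and the microphone read and model call are left out.

```python
import numpy as np

# Label order as printed by preprocess.py
LABELS = ["左", "右", "下", "停", "跑", "跳", "打", "其它"]


class RollingWindow:
    """Keep the most recent window_ms of samples from a microphone stream."""

    def __init__(self, sr=16000, window_ms=500):
        self.buf = np.zeros(sr * window_ms // 1000, dtype=np.int16)

    def push(self, chunk):
        """Append a new chunk, discarding the oldest samples; return the window."""
        chunk = np.asarray(chunk, dtype=np.int16)[-len(self.buf):]
        self.buf = np.concatenate([self.buf[len(chunk):], chunk])
        return self.buf


def decide(probs, threshold=0.8):
    """Turn one softmax vector into a label, or None if unsure or "other"."""
    idx = int(np.argmax(probs))
    if probs[idx] < threshold or LABELS[idx] == "其它":
        return None
    return LABELS[idx]
```

Ignoring low-confidence windows trades a little responsiveness for fewer accidental commands, which matters given the generalization caveat that follows.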

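Important: even if the model scores well on the validation set, a network and dataset this small generalize relatively poorly. When you switch devices or speakers, or move to an environment with different background noise, results may be unsatisfactory.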

```
!python eval.py
```

```
/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/setuptools/depends.py:2: DeprecationWarning: the imp module is deprecated in favour of importlib; see the module's documentation for alternative uses
  import imp
Eval begin...
/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/fluid/layers/utils.py:77: DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working
  return (isinstance(seq, collections.Sequence) and
step 3/3 - loss: 1.3763 - acc: 0.9543 - 27ms/step
Eval samples: 175
{'loss': [1.3763338], 'acc': 0.9542857142857143}
```
